Complete code

The code below changes a few parts of the code from the earlier post "TensorFlow introduction 2: MNIST dataset training and related function explanations". The changes mainly add namespaces.
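The model in the listing that follows is softmax regression. `wx_b = xW + b` holds the raw scores (logits), and `prediction` applies softmax to them. One detail worth checking: `tf.nn.softmax_cross_entropy_with_logits_v2` applies softmax internally, so it expects the logits rather than probabilities that have already been normalized. The sketch below uses plain NumPy in place of the TensorFlow ops, and its function names are illustrative. It reproduces the loss and accuracy computations and shows what happens when softmax is applied twice.

```python
import numpy as np

def softmax(z):
    # Shift by the row max for numerical stability, then normalize each row.
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def mean_cross_entropy(labels, logits):
    # Per-row -sum(y * log(softmax(logits))), averaged over the batch,
    # mirroring reduce_mean(softmax_cross_entropy_with_logits_v2(...)).
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(np.mean(-(labels * log_probs).sum(axis=1)))

def accuracy(labels, scores):
    # Compare true and predicted class indices, cast booleans to float32, average.
    correct = np.equal(np.argmax(labels, axis=1), np.argmax(scores, axis=1))
    return float(np.mean(correct.astype(np.float32)))

# One sample, 10 classes; the model is very confident (and correct) about class 3.
wx_b = np.zeros((1, 10))
wx_b[0, 3] = 10.0
y = np.eye(10)[[3]]                 # one-hot label for class 3
prediction = softmax(wx_b)

loss_from_logits = mean_cross_entropy(y, wx_b)       # intended input: logits
loss_from_probs = mean_cross_entropy(y, prediction)  # softmax applied twice
acc = accuracy(y, prediction)
print(loss_from_logits, loss_from_probs, acc)
```

With logits, a confident correct prediction gives a loss near 0. With probabilities as input, the loss cannot fall below log(1 + 9/e) ≈ 1.46 for 10 classes. The accuracy is the same either way, but the training signal is weaker.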

```python
from tensorflow.examples.tutorials.mnist import input_data  # TensorFlow 1.x only
import numpy as np
import tensorflow as tf
import matplotlib.pyplot as plt

# Raw string so the backslashes in the Windows path are not treated as escapes
mnist = input_data.read_data_sets(r'E:\img\mnist', one_hot=True)

# Number of training examples (not the number of batches)
n_train = mnist.train.num_examples
print(n_train)

with tf.name_scope('input'):
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    y = tf.placeholder(tf.float32, [None, 10], name='y-input')

with tf.name_scope('layer'):
    with tf.name_scope('weights'):
        W = tf.Variable(tf.zeros([784, 10]))
    with tf.name_scope('biases'):
        b = tf.Variable(tf.zeros([10]))
    with tf.name_scope('wx_b'):
        wx_b = tf.matmul(x, W) + b          # raw scores (logits)
    with tf.name_scope('prediction'):
        prediction = tf.nn.softmax(wx_b)    # class probabilities

with tf.name_scope('loss'):
    # The _v2 op applies softmax itself, so it must receive the logits, not `prediction`
    loss = tf.reduce_mean(
        tf.nn.softmax_cross_entropy_with_logits_v2(labels=y, logits=wx_b))

with tf.name_scope('train_step'):
    train_step = tf.train.GradientDescentOptimizer(0.2).minimize(loss)

with tf.name_scope('results'):
    with tf.name_scope('prediction_sets'):
        c_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(prediction, 1))
    with tf.name_scope('accuracy'):
        # Cast the boolean vector to float32 and take its mean to get the accuracy
        accuracy = tf.reduce_mean(tf.cast(c_prediction, tf.float32))

init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    # Write the graph to logs/ so TensorBoard can display it
    writer = tf.summary.FileWriter('logs/', sess.graph)
    # Each iteration trains on one mini-batch of 100, so this counts steps, not full epochs
    for epoch in range(300):
        batch_x, batch_y = mnist.train.next_batch(100)
        sess.run(train_step, feed_dict={x: batch_x, y: batch_y})
        acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
        print("Iter {}, Testing Acc : {}".format(epoch, acc))
    writer.close()
```

First look at summary

`tf.summary` provides operations that monitor the values of tensors in the network. They exist mainly for inspection and do not affect the data flow itself.

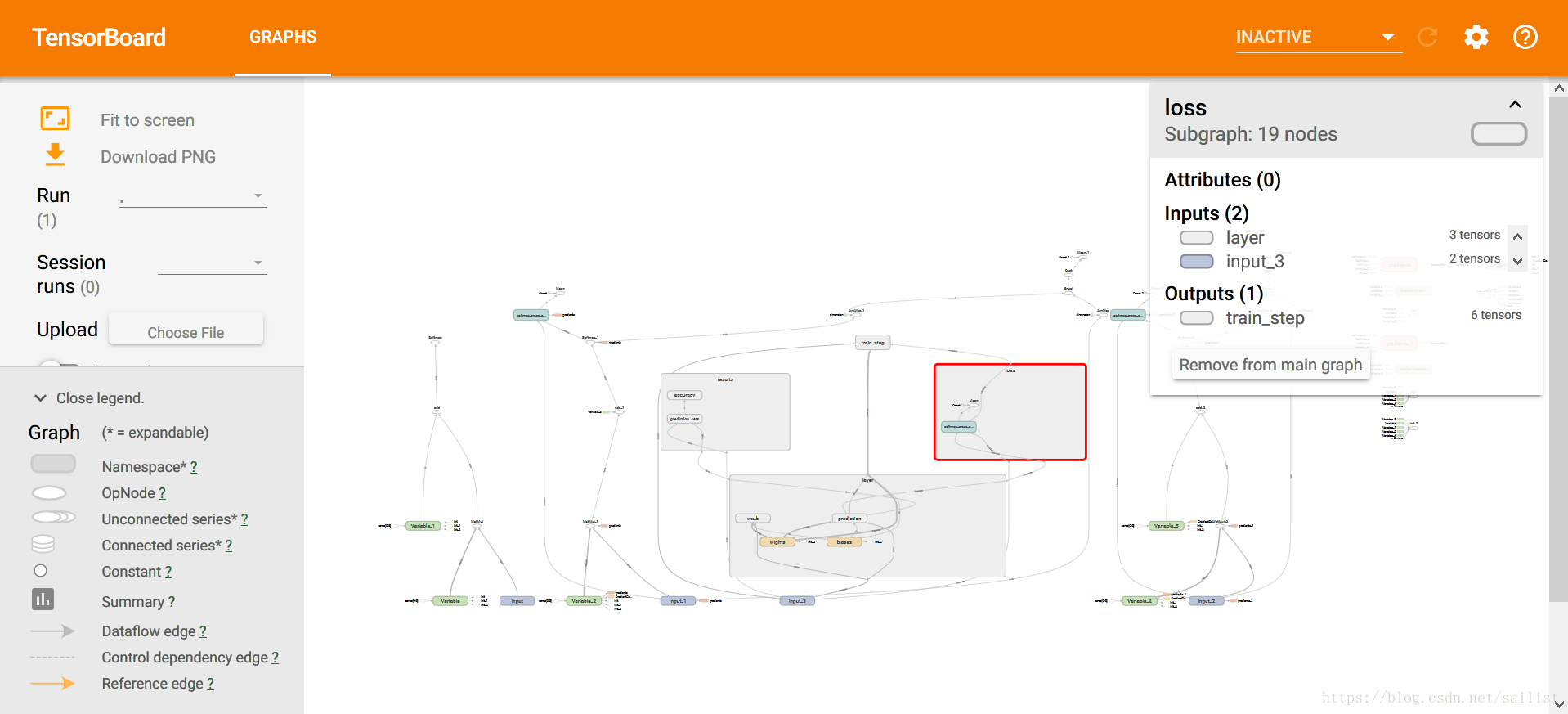

Viewing the graph

```python
with tf.Session() as sess:
    sess.run(init)
    writer = tf.summary.FileWriter('logs/', sess.graph)
    for epoch in range(300):
        batch_x, batch_y = mnist.train.next_batch(100)
        sess.run(train_step, feed_dict={x: batch_x, y: batch_y})
        acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
        print("Iter {}, Testing Acc : {}".format(epoch, acc))
    writer.close()
```

Running the script opens the session at the end:

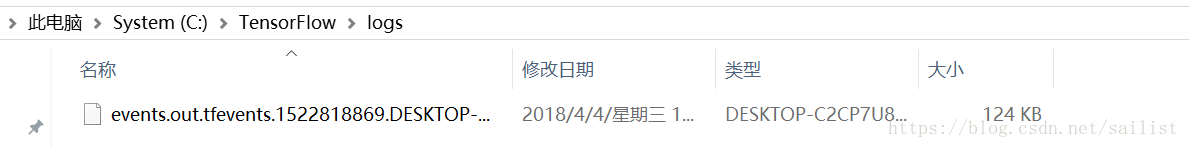

The line `writer = tf.summary.FileWriter('logs/', sess.graph)` writes an event file, containing the graph passed as the second argument, into the directory given as the first argument (`logs/` here):

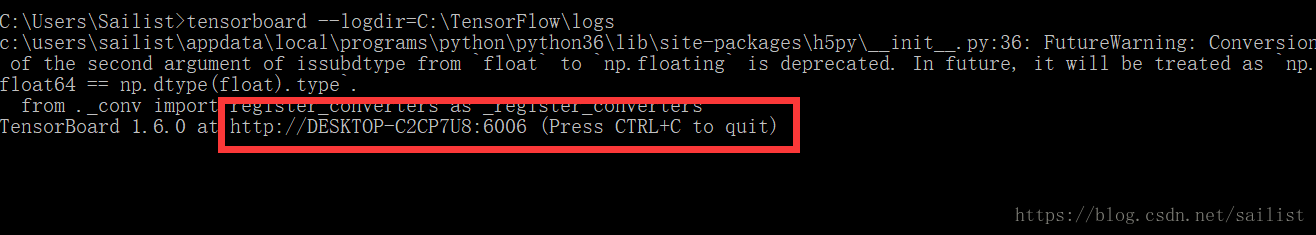
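`FileWriter` names its output `events.out.tfevents.<timestamp>.<hostname>`, and every run of the script adds another such file to `logs/`. The helper below is a hypothetical convenience function, not part of TensorFlow. It is a minimal sketch that uses only the standard library to find the newest event file, which helps confirm that a run actually wrote its graph.

```python
import glob
import os

def latest_event_file(logdir="logs/"):
    # Match files named the way FileWriter names them.
    pattern = os.path.join(logdir, "events.out.tfevents.*")
    files = glob.glob(pattern)
    if not files:
        return None
    # Each run creates a new file, so the most recently modified one is the latest run.
    return max(files, key=os.path.getmtime)
```

For example, after running the training script, `print(latest_event_file('logs/'))` should print the path of a file like the one in the screenshot.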

Open a command prompt and run

tensorboard --logdir=[given directory]

The command prints a link (TensorBoard serves on port 6006 by default). Open the link in a browser. Chrome or Firefox is recommended, because in the author's own test Sogou Browser could not display the page correctly.
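The flag must be written with two ASCII hyphens (`--logdir`). A typographic dash, which is easy to pick up when copying from a web page, is not recognized as a flag. If you prefer to start TensorBoard from Python, here is a minimal sketch using `subprocess`. The function names are hypothetical, and it assumes the `tensorboard` executable is on your PATH.

```python
import shutil
import subprocess

def tensorboard_command(logdir, port=6006):
    # Two ASCII hyphens before each flag; 6006 is TensorBoard's default port.
    return ["tensorboard", f"--logdir={logdir}", f"--port={port}"]

def launch_tensorboard(logdir, port=6006):
    exe = shutil.which("tensorboard")
    if exe is None:
        raise FileNotFoundError("tensorboard is not on PATH")
    cmd = tensorboard_command(logdir, port)
    cmd[0] = exe
    # Popen returns immediately; TensorBoard keeps serving until the process is stopped.
    return subprocess.Popen(cmd)
```

Usage: `proc = launch_tensorboard("logs")`, then open the printed link and call `proc.terminate()` when finished.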

Drag with the left mouse button held down to move the graph, and use the scroll wheel to zoom in and out. The right mouse button can separate a node from the main graph so it is displayed on its own, which makes it easier to inspect. Double-click a rounded-rectangle node to expand it and see its details. That covers the basic usage.

Namespaces

Find the `layer` node and double-click it. The expanded node corresponds exactly to the code we wrote earlier:

```python
with tf.name_scope('layer'):
    with tf.name_scope('weights'):
        W = tf.Variable(tf.zeros([784, 10]))
    with tf.name_scope('biases'):
        b = tf.Variable(tf.zeros([10]))
    with tf.name_scope('wx_b'):
        wx_b = tf.matmul(x, W) + b
    with tf.name_scope('prediction'):
        prediction = tf.nn.softmax(wx_b)
```

Namespaces group variables and operations into categories. Without them, the generated graph of even a moderately large network can become so complex that its structure is hard to view or understand. When defining each layer, deliberately give each group of related variables its own namespace.
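To make the grouping idea concrete without TensorFlow installed, here is a toy sketch. The `ToyGraph` class is hypothetical and is not a TensorFlow API. It mimics how nested `name_scope` blocks prefix names with `/`-separated paths, so a variable created inside the `layer` and `weights` scopes gets a name like `layer/weights/Variable`. TensorBoard collapses nodes that share a prefix into one expandable box. The op names below assume TensorFlow 1.x defaults.

```python
from contextlib import contextmanager

class ToyGraph:
    """Hypothetical stand-in that mimics how nested name scopes prefix op names."""

    def __init__(self):
        self._scopes = []
        self.names = []

    @contextmanager
    def name_scope(self, name):
        # Push the scope on entry and always pop it on exit.
        self._scopes.append(name)
        try:
            yield
        finally:
            self._scopes.pop()

    def add_op(self, name):
        # Unlike TensorFlow, this toy does not add _1, _2 suffixes to repeated names.
        full = "/".join(self._scopes + [name])
        self.names.append(full)
        return full

    def groups(self):
        # Group names by their top-level scope, like the collapsed boxes in TensorBoard.
        top = {}
        for n in self.names:
            top.setdefault(n.split("/")[0], []).append(n)
        return top

g = ToyGraph()
with g.name_scope("input"):
    g.add_op("x-input")
    g.add_op("y-input")
with g.name_scope("layer"):
    with g.name_scope("weights"):
        g.add_op("Variable")
    with g.name_scope("biases"):
        g.add_op("Variable")
    with g.name_scope("wx_b"):
        g.add_op("MatMul")
        g.add_op("add")
    with g.name_scope("prediction"):
        g.add_op("Softmax")

print(g.names)
```

Here the two `Variable` names do not clash because they live in different scopes. That is the same reason the real graph stays readable when each group of variables gets its own namespace.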