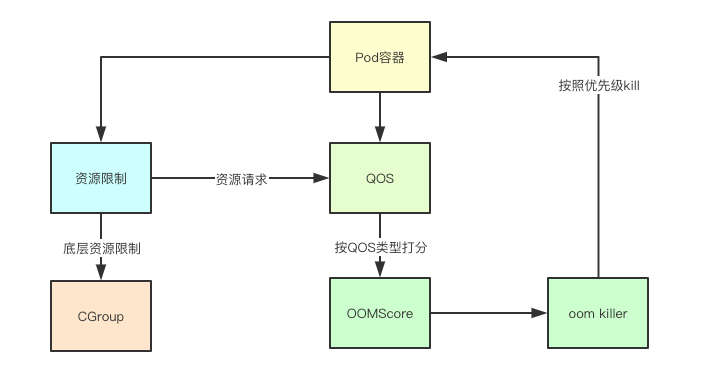

QOS is a resource protection mechanism in k8s, aimed mainly at incompressible resources such as memory. For memory, it assigns OOM scores to the containers of different Pods and relies on the kernel's OOM policy to enforce them. When a node runs short of memory, the kernel kills the lower-priority Pods first (the higher the score, the lower the priority). Today we will analyze the implementation behind it.

1. Key Basic Features

1.1 Everything is a file

In Linux, everything is a file, and cgroups are also controlled through files. Below is the cgroup configuration of a container I created for a Pod with a memory limit of 200Mi:

# pwd

/sys/fs/cgroup

# cat ./memory/kubepods/pod8e172a5c-57f5-493d-a93d-b0b64bca26df/f2fe67dc90cbfd57d873cd8a81a972213822f3f146ec4458adbe54d868cf410c/memory.limit_in_bytes

209715200
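Because the limit is just the content of a file, any program can read and interpret it. The following is a minimal sketch that parses such content and converts it to MiB. The helper name `parseMemoryLimit` is hypothetical, and the sample value is the one printed above.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// parseMemoryLimit is a hypothetical helper: it converts the text content of
// a cgroup v1 memory.limit_in_bytes file into a byte count.
func parseMemoryLimit(content string) (int64, error) {
	return strconv.ParseInt(strings.TrimSpace(content), 10, 64)
}

func main() {
	// Sample content, copied from the cat output above (with its newline).
	limit, err := parseMemoryLimit("209715200\n")
	if err != nil {
		fmt.Println("parse error:", err)
		return
	}
	fmt.Printf("%d bytes = %d MiB\n", limit, limit/(1024*1024))
}
```

On a real node the content would come from reading the file itself, for example with `os.ReadFile`.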

1.2 Kernel memory configuration

Here we focus on two memory-related kernel settings. VMOvercommitMemory (vm.overcommit_memory) is set to 1, which tells the kernel to always allow memory overcommit, so allocation requests are granted without first checking how much memory is free. VMPanicOnOOM (vm.panic_on_oom) is set to 0, which means that when memory runs out the kernel does not panic but triggers the oom_killer to select processes to kill. QOS works by influencing which processes the oom_killer selects.

func setupKernelTunables(option KernelTunableBehavior) error {

desiredState := map[string]int{

utilsysctl.VMOvercommitMemory: utilsysctl.VMOvercommitMemoryAlways,

utilsysctl.VMPanicOnOOM: utilsysctl.VMPanicOnOOMInvokeOOMKiller,

utilsysctl.KernelPanic: utilsysctl.KernelPanicRebootTimeout,

utilsysctl.KernelPanicOnOops: utilsysctl.KernelPanicOnOopsAlways,

utilsysctl.RootMaxKeys: utilsysctl.RootMaxKeysSetting,

utilsysctl.RootMaxBytes: utilsysctl.RootMaxBytesSetting,

}
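The idea behind the desired-state map can be sketched as a comparison of desired and current values. This is a simplified illustration, not the kubelet's code: the function `tunablesToFix` and the sample current state are hypothetical, and the keys are written as paths relative to /proc/sys on the assumption that this is how the sysctl names are spelled.

```go
package main

import (
	"fmt"
	"sort"
)

// tunablesToFix returns the names of the settings whose current value is
// missing or differs from the desired one, sorted for stable output.
func tunablesToFix(desired, current map[string]int) []string {
	var names []string
	for name, want := range desired {
		if got, ok := current[name]; !ok || got != want {
			names = append(names, name)
		}
	}
	sort.Strings(names)
	return names
}

func main() {
	desired := map[string]int{
		"vm/overcommit_memory": 1, // always overcommit
		"vm/panic_on_oom":      0, // invoke the oom_killer instead of panicking
	}
	// Hypothetical current state of a node, for illustration only.
	current := map[string]int{
		"vm/overcommit_memory": 0,
		"vm/panic_on_oom":      0,
	}
	fmt.Println(tunablesToFix(desired, current))
}
```

What happens to a mismatching setting (report an error, log a warning, or overwrite the value) is a separate policy decision, which is presumably what the `option` parameter of setupKernelTunables controls.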

2. QOS scoring mechanism and judgment implementation

The QOS class of a Pod is determined from the resource constraints in its requests and limits, and the OOM score is then derived from that class. Let's take a quick look at the implementation of this part.

2.1 Determine the QOS type according to the container

2.1.1 Build the container list

First, collect all containers of the Pod. Note that both init containers and regular (business) containers are included here.

requests := v1.ResourceList{}

limits := v1.ResourceList{}

zeroQuantity := resource.MustParse("0")

isGuaranteed := true

allContainers := []v1.Container{}

allContainers = append(allContainers, pod.Spec.Containers...)

// append all init containers

allContainers = append(allContainers, pod.Spec.InitContainers...)

2.1.2 Handling Requests and limits

Next, the requests and limits of every container are traversed and summed per resource into two collections. Whether the Pod can be Guaranteed depends first on the limits: every container must set a limit for both CPU and memory. Only then can the Pod possibly be Guaranteed.

for _, container := range allContainers {

// process requests

for name, quantity := range container.Resources.Requests {

if !isSupportedQoSComputeResource(name) {

continue

}

if quantity.Cmp(zeroQuantity) == 1 {

delta := quantity.DeepCopy()

if _, exists := requests[name]; !exists {

requests[name] = delta

} else {

delta.Add(requests[name])

requests[name] = delta

}

}

}

// process limits

qosLimitsFound := sets.NewString()

for name, quantity := range container.Resources.Limits {

if !isSupportedQoSComputeResource(name) {

continue

}

if quantity.Cmp(zeroQuantity) == 1 {

qosLimitsFound.Insert(string(name))

delta := quantity.DeepCopy()

if _, exists := limits[name]; !exists {

limits[name] = delta

} else {

delta.Add(limits[name])

limits[name] = delta

}

}

}

if !qosLimitsFound.HasAll(string(v1.ResourceMemory), string(v1.ResourceCPU)) {

// every container must set both a cpu and a memory limit

isGuaranteed = false

}

}

2.1.3 BestEffort

If no container in the Pod sets any requests or limits, the Pod is BestEffort.

if len(requests) == 0 && len(limits) == 0 {

return v1.PodQOSBestEffort

}

2.1.4 Guaranteed

For a Pod to be Guaranteed, the request for every resource must equal its limit, and the number of resources with requests must equal the number of resources with limits.

// Check is requests match limits for all resources.

if isGuaranteed {

for name, req := range requests {

if lim, exists := limits[name]; !exists || lim.Cmp(req) != 0 {

isGuaranteed = false

break

}

}

}

if isGuaranteed &&

len(requests) == len(limits) {

return v1.PodQOSGuaranteed

}

2.1.5 Burstable

If the Pod is neither of the above two, it falls into the last class, Burstable.

return v1.PodQOSBurstable
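To see the three classes side by side, the logic of section 2.1 can be condensed into a self-contained sketch. It is a simplification under stated assumptions: `Container` and `qosClass` are hypothetical stand-ins for the k8s types, quantities are plain integers (CPU in millicores, memory in bytes), and the API server's defaulting of requests from limits is not modeled.

```go
package main

import "fmt"

// Container is a simplified stand-in for v1.Container: resource name -> amount.
type Container struct {
	Requests map[string]int64
	Limits   map[string]int64
}

// supported mimics the filter that only lets cpu and memory affect QOS.
func supported(name string) bool { return name == "cpu" || name == "memory" }

// qosClass follows the same steps as the excerpts above on the simplified types.
func qosClass(containers []Container) string {
	requests := map[string]int64{}
	limits := map[string]int64{}
	isGuaranteed := true
	for _, c := range containers {
		for name, q := range c.Requests {
			if supported(name) && q > 0 {
				requests[name] += q
			}
		}
		found := map[string]bool{}
		for name, q := range c.Limits {
			if supported(name) && q > 0 {
				found[name] = true
				limits[name] += q
			}
		}
		// Every container must set both a cpu and a memory limit.
		if !found["cpu"] || !found["memory"] {
			isGuaranteed = false
		}
	}
	if len(requests) == 0 && len(limits) == 0 {
		return "BestEffort"
	}
	if isGuaranteed {
		for name, req := range requests {
			if lim, ok := limits[name]; !ok || lim != req {
				isGuaranteed = false
				break
			}
		}
	}
	if isGuaranteed && len(requests) == len(limits) {
		return "Guaranteed"
	}
	return "Burstable"
}

func main() {
	same := map[string]int64{"cpu": 500, "memory": 209715200}
	fmt.Println(qosClass([]Container{{}}))
	fmt.Println(qosClass([]Container{{Requests: same, Limits: same}}))
	fmt.Println(qosClass([]Container{{
		Requests: map[string]int64{"memory": 104857600},
		Limits:   map[string]int64{"memory": 209715200},
	}}))
}
```

The three calls in `main` cover a Pod without any constraints, a Pod whose requests equal its limits for both resources, and a Pod that only constrains memory with a request below the limit.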

2.2 QOS OOM scoring mechanism

2.2.1 OOM scoring mechanism

The value of guaranteedOOMScoreAdj is -998, which follows from how OOM handling works. The processes on a node fall mainly into three groups: the kubelet main process, the docker process, and the business container processes. An OOM score adjustment of -1000 means the process will never be killed by the oom_killer. Business processes must stay killable, because you cannot guarantee that your business will never misbehave, so the lowest value they could get is -999. In QOS, however, -999 is reserved for the kubelet and docker processes, which leaves -998 and above for business containers (the higher the score, the easier it is to be killed).

// KubeletOOMScoreAdj is the OOM score adjustment for Kubelet

KubeletOOMScoreAdj int = -999

// DockerOOMScoreAdj is the OOM score adjustment for Docker

DockerOOMScoreAdj int = -999

// KubeProxyOOMScoreAdj is the OOM score adjustment for kube-proxy

KubeProxyOOMScoreAdj int = -999

guaranteedOOMScoreAdj int = -998

besteffortOOMScoreAdj int = 1000
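The effect of these constants can be illustrated by ranking a few processes by their adjustment alone. This is a deliberately simplified sketch: the `proc` type and `killOrder` function are hypothetical, and the real kernel also takes each process's actual memory usage into account, which is ignored here.

```go
package main

import (
	"fmt"
	"sort"
)

// proc is a hypothetical record: a process name and its oom_score_adj.
type proc struct {
	Name string
	Adj  int
}

// killOrder returns the names ordered from most to least likely to be
// killed, looking only at the adjustment. Processes at -1000 are exempt
// and left out.
func killOrder(procs []proc) []string {
	candidates := make([]proc, 0, len(procs))
	for _, p := range procs {
		if p.Adj > -1000 {
			candidates = append(candidates, p)
		}
	}
	// Highest adjustment first; ties keep their input order.
	sort.SliceStable(candidates, func(i, j int) bool {
		return candidates[i].Adj > candidates[j].Adj
	})
	names := make([]string, 0, len(candidates))
	for _, p := range candidates {
		names = append(names, p.Name)
	}
	return names
}

func main() {
	procs := []proc{
		{"kubelet", -999},
		{"docker", -999},
		{"guaranteed-container", -998},
		{"besteffort-container", 1000},
	}
	fmt.Println(killOrder(procs))
}
```

With the values from the constants above, the BestEffort container comes first, the Guaranteed container second, and the node components last.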

2.2.2 Key Pods

A critical Pod is a special case. It can be a Burstable or BestEffort Pod and still get the same OOM score as a Guaranteed Pod. There are three kinds of critical Pod: static Pods, mirror Pods, and high-priority Pods.

if types.IsCriticalPod(pod) {

return guaranteedOOMScoreAdj

}

The check is implemented as follows:

func IsCriticalPod(pod *v1.Pod) bool {

if IsStaticPod(pod) {

return true

}

if IsMirrorPod(pod) {

return true

}

if pod.Spec.Priority != nil && IsCriticalPodBasedOnPriority(*pod.Spec.Priority) {

return true

}

return false

}
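The three helper checks are not shown in the excerpt. The sketch below illustrates what they plausibly look at, under assumptions that should be verified against the k8s source: the annotation keys, the "api" source value, and the priority threshold of 2000000000 are written from memory, and the `Pod` type and all function names are hypothetical simplifications.

```go
package main

import "fmt"

// Pod is a simplified stand-in for v1.Pod with only the fields the checks use.
type Pod struct {
	Annotations map[string]string
	Priority    *int32
}

// Assumed values; verify them against the k8s source before relying on them.
const (
	configSourceKey              = "kubernetes.io/config.source" // where the kubelet got the Pod from
	configMirrorKey              = "kubernetes.io/config.mirror" // present on mirror Pods
	apiserverSource              = "api"
	systemCriticalPriority int32 = 2000000000
)

// isStaticPod: the Pod has a known source and it is not the API server.
func isStaticPod(p Pod) bool {
	src, ok := p.Annotations[configSourceKey]
	return ok && src != apiserverSource
}

// isMirrorPod: the Pod carries the mirror annotation.
func isMirrorPod(p Pod) bool {
	_, ok := p.Annotations[configMirrorKey]
	return ok
}

// isCriticalPod combines the three checks in the same order as the excerpt.
func isCriticalPod(p Pod) bool {
	if isStaticPod(p) || isMirrorPod(p) {
		return true
	}
	return p.Priority != nil && *p.Priority >= systemCriticalPriority
}

func main() {
	high := int32(2000001000)
	fmt.Println(isCriticalPod(Pod{Annotations: map[string]string{configSourceKey: "file"}}))
	fmt.Println(isCriticalPod(Pod{Priority: &high}))
	fmt.Println(isCriticalPod(Pod{Annotations: map[string]string{configSourceKey: "api"}}))
}
```

The first two Pods in `main` count as critical (a file-sourced Pod and a Pod with a very high priority), while an ordinary Pod that came from the API server does not.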

2.2.3 Guaranteed and BestEffort

Both classes have fixed scores: Guaranteed gets -998 and BestEffort gets 1000.

switch v1qos.GetPodQOS(pod) {

case v1.PodQOSGuaranteed:

// Guaranteed containers should be the last to get killed.

return guaranteedOOMScoreAdj

case v1.PodQOSBestEffort:

return besteffortOOMScoreAdj

}

2.2.4 Burstable

The key line is: `oomScoreAdjust := 1000 - (1000*memoryRequest)/memoryCapacity`. The more memory a container requests, the larger `(1000*memoryRequest)/memoryCapacity` becomes and the smaller the final result. Conversely, the less memory a container requests, the higher its score, and the easier it is to kill. The result is then clamped to the range from 1000 + guaranteedOOMScoreAdj (that is, 2) to 999, so a Burstable container always scores higher than a Guaranteed one and lower than a BestEffort one.

memoryRequest := container.Resources.Requests.Memory().Value()

oomScoreAdjust := 1000 - (1000*memoryRequest)/memoryCapacity

// A guaranteed pod using 100% of memory can have an OOM score of 10.

// Ensure that burstable pods have a higher OOM score adjustment.

if int(oomScoreAdjust) < (1000 + guaranteedOOMScoreAdj) {

return (1000 + guaranteedOOMScoreAdj)

}

// Give burstable pods a higher chance of survival over besteffort pods.

if int(oomScoreAdjust) == besteffortOOMScoreAdj {

return int(oomScoreAdjust - 1)

}

return int(oomScoreAdjust)
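A few worked values make the formula and its two boundary cases concrete. The sketch below wraps the excerpt's logic in a standalone function. The function name `burstableOOMScoreAdj` and the 4 GiB node capacity are assumptions chosen for illustration.

```go
package main

import "fmt"

const (
	guaranteedOOMScoreAdj = -998
	besteffortOOMScoreAdj = 1000
)

// burstableOOMScoreAdj applies the formula and the two boundary checks
// from the excerpt above to plain integers (both arguments in bytes).
func burstableOOMScoreAdj(memoryRequest, memoryCapacity int64) int {
	adj := 1000 - (1000*memoryRequest)/memoryCapacity
	// Never score as low as a Guaranteed container.
	if int(adj) < 1000+guaranteedOOMScoreAdj {
		return 1000 + guaranteedOOMScoreAdj
	}
	// Never score as high as a BestEffort container.
	if int(adj) == besteffortOOMScoreAdj {
		return int(adj - 1)
	}
	return int(adj)
}

func main() {
	const gib = int64(1) << 30
	capacity := 4 * gib // hypothetical node with 4 GiB of memory

	fmt.Println(burstableOOMScoreAdj(1*gib, capacity))    // 750: a quarter of the node
	fmt.Println(burstableOOMScoreAdj(0, capacity))        // 999: no memory request
	fmt.Println(burstableOOMScoreAdj(capacity, capacity)) // 2: the whole node
}
```

A container requesting a quarter of the node gets 1000 - 250 = 750, a container with no memory request is capped just below BestEffort at 999, and a container requesting the whole node is raised to 2.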

Okay, that's it for today. I was quite confused before reading the code, and after reading it everything suddenly made sense. The saying is right: in front of the source code, there are no secrets. Keep going!

k8s source code reading e-book address: https://www.yuque.com/baxiaoshi/tyado3

> WeChat ID: baxiaoshi2020
> Follow the official account to read more source code analysis articles

> Follow www.sreguide.com for more articles