Background

Why does the oplogReplay option only let you set the end time for applying the oplog (oplogLimit), with no way to set a start time?

Preface

Excerpted from https://www.cnblogs.com/lijiaman/p/13531574.html


The oplogLimit parameter defines the point in time up to which the database is restored. In other words, mongorestore sets only the end position of the oplog replay and gives no way to specify the start position. This raises a question. Consider the following scenario: I took a full backup at T3, and a database error occurred at T4. Recovery is done in two steps:

Phase 1: Use the full backup to restore the database to time T3;

Phase 2: Use the oplog to roll the database forward to just before the failure at T4. The point before the T4 failure is controlled by the oplogLimit parameter. However, the oplog replay does not start at T3 but at T2, where T2 is the earliest time recorded in the oplog, and that is not under our control.

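Note: "not under our control" here means that when replaying the oplog with mongorestore, we cannot specify a start time. There is still a way to make the oplog start at T3: use bsondump to inspect the last record in the full backup, and when backing up the oplog, use the query option to filter out the earlier entries. That is not my concern here, though. What I want to know is why mongorestore does not offer a start-time parameter for the recovery.

When replaying the oplog, mongorestore bounds only the end position, not the start. The replay therefore begins at T2 rather than T3, so the operations between T2 and T3 are applied a second time, which in theory could change the data. Why doesn't mongorestore provide a start-time parameter to avoid the repeated operations?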
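As a rough illustration of the filtering idea in the note above, the following Python sketch builds a filter that could be passed to mongodump's query option when dumping local.oplog.rs, so that only entries after the last operation of the full backup are kept. The helper name and the example timestamp are assumptions made for illustration; the filter uses the $timestamp form of MongoDB Extended JSON, and whether your mongodump version accepts exactly this form should be checked against its documentation.

```python
import json


def oplog_filter_after(seconds: int, ordinal: int) -> str:
    """Return a JSON filter matching oplog entries strictly after (seconds, ordinal)."""
    query = {"ts": {"$gt": {"$timestamp": {"t": seconds, "i": ordinal}}}}
    return json.dumps(query, separators=(",", ":"))


# Hypothetical value: the ts of the last operation captured by the full backup.
print(oplog_filter_after(1597720346, 3))
```

The printed string is only the filter; the dump command, database, and output path around it depend on your environment.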

This test was carried out in a MongoDB 4.2 replica set environment.

Exploring the problem

Parsing the oplog entry format

Since the problem, if there is one, would arise when replaying the oplog, let's look at what the oplog actually stores. To do so, insert one document into the test collection:

db.test.insert({ "empno": 1, "ename": "lijiaman", "age": 22, "address": "yunnan,kungming" });

View the data in the test collection:

db.test.find()

/* 1 */

{

"_id" : ObjectId("5f30eb58bcefe5270574cd54"),

"empno" : 1.0,

"ename" : "lijiaman",

"age" : 22.0,

"address" : "yunnan,kungming"

}

Use the following query statement to view oplog log information:

use local

db.oplog.rs.find({ "ns": "lijiamandb.test" }).sort({ ts: 1 })

The results are as follows:

{

"ts" : Timestamp(1597070283, 1),

"op" : "i",

"ns" : "lijiamandb.test",

"o" : {

"_id" : ObjectId("5f30eb58bcefe5270574cd54"),

"empno" : 1.0,

"ename" : "lijiaman",

"age" : 22.0,

"address" : "yunnan,kungming"

}

}

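Meaning of each field in an oplog entry:

ts: the time of the write. Of the two values in the parentheses, the first is the timestamp in seconds since the Unix epoch, and the second is an ordinal that orders operations within the same second

op: the operation type. Possible values:

-- "i": insert

-- "u": update

-- "d": delete

-- "c": db cmd

-- "db": declares the current database (with ns set to the database name + '.')

-- "n": no-op, an empty operation written periodically to keep the oplog current

ns: the namespace, usually a specific collection

o: the details of what was written

o2: the where condition of an update; only update entries have this field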
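To make the ts field more concrete, here is a small Python sketch that converts the seconds part of a Timestamp to a UTC time and formats a (seconds, ordinal) pair in the seconds:ordinal form that mongorestore's oplogLimit option takes. The function names are hypothetical. The value 1597720346 is taken from an oplog entry in this article whose wall field reads 2020-08-18T03:12:26.231Z.

```python
from datetime import datetime, timezone


def ts_to_utc(seconds: int) -> str:
    """Convert the seconds part of an oplog Timestamp to an ISO 8601 UTC string."""
    return datetime.fromtimestamp(seconds, tz=timezone.utc).isoformat()


def to_oplog_limit(seconds: int, ordinal: int) -> str:
    """Format a Timestamp as <seconds>:<ordinal>."""
    return f"{seconds}:{ordinal}"


print(ts_to_utc(1597720346))          # 2020-08-18T03:12:26+00:00
print(to_oplog_limit(1597720485, 1))  # 1597720485:1
```

The converted time agrees with the wall field of the same entry, which is a quick way to sanity-check a timestamp before using it as a restore limit.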

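The "_id" field in documents

In the insert above, every inserted document comes with an "_id" field of type ObjectId. Things to know about "_id":

"_id" is the primary key of a document in a collection; every document (that is, every row) has a unique "_id" value

"_id" is generated automatically, or can be specified manually, but it must be unique and non-null.

Testing shows that when a DML operation is run against a document, the oplog records it by "_id". Let's look at how the documents and the oplog change for each DML operation.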

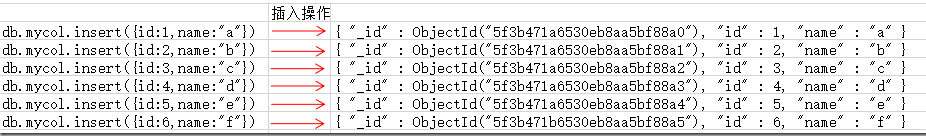

Insert documents, then look at the documents and the oplog

use testdb

// insert documents

db.mycol.insert({id:1,name:"a"})

db.mycol.insert({id:2,name:"b"})

db.mycol.insert({id:3,name:"c"})

db.mycol.insert({id:4,name:"d"})

db.mycol.insert({id:5,name:"e"})

db.mycol.insert({id:6,name:"f"})

rstest:PRIMARY> db.mycol.find()

{ "_id" : ObjectId("5f3b471a6530eb8aa5bf88a0"), "id" : 1, "name" : "a" }

{ "_id" : ObjectId("5f3b471a6530eb8aa5bf88a1"), "id" : 2, "name" : "b" }

{ "_id" : ObjectId("5f3b471a6530eb8aa5bf88a2"), "id" : 3, "name" : "c" }

{ "_id" : ObjectId("5f3b471a6530eb8aa5bf88a3"), "id" : 4, "name" : "d" }

{ "_id" : ObjectId("5f3b471a6530eb8aa5bf88a4"), "id" : 5, "name" : "e" }

{ "_id" : ObjectId("5f3b471b6530eb8aa5bf88a5"), "id" : 6, "name" : "f" }

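These are the documents now in the collection. Note that MongoDB has assigned each one a unique, non-null "_id".

The oplog at this point looks like this (the entries for the first two inserts are shown):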

{

"ts" : Timestamp(1597720346, 2),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "i",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"wall" : ISODate("2020-08-18T03:12:26.231Z"),

"o" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a0"),

"id" : 1.0,

"name" : "a"

}

}

/* 2 */

{

"ts" : Timestamp(1597720346, 3),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "i",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"wall" : ISODate("2020-08-18T03:12:26.246Z"),

"o" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a1"),

"id" : 2.0,

"name" : "b"

}

}

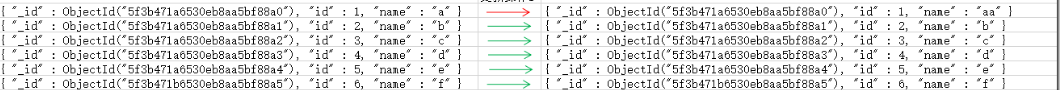

Update operation

rstest:PRIMARY> db.mycol.update({ "id": 1 }, { $set: { "name": "aa" } })

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

One document was updated here. Note that the document's "_id" did not change.

Check the oplog at this time, as follows:

{

"ts" : Timestamp(1597720412, 1),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "u",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"o2" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a0")

},

"wall" : ISODate("2020-08-18T03:13:32.649Z"),

"o" : {

"$v" : 1,

"$set" : {

"name" : "aa"

}

}

}

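This is worth noting:

As mentioned above, the "o2" field of an oplog entry is the where condition of the update. When we ran the update, the where condition we specified was "id = 1", where id is a field we defined ourselves. In the oplog, however, the where condition is "_id" : ObjectId("5f3b471a6530eb8aa5bf88a0"). Clearly both point to the same document. So when the oplog is used for recovery, the update is applied directly by "_id", and even if it is replayed N times the data will not end up wrong.

In short, the update is applied by "_id".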
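The following Python sketch illustrates this point with a much simplified model: a collection is a dict keyed by "_id", and an update entry is applied by looking up o2._id and setting the fields in o.$set. This is only a toy model written for illustration, not how mongorestore is implemented.

```python
def apply_update(collection: dict, entry: dict) -> None:
    """Apply a simplified "u" oplog entry: find the document by o2._id, then $set fields."""
    doc = collection.get(entry["o2"]["_id"])
    if doc is not None:
        doc.update(entry["o"]["$set"])


# The document and the entry mirror the update shown above.
collection = {"5f3b471a6530eb8aa5bf88a0": {"id": 1, "name": "a"}}
entry = {
    "op": "u",
    "o2": {"_id": "5f3b471a6530eb8aa5bf88a0"},
    "o": {"$v": 1, "$set": {"name": "aa"}},
}

# Replaying the same entry several times leaves the same result.
for _ in range(3):
    apply_update(collection, entry)

print(collection)
```

Because the entry addresses exactly one document and carries the final value, the number of replays does not matter in this model.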

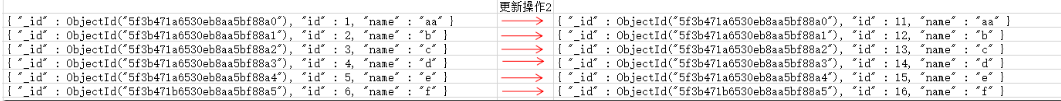

A second update operation

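Above we updated a single document and stated the new value directly in the update. Here is one more test, this time updating the data by incrementing it.

// add 10 to the current id of every document

rstest:PRIMARY> db.mycol.update({}, { $inc: { "id": 10 } }, { multi: true })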

WriteResult({ "nMatched" : 6, "nUpserted" : 0, "nModified" : 6 })

The data changes are shown in the figure. You can see that although id has changed, "_id" has not changed.

Look at the oplog again:

{

"ts" : Timestamp(1597720424, 1),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "u",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"o2" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a0")

},

"wall" : ISODate("2020-08-18T03:13:44.398Z"),

"o" : {

"$v" : 1,

"$set" : {

"id" : 11.0

}

}

}

/* 9 */

{

"ts" : Timestamp(1597720424, 2),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "u",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"o2" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a1")

},

"wall" : ISODate("2020-08-18T03:13:44.399Z"),

"o" : {

"$v" : 1,

"$set" : {

"id" : 12.0

}

}

}

/* 10 */

{

"ts" : Timestamp(1597720424, 3),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "u",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"o2" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a2")

},

"wall" : ISODate("2020-08-18T03:13:44.399Z"),

"o" : {

"$v" : 1,

"$set" : {

"id" : 13.0

}

}

}

/* 11 */

{

"ts" : Timestamp(1597720424, 4),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "u",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"o2" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a3")

},

"wall" : ISODate("2020-08-18T03:13:44.400Z"),

"o" : {

"$v" : 1,

"$set" : {

"id" : 14.0

}

}

}

/* 12 */

{

"ts" : Timestamp(1597720424, 5),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "u",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"o2" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a4")

},

"wall" : ISODate("2020-08-18T03:13:44.400Z"),

"o" : {

"$v" : 1,

"$set" : {

"id" : 15.0

}

}

}

/* 13 */

{

"ts" : Timestamp(1597720424, 6),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "u",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"o2" : {

"_id" : ObjectId("5f3b471b6530eb8aa5bf88a5")

},

"wall" : ISODate("2020-08-18T03:13:44.400Z"),

"o" : {

"$v" : 1,

"$set" : {

"id" : 16.0

}

}

}

This is also well worth noting:

o2 records the "_id" of the document that was changed. o is more interesting: it records the value after the change, not the increment. If we ran the $inc update statement above repeatedly, id would grow by 10 each time. If we replay the oplog N times, however, the value of each record stays the same.
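The difference between running the statement again and replaying its oplog entries can be sketched in Python as follows. The model is deliberately simplified and the short "_id" values are hypothetical stand-ins for the real ObjectIds; the entries carry the resulting value as a $set, as in the oplog entries shown above.

```python
def run_inc(docs, field, amount):
    """Apply a user-level $inc to every document and return simplified oplog entries.

    Each entry records the resulting value as a $set rather than the increment.
    """
    entries = []
    for _id, doc in docs.items():
        doc[field] += amount
        entries.append({"op": "u", "o2": {"_id": _id}, "o": {"$set": {field: doc[field]}}})
    return entries


def replay(docs, entries):
    """Re-apply recorded update entries by _id."""
    for entry in entries:
        docs[entry["o2"]["_id"]].update(entry["o"]["$set"])


# Hypothetical short _id values stand in for the real ObjectIds.
docs = {"a0": {"id": 1}, "a1": {"id": 2}}
entries = run_inc(docs, "id", 10)
after_statement = {k: dict(v) for k, v in docs.items()}

for _ in range(5):
    replay(docs, entries)
after_replays = {k: dict(v) for k, v in docs.items()}

run_inc(docs, "id", 10)  # running the statement itself again does change the data
print(after_statement, after_replays, docs)
```

Replaying the recorded entries five times leaves the values at 11 and 12, while running the increment statement a second time moves them to 21 and 22.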

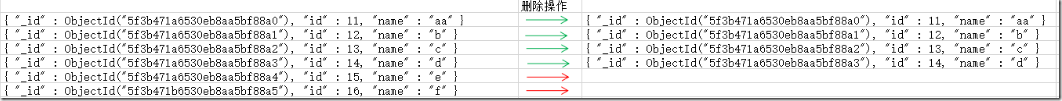

Let's look at the delete operation again

// delete the documents whose id is greater than 14

rstest:PRIMARY> db.mycol.remove({ "id": { "$gt": 14 } })

WriteResult({ "nRemoved" : 2 })

The data changes are as follows:

Let's take a look at the oplog log:

/* 14 */

{

"ts" : Timestamp(1597720485, 1),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "d",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"wall" : ISODate("2020-08-18T03:14:45.511Z"),

"o" : {

"_id" : ObjectId("5f3b471a6530eb8aa5bf88a4")

}

}

/* 15 */

{

"ts" : Timestamp(1597720485, 2),

"t" : NumberLong(11),

"h" : NumberLong(0),

"v" : 2,

"op" : "d",

"ns" : "testdb.mycol",

"ui" : UUID("56c4e1ad-4a15-44ca-96c8-3b3b5be29616"),

"wall" : ISODate("2020-08-18T03:14:45.511Z"),

"o" : {

"_id" : ObjectId("5f3b471b6530eb8aa5bf88a5")

}

}

"op" : "d" records that the operation is a delete. Which data is deleted is recorded in the o field, and as you can see, o holds the "_id". In other words, a delete in the oplog is carried out by "_id".

Conclusion

As we have seen, for DML operations the oplog records document changes by "_id". So, back to the question raised at the beginning: if no start time is specified, will replaying the oplog apply the data changes twice?

- Insert: if a document with the same "_id" already exists in the current database, the insert is not executed a second time, and a primary key conflict error is reported;

- Update: the oplog records the "_id" of the updated document and the data after the change. Executing it again therefore only sets the same values again. Even if it is executed N times, the effect is the same as executing it once, so repeated operations on a single document are nothing to worry about;

- Delete: the oplog records the "_id" of the deleted document. Likewise, executing it N times has the same effect as executing it once, because "_id" is unique.

Therefore, even if the oplog is applied starting from before the full backup, the data is not changed multiple times.
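The whole argument can be checked with a small Python simulation. It is a sketch under simplifying assumptions: collections are dicts keyed by "_id", only "i", "u" and "d" entries are modelled, an insert for an existing "_id" is skipped (standing in for the key conflict described above), and the limit excludes entries at or after the given timestamp. The short "_id" values, the timestamps and the function names are made up for illustration.

```python
import copy


def apply_entry(coll, entry):
    """Apply one simplified oplog entry to a collection keyed by _id."""
    op, o = entry["op"], entry["o"]
    if op == "i":
        # An existing _id is left untouched, modelling the rejected duplicate insert.
        coll.setdefault(o["_id"], dict(o))
    elif op == "u":
        doc = coll.get(entry["o2"]["_id"])
        if doc is not None:
            doc.update(o["$set"])
    elif op == "d":
        coll.pop(o["_id"], None)


def replay(coll, oplog, limit, start=None):
    """Apply entries with ts < limit, optionally only those with ts > start."""
    for entry in oplog:
        if entry["ts"] >= limit:
            continue
        if start is not None and entry["ts"] <= start:
            continue
        apply_entry(coll, entry)
    return coll


oplog = [
    {"ts": (100, 1), "op": "i", "o": {"_id": "a0", "id": 1, "name": "a"}},
    {"ts": (100, 2), "op": "i", "o": {"_id": "a1", "id": 2, "name": "b"}},
    {"ts": (200, 1), "op": "u", "o2": {"_id": "a0"}, "o": {"$set": {"name": "aa"}}},
    # T3 = (200, 1): the full backup is taken here.
    {"ts": (300, 1), "op": "u", "o2": {"_id": "a0"}, "o": {"$set": {"id": 11}}},
    {"ts": (300, 2), "op": "u", "o2": {"_id": "a1"}, "o": {"$set": {"id": 12}}},
    {"ts": (400, 1), "op": "d", "o": {"_id": "a1"}},
    # T4 = (500, 1): the erroneous operation we want to stop before.
    {"ts": (500, 1), "op": "d", "o": {"_id": "a0"}},
]
T3, T4 = (200, 1), (500, 1)

backup = replay({}, oplog, limit=(200, 2))  # state of the data at T3

from_t2 = replay(copy.deepcopy(backup), oplog, limit=T4)            # replay from the oldest entry
from_t3 = replay(copy.deepcopy(backup), oplog, limit=T4, start=T3)  # replay only entries after T3

print(from_t2 == from_t3, from_t2)
```

In this model, replaying from the oldest oplog entry and replaying only from T3 end in the same state, which matches the conclusion above.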

