1. Startup method

To start a Hadoop cluster, you need to start two clusters, HDFS and YARN.

Note: The first time you start HDFS, you must format it. Formatting is essentially initialization and preparation work, because at this point HDFS does not yet physically exist.

Run the format command on node1:

hadoop namenode -format
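Because formatting is only needed the first time, and reformatting an existing NameNode discards its metadata, it can help to guard the command. The following is a minimal sketch, not part of the original setup: it assumes that a formatted NameNode directory contains a current/VERSION file, and the NAME_DIR path is a hypothetical placeholder for whatever dfs.namenode.name.dir is set to in your hdfs-site.xml. The function names needs_format and format_once are made up for illustration.

```shell
# Sketch: format the NameNode only if its metadata directory is still unformatted.
# NAME_DIR is a hypothetical path; use your own dfs.namenode.name.dir value.
NAME_DIR="${NAME_DIR:-/export/data/hadoop/namenode}"

# Succeeds (exit status 0) when the directory has no current/VERSION file,
# i.e. the NameNode has not been formatted yet.
needs_format() {
  [ ! -f "$1/current/VERSION" ]
}

# Runs the format command on first use and does nothing on later runs.
format_once() {
  if needs_format "$NAME_DIR"; then
    hadoop namenode -format
  else
    echo "NameNode at $NAME_DIR is already formatted; skipping."
  fi
}
```

On node1 you would call format_once before the very first start; on later runs it only prints the skip message.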

2. Start the daemons one node at a time

Start the HDFS NameNode with the following command on the node1 host:

hadoop-daemon.sh start namenode

Start the HDFS SecondaryNameNode with the following command on the node2 host:

hadoop-daemon.sh start secondarynamenode

On each of the three hosts node1, node2, and node3, start the HDFS DataNode with the following command:

hadoop-daemon.sh start datanode

Start the YARN ResourceManager with the following command on the node1 host:

yarn-daemon.sh start resourcemanager

On each of the three hosts node1, node2, and node3, start the YARN NodeManager with the following command:

yarn-daemon.sh start nodemanager

The scripts above are located in the /export/server/hadoop-2.7.5/sbin directory. To stop a role on a node, change start to stop in the corresponding command.
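The per-node commands above can also be driven from a single machine. The sketch below is one possible way to do that, not the original author's script: it assumes passwordless SSH to node1, node2, and node3, and the helper names run_on and cluster_daemons are invented for illustration. Setting DRY_RUN=1 prints the commands instead of running them, which is a safe way to check the role-to-host layout first.

```shell
# Sketch: run the per-node daemon commands over SSH from one machine.
# Assumes passwordless SSH to every host. SBIN is the sbin directory named above.
SBIN="${SBIN:-/export/server/hadoop-2.7.5/sbin}"

# Runs a command on a host, or only prints it when DRY_RUN=1.
run_on() {
  host="$1"; shift
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$host: $*"
  else
    ssh "$host" "$*"
  fi
}

# Applies an action ("start" or "stop") to every role, using the same
# role-to-host layout as the steps above.
cluster_daemons() {
  action="$1"
  run_on node1 "$SBIN/hadoop-daemon.sh" "$action" namenode
  run_on node2 "$SBIN/hadoop-daemon.sh" "$action" secondarynamenode
  for h in node1 node2 node3; do
    run_on "$h" "$SBIN/hadoop-daemon.sh" "$action" datanode
  done
  run_on node1 "$SBIN/yarn-daemon.sh" "$action" resourcemanager
  for h in node1 node2 node3; do
    run_on "$h" "$SBIN/yarn-daemon.sh" "$action" nodemanager
  done
}
```

For example, DRY_RUN=1 cluster_daemons start lists the nine commands without touching the cluster, and cluster_daemons stop mirrors the "change start to stop" rule.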

3. One-step startup with the per-module scripts (recommended)

Start HDFS

start-dfs.sh

Start YARN

start-yarn.sh

Start the JobHistory Server process

mr-jobhistory-daemon.sh start historyserver

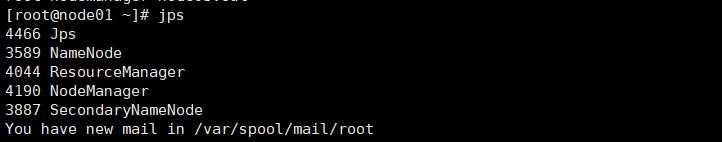

After startup, run the jps command on each node to check whether the expected services are running. jps lists the running Java processes.
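Checking jps output by eye is easy to get wrong, so here is a small sketch that reports which expected daemons are missing. It assumes jps prints one line per JVM in the form "pid ClassName"; the function name missing_daemons is hypothetical. The class name is compared exactly, so that NameNode is not mistaken for SecondaryNameNode.

```shell
# Sketch: print the expected daemon names that do not appear in jps output.
# First argument: the jps output; remaining arguments: expected process names.
missing_daemons() {
  jps_output="$1"; shift
  for name in "$@"; do
    # Compare the second field exactly, so "NameNode" does not match
    # "SecondaryNameNode".
    if ! printf '%s\n' "$jps_output" |
        awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
      echo "$name"
    fi
  done
}

# Example for node1, using the roles assigned to it in the steps above:
# missing_daemons "$(jps)" NameNode DataNode ResourceManager NodeManager
```

An empty result means every listed daemon was found on that node.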

node1:

4. Problems encountered

1. Problem description

Starting Hadoop with start-all.sh fails with the following error:

master: ssh: connect to host master port 22: Connection refused

2. Cause

The hosts node01, node02, and node03 cannot be reached because their hostname mappings are not set in the hosts file.

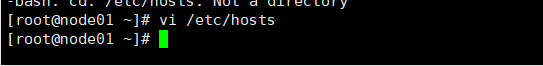

3. Solution

Edit the hosts file and change each entry to the static IP address of the corresponding virtual machine:

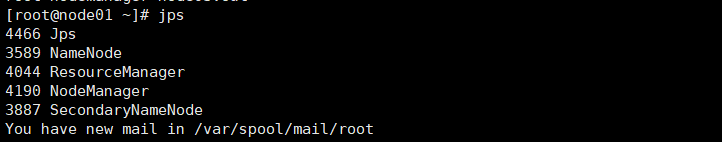
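To confirm that the hosts file now covers every node before restarting, a quick check like the following may help. It is only a sketch: the function name unmapped_hosts is hypothetical, and it assumes the usual hosts file layout of an IP address followed by one or more names per line. Pass the hostnames exactly as they appear in your cluster configuration.

```shell
# Sketch: print the hostnames that have no entry in a hosts file.
# First argument: path to the hosts file; remaining arguments: hostnames.
unmapped_hosts() {
  hosts_file="$1"; shift
  for name in "$@"; do
    # Skip comment lines, then look for the name among the fields
    # that follow the IP address.
    if ! awk -v n="$name" '
        /^[[:space:]]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$hosts_file"; then
      echo "$name"
    fi
  done
}

# Example: unmapped_hosts /etc/hosts node1 node2 node3
```

If the command prints nothing, every listed hostname has a mapping; any name it prints still needs a line in the hosts file.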

After the modification, restart the cluster and check the processes with jps: the startup is successful!