Spark Single-Node Installation and Deployment

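1. Install Scala

1. Download: wget https://downloads.lightbend.com/scala/2.11.12/scala-2.11.12.tgz

2. Extract: tar -zxvf scala-2.11.12.tgz -C /usr/local

3. Rename (run from /usr/local): mv scala-2.11.12/ scala

4. Add it to the environment variables (for example in /etc/profile):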

export SCALA_HOME=/usr/local/scala

export PATH=$PATH:$SCALA_HOME/bin

Note: Spark ships with its own Scala, but installing Scala separately is still recommended.
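When the export lines above are added by a script rather than by hand, it helps to make the step safe to re-run. The following is a minimal sketch, not part of the original procedure: `append_once` and `PROFILE_FILE` are hypothetical names, and `PROFILE_FILE` defaults to a temporary file so the sketch can be tried without touching /etc/profile.

```shell
#!/usr/bin/env bash
# Sketch: add export lines to a profile file without duplicating them.
# PROFILE_FILE is an assumption for illustration; it defaults to a temp file.
# Point it at /etc/profile on a real host.
PROFILE_FILE="${PROFILE_FILE:-$(mktemp)}"

# append_once LINE FILE: append LINE to FILE unless an identical line exists.
append_once() {
  local line="$1" file="$2"
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

append_once 'export SCALA_HOME=/usr/local/scala' "$PROFILE_FILE"
append_once 'export PATH=$PATH:$SCALA_HOME/bin' "$PROFILE_FILE"
# A second call with the same line is a no-op, so the script can be re-run.
append_once 'export SCALA_HOME=/usr/local/scala' "$PROFILE_FILE"

cat "$PROFILE_FILE"
```

The same function can be reused for the SPARK_HOME lines. Afterwards, `source` the profile file so the current shell picks up the changes.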

2. Install standalone Spark

1. Download: wget http://mirrors.tuna.tsinghua.edu.cn/apache/spark/spark-2.3.2/spark-2.3.2-bin-hadoop2.7.tgz

2. Extract: tar -zxvf spark-2.3.2-bin-hadoop2.7.tgz -C /usr/local

3. Rename (run from /usr/local): mv spark-2.3.2-bin-hadoop2.7/ spark

4. Add it to the environment variables:

export SPARK_HOME=/usr/local/spark

export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

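5. Test:

Prerequisites:

- The Hadoop cluster is running and the NameNode is not in safe mode (if it is, it can be taken out of safe mode with hdfs dfsadmin -safemode leave).

- The test file hello has been uploaded to the / path on HDFS: hadoop fs -put hello /

The hello file contains the following: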

hello world

hello tom

world from

om jery

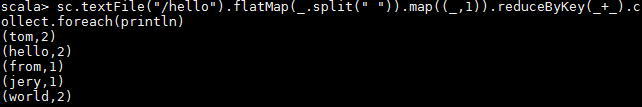

Run a simple Spark program:

spark-shell

sc.textFile("/hello").flatMap(_.split(" ")).map((_,1)).reduceByKey(_+_).collect.foreach(println)

The program prints the result as (word,count) pairs, one per line.
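As a cross-check on the Spark result, the same word count can be computed locally with standard Unix tools. This is only a sketch under a few assumptions: `wordcount_local` and `HELLO_FILE` are illustrative names, the file is recreated in a temporary location, and `tr -s` squeezes repeated spaces, which `split(" ")` would not do (the two agree for this file). Spark's `collect` does not guarantee any ordering, so compare the two outputs as sets.

```shell
#!/usr/bin/env bash
# wordcount_local FILE: print "(word,count)" pairs, one per line, sorted by word.
wordcount_local() {
  tr -s ' ' '\n' < "$1" | LC_ALL=C sort | uniq -c | awk '{ print "(" $2 "," $1 ")" }'
}

# Recreate the hello test file in a temporary location.
HELLO_FILE="$(mktemp)"
printf '%s\n' 'hello world' 'hello tom' 'world from' 'om jery' > "$HELLO_FILE"

wordcount_local "$HELLO_FILE"
```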

Fully Distributed Installation

1. Edit spark-env.sh

1. `cd /usr/local/spark/conf`

2. `cp spark-env.sh.template spark-env.sh`

3. `vi spark-env.sh` and add the following lines:

export JAVA_HOME=/usr/java/jdk1.8.0_141

export SCALA_HOME=/usr/local/scala

export SPARK_MASTER_IP=uplooking01

export SPARK_MASTER_PORT=7077

export SPARK_WORKER_CORES=1

export SPARK_WORKER_INSTANCES=1

export SPARK_WORKER_MEMORY=1g

export HADOOP_CONF_DIR=/usr/local/hadoop-2.7.5/etc/hadoop
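A forgotten or commented-out variable in spark-env.sh is easy to miss. The sketch below lists the variables a file does not export; `missing_exports` and `DEMO_ENV` are hypothetical names, and the demo runs against a throwaway file rather than the real /usr/local/spark/conf/spark-env.sh. It assumes each setting is written as an `export NAME=value` line, as in the listing above.

```shell
#!/usr/bin/env bash
# missing_exports FILE VAR...: print each VAR that FILE does not export
# on an uncommented line. Prints nothing when every VAR is present.
missing_exports() {
  local file="$1" var
  shift
  for var in "$@"; do
    grep -Eq "^[[:space:]]*export[[:space:]]+${var}=" "$file" || printf '%s\n' "$var"
  done
}

# Demo file: one variable is commented out and one is absent.
DEMO_ENV="$(mktemp)"
cat > "$DEMO_ENV" <<'EOF'
export JAVA_HOME=/usr/java/jdk1.8.0_141
export SPARK_MASTER_PORT=7077
#export SPARK_WORKER_MEMORY=1g
EOF

missing_exports "$DEMO_ENV" JAVA_HOME SPARK_MASTER_PORT SPARK_WORKER_MEMORY HADOOP_CONF_DIR
```

On a real node the first argument would be the path to spark-env.sh, followed by whichever variable names the cluster depends on.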

2. Edit the slaves configuration file

Add `two` entries:

uplooking02

uplooking03

Deploy to uplooking02 and uplooking03 (both machines need Scala installed beforehand):

scp -r /usr/local/scala uplooking01@uplooking02:/usr/local/

scp -r /usr/local/scala uplooking01@uplooking03:/usr/local/

scp -r /usr/local/spark uplooking01@uplooking02:/usr/local/

scp -r /usr/local/spark uplooking01@uplooking03:/usr/local/

On uplooking02 and uplooking03, configure the same environment variables and source the profile so that they take effect:

source /etc/profile
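With more than a couple of workers, the copy step can be driven from the slaves file instead of being typed per host. The sketch below only prints the scp commands so the plan can be reviewed first; `print_deploy_commands` and `SLAVES_FILE` are hypothetical names, the demo uses a temporary copy of the slaves list, and the remote user `uplooking01` is taken from the commands in this section (substitute whichever account applies).

```shell
#!/usr/bin/env bash
# print_deploy_commands SLAVES_FILE REMOTE_USER DIR...
# Prints one scp command per (host, directory) pair instead of running it.
# Blank lines and lines starting with # in the slaves file are skipped.
print_deploy_commands() {
  local slaves_file="$1" user="$2" host dir
  shift 2
  while IFS= read -r host || [ -n "$host" ]; do
    case "$host" in ''|'#'*) continue ;; esac
    for dir in "$@"; do
      printf 'scp -r %s %s@%s:%s/\n' "$dir" "$user" "$host" "$(dirname "$dir")"
    done
  done < "$slaves_file"
}

# Demo slaves file with the two workers used in this section.
SLAVES_FILE="$(mktemp)"
printf '%s\n' uplooking02 uplooking03 > "$SLAVES_FILE"

print_deploy_commands "$SLAVES_FILE" uplooking01 /usr/local/scala /usr/local/spark
```

Once the printed commands look right, they can be executed by piping the output to `sh`.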

3. Start

Changes to make first

To avoid a name clash with Hadoop's start-all.sh and stop-all.sh scripts, rename the scripts of the same name in spark/sbin:

mv start-all.sh start-spark-all.sh

mv stop-all.sh stop-spark-all.sh

Start the cluster

sbin/start-spark-all.sh

This starts a Master process on the configured master node uplooking01,

a Worker process on the configured worker node uplooking02,

and a Worker process on the configured worker node uplooking03.
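One way to confirm that the expected processes came up is to look for them in the output of the JDK's `jps` tool, which prints one `<pid> <main class>` pair per line. The helper below is a sketch: `has_jvm_process` is a hypothetical name, and the demo feeds it canned output so that it runs without a cluster.

```shell
#!/usr/bin/env bash
# has_jvm_process NAME: read `jps` output ("<pid> <main class>") on stdin
# and succeed only if a process with exactly that name is listed.
has_jvm_process() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Demo with canned output; the PIDs are made up for illustration.
sample_jps_output='2101 Master
2345 Jps'

if printf '%s\n' "$sample_jps_output" | has_jvm_process Master; then
  echo "Master is running"
fi
```

On the cluster this would be used as `jps | has_jvm_process Master` on uplooking01 and `jps | has_jvm_process Worker` on each worker node.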

4. Simple verification

Start spark-shell:

bin/spark-shell

scala> sc.textFile("hdfs://master2/hello").flatMap(_.split(" ")).map((_, 1)).reduceByKey(_+_).collect.foreach(println)

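Spark runs this program very quickly and produces the expected result.

Ports: 8080/4040/7077

8080 --> the web UI port of the Spark cluster (Master), similar to Hadoop's 50070 and 8088 combined

4040 --> the web UI port of a running Spark application (the Spark UI)

7077 --> the port on which the Master accepts connections, comparable to port 9000 in Hadoop

Test case: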

sc.textFile("hdfs://uplooking01:9000/a.log").flatMap(_.split(" ")).map((_, 1)).reduceByKey(_+_).collect.foreach(println)
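When one of the ports listed above does not respond, a quick reachability check narrows down whether the process is listening at all. The sketch below relies on bash's `/dev/tcp` redirection, so it assumes bash rather than plain sh; `port_open` and `report_ports` are hypothetical helper names, and no host is contacted until one of them is called.

```shell
#!/usr/bin/env bash
# port_open HOST PORT: succeed if a TCP connection to HOST:PORT can be opened.
# The connection is opened in a subshell and closed again when it exits.
port_open() {
  local host="$1" port="$2"
  (exec 3<>"/dev/tcp/${host}/${port}") 2>/dev/null
}

# report_ports HOST PORT...: print "HOST:PORT open" or "HOST:PORT closed" per port.
report_ports() {
  local host="$1" port
  shift
  for port in "$@"; do
    if port_open "$host" "$port"; then
      echo "${host}:${port} open"
    else
      echo "${host}:${port} closed"
    fi
  done
}
```

For the cluster in this section, `report_ports uplooking01 8080 7077` checks the Master, and `report_ports <driver-host> 4040` checks a running application's UI. An unreachable host may make the check wait for the system's connection timeout.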

ZooKeeper-Based HA Configuration

This is best done while the cluster is stopped.

First

Comment out these two lines in spark-env.sh:

#export SPARK_MASTER_IP=uplooking01

#export SPARK_MASTER_PORT=7077

Second

Add one line to spark-env.sh:

export SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=uplooking01:2181,uplooking02:2181,uplooking03:2181 -Dspark.deploy.zookeeper.dir=/spark"

Explanation

spark.deploy.recoveryMode: set to ZOOKEEPER

spark.deploy.zookeeper.url: the ZooKeeper URL

spark.deploy.zookeeper.dir: the ZooKeeper directory in which recovery state is stored; defaults to /spark
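Because the option string is long and must stay on a single line, it can be less error-prone to assemble it from its parts. The following sketch builds the ZooKeeper URL from a host list and prints the resulting export line; `zk_url` and `ZK_URL` are hypothetical names, while the hosts, port 2181, and the /spark directory are the values used in this section.

```shell
#!/usr/bin/env bash
# zk_url PORT HOST...: join HOST:PORT pairs with commas.
zk_url() {
  local port="$1" host url=""
  shift
  for host in "$@"; do
    url="${url:+${url},}${host}:${port}"
  done
  printf '%s\n' "$url"
}

ZK_URL="$(zk_url 2181 uplooking01 uplooking02 uplooking03)"
SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=${ZK_URL} -Dspark.deploy.zookeeper.dir=/spark"

# Emit the line as it would appear in spark-env.sh.
printf 'export SPARK_DAEMON_JAVA_OPTS="%s"\n' "$SPARK_DAEMON_JAVA_OPTS"
```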

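Restart the cluster

Run start-spark-all.sh on any one Spark node.

Then manually start a second Master process on another node, here uplooking02: sbin/start-master.sh

Open uplooking01:8080 and uplooking02:8080 in a browser: one Master shows Status: ALIVE and the other shows Status: STANDBY.

To verify HA, manually stop the Master process on the node whose Master is ALIVE; after a short wait, the Master on the standby node changes from STANDBY to ALIVE.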

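Note: if start-spark-all.sh is run on uplooking02, then uplooking02 also becomes a master, while uplooking01 runs neither a Master nor a Worker, because the configuration files no longer name a master and only list the slaves. start-spark-all.sh includes the start-master.sh step, which is why a Master is started on whichever machine the script is run from.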
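With more than one Master, clients need to know about all of them. Spark's standalone mode accepts a comma-separated list of masters in the form spark://host1:port1,host2:port2, and the application then registers with whichever one is currently the leader. The sketch below builds such a URL; `ha_master_url` and `MASTER_URL` are hypothetical names, and port 7077 assumes the Masters use the default port now that SPARK_MASTER_PORT is commented out.

```shell
#!/usr/bin/env bash
# ha_master_url PORT HOST...: build a spark://host1:port,host2:port URL.
ha_master_url() {
  local port="$1" host list=""
  shift
  for host in "$@"; do
    list="${list:+${list},}${host}:${port}"
  done
  printf 'spark://%s\n' "$list"
}

MASTER_URL="$(ha_master_url 7077 uplooking01 uplooking02)"

# Print the command rather than running it, since it needs a live cluster.
echo "spark-shell --master ${MASTER_URL}"
```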

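Compiling Spark from Source

After installing Maven and setting up a local Maven repository for Spark's dependencies (otherwise downloading them from the network during the build is very slow),

run the following command in the Spark source directory: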

mvn -Pyarn -Dhadoop.version=2.6.4 -Dyarn.version=2.6.4 -DskipTests clean package

A successful build prints output like the following:

......

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary:

[INFO]

[INFO] Spark Project Parent POM ........................... SUCCESS [ 3.617 s]

[INFO] Spark Project Test Tags ............................ SUCCESS [ 17.419 s]

[INFO] Spark Project Launcher ............................. SUCCESS [ 12.102 s]

[INFO] Spark Project Networking ........................... SUCCESS [ 11.878 s]

[INFO] Spark Project Shuffle Streaming Service ............ SUCCESS [ 7.324 s]

[INFO] Spark Project Unsafe ............................... SUCCESS [ 16.326 s]

[INFO] Spark Project Core ................................. SUCCESS [04:31 min]

[INFO] Spark Project Bagel ................................ SUCCESS [ 11.671 s]

[INFO] Spark Project GraphX ............................... SUCCESS [ 55.420 s]

[INFO] Spark Project Streaming ............................ SUCCESS [02:03 min]

[INFO] Spark Project Catalyst ............................. SUCCESS [02:40 min]

[INFO] Spark Project SQL .................................. SUCCESS [03:38 min]

[INFO] Spark Project ML Library ........................... SUCCESS [03:56 min]

[INFO] Spark Project Tools ................................ SUCCESS [ 15.726 s]

[INFO] Spark Project Hive ................................. SUCCESS [02:30 min]

[INFO] Spark Project Docker Integration Tests ............. SUCCESS [ 11.961 s]

[INFO] Spark Project REPL ................................. SUCCESS [ 42.913 s]

[INFO] Spark Project YARN Shuffle Service ................. SUCCESS [ 8.391 s]

[INFO] Spark Project YARN ................................. SUCCESS [ 42.013 s]

[INFO] Spark Project Assembly ............................. SUCCESS [02:06 min]

[INFO] Spark Project External Twitter ..................... SUCCESS [ 19.155 s]

[INFO] Spark Project External Flume Sink .................. SUCCESS [ 22.164 s]

[INFO] Spark Project External Flume ....................... SUCCESS [ 26.228 s]

[INFO] Spark Project External Flume Assembly .............. SUCCESS [ 3.838 s]

[INFO] Spark Project External MQTT ........................ SUCCESS [ 33.132 s]

[INFO] Spark Project External MQTT Assembly ............... SUCCESS [ 7.937 s]

[INFO] Spark Project External ZeroMQ ...................... SUCCESS [ 17.900 s]

[INFO] Spark Project External Kafka ....................... SUCCESS [ 37.597 s]

[INFO] Spark Project Examples ............................. SUCCESS [02:39 min]

[INFO] Spark Project External Kafka Assembly .............. SUCCESS [ 10.556 s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 31:22 min

[INFO] Finished at: 2018-04-24T18:33:58+08:00

[INFO] Final Memory: 89M/1440M

[INFO] ------------------------------------------------------------------------
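A build of this length is usually left running unattended, so it is convenient to check the saved output afterwards instead of scrolling through it. The sketch below assumes the Maven output was captured to a file or pipe; `build_succeeded` and `non_success_modules` are hypothetical helper names that match on the Reactor Summary format shown above.

```shell
#!/usr/bin/env bash
# build_succeeded: read Maven output on stdin; succeed if it reports BUILD SUCCESS.
build_succeeded() {
  grep -q '^\[INFO\] BUILD SUCCESS'
}

# non_success_modules: read Maven output on stdin and print the Reactor Summary
# lines whose status is FAILURE or SKIPPED. Prints nothing for a clean build.
non_success_modules() {
  grep -E '^\[INFO\] .+ \.{2,} (FAILURE|SKIPPED)'
}

# Demo with two lines copied from the output above.
sample_log='[INFO] Spark Project Core ................................. SUCCESS [04:31 min]
[INFO] BUILD SUCCESS'

if printf '%s\n' "$sample_log" | build_succeeded; then
  echo "build ok"
fi
```

For a real build, the mvn output can be saved with `tee` to a log file of your choosing and then passed to either function on stdin.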

The built jar can then be found in the following directory:

[uplooking@uplooking01 scala-2.10]$ pwd

/home/uplooking/compile/spark-1.6.2/assembly/target/scala-2.10

[uplooking@uplooking01 scala-2.10]$ ls -lh

总用量 135M

-rw-rw-r-- 1 uplooking uplooking 135M 4月 24 18:28 spark-assembly-1.6.2-hadoop2.6.4.jar

The corresponding assembly jar can also be seen in the lib directory of an installed Spark 1.6.2 binary distribution:

[uplooking@uplooking01 lib]$ ls -lh

总用量 291M

-rw-r--r-- 1 uplooking uplooking 332K 6月 22 2016 datanucleus-api-jdo-3.2.6.jar

-rw-r--r-- 1 uplooking uplooking 1.9M 6月 22 2016 datanucleus-core-3.2.10.jar

-rw-r--r-- 1 uplooking uplooking 1.8M 6月 22 2016 datanucleus-rdbms-3.2.9.jar

-rw-r--r-- 1 uplooking uplooking 6.6M 6月 22 2016 spark-1.6.2-yarn-shuffle.jar

-rw-r--r-- 1 uplooking uplooking 173M 6月 22 2016 spark-assembly-1.6.2-hadoop2.6.0.jar

-rw-r--r-- 1 uplooking uplooking 108M 6月 22 2016 spark-examples-1.6.2-hadoop2.6.0.jar