1. Deploying an Apache Kudu cluster on K8s

Installation plan

| Component | Replicas |
|---|---|
| kudu-master | 3 |
| kudu-tserver | 3 |

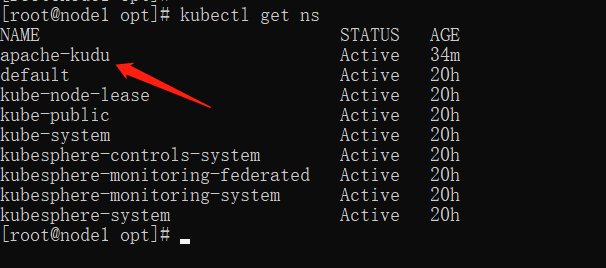

1. Create a namespace

vi kudu-ns.yaml

apiVersion: v1
kind: Namespace
metadata:
  name: apache-kudu
  labels:
    name: apache-kudu

kubectl apply -f kudu-ns.yaml

Verify the namespace:

kubectl get ns

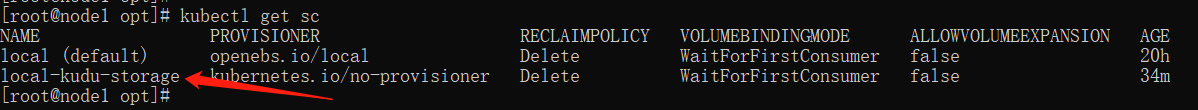

2. Create a storage class

vi local-kudu-storage.yaml

kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: local-kudu-storage
provisioner: kubernetes.io/no-provisioner
volumeBindingMode: WaitForFirstConsumer # Wait for a consumer: the PV is bound only after the Pod has been scheduled to a node

kubectl apply -f local-kudu-storage.yaml

View the storage class:

kubectl get sc

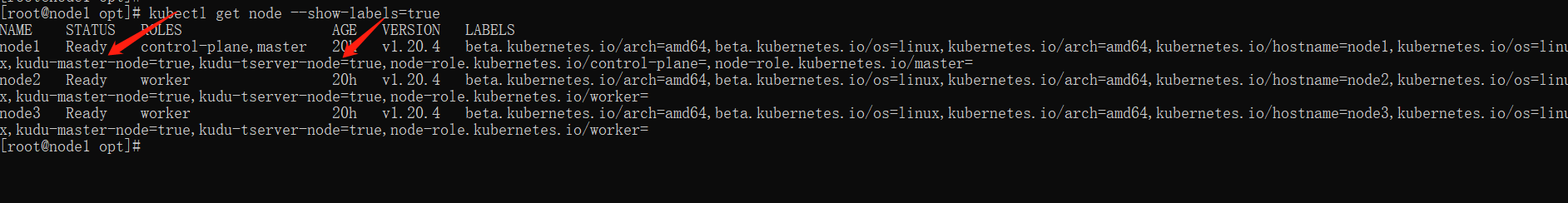

3. Label the nodes

kubectl label nodes node1 kudu-master-node=true

kubectl label nodes node2 kudu-master-node=true

kubectl label nodes node3 kudu-master-node=true

kubectl label nodes node1 kudu-tserver-node=true

kubectl label nodes node2 kudu-tserver-node=true

kubectl label nodes node3 kudu-tserver-node=true

View the node labels:

kubectl get node --show-labels=true
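The six label commands in this step can be collapsed into a loop. The following is a minimal sketch, assuming the node names used in this walkthrough (node1, node2, node3). The function name `label_kudu_nodes` and the `KUBECTL` override variable are hypothetical helpers introduced here, not part of kubectl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: apply both Kudu labels to every node given as an argument.
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the commands.
label_kudu_nodes() {
  local kubectl_cmd="${KUBECTL:-kubectl}"
  local node label
  for node in "$@"; do
    for label in kudu-master-node kudu-tserver-node; do
      # --overwrite lets the command be re-run when the label already exists
      "$kubectl_cmd" label nodes "$node" "${label}=true" --overwrite
    done
  done
}

# Usage: label_kudu_nodes node1 node2 node3
```

Running it with `KUBECTL=echo` prints the commands instead of executing them, which can be handy for checking the node list before touching the cluster.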

4. Create the PV and PVC for kudu-master and kudu-tserver

Create the storage directories in advance on every node where the volumes may be allocated, and grant permissions:

mkdir -p {/opt/kudu/master-data,/opt/kudu/tserver-data} && chmod 777 {/opt/kudu/master-data,/opt/kudu/tserver-data}
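Since the same directories have to exist on each labeled node, it may help to wrap the step in a small function that can be copied to every host. This is only a sketch: `prepare_kudu_dirs` is a hypothetical name, and the optional base-directory argument is an assumption added so the function can be tried out in a scratch location first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create the master and tserver data directories.
# Arg 1 (optional): base directory, defaulting to /opt/kudu as used by the PVs.
prepare_kudu_dirs() {
  local base="${1:-/opt/kudu}"
  local d
  for d in master-data tserver-data; do
    # Same permissions as the original command (world-writable)
    mkdir -p "${base}/${d}" && chmod 777 "${base}/${d}" || return 1
  done
}

# Usage on each node: prepare_kudu_dirs
```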

vi local-kudu-pv-pvc.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-kudu-master-pv
spec:
  accessModes:
    - ReadWriteOnce
  capacity:
    storage: 5Gi
  local:
    path: /opt/kudu/master-data # This directory must already exist on the selected node
  nodeAffinity: # Pin the volume to nodes that carry the label below
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kudu-master-node
              operator: In
              values:
                - "true"
  persistentVolumeReclaimPolicy: Retain # Retain: data is not deleted, so it is unchanged when a pod is rescheduled
  storageClassName: local-kudu-storage
---
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: local-kudu-master-pvc
  namespace: apache-kudu
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: local-kudu-storage
  resources:
    requests:
      storage: 5Gi
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-kudu-tserver-pv
spec:
  accessModes:
    - ReadWriteOnce
  capacity:
    storage: 5Gi
  local:
    path: /opt/kudu/tserver-data # This directory must already exist on the selected node
  nodeAffinity: # Pin the volume to nodes that carry the label below
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kudu-tserver-node
              operator: In
              values:
                - "true"
  persistentVolumeReclaimPolicy: Retain # Retain: data is not deleted, so it is unchanged when a pod is rescheduled
  storageClassName: local-kudu-storage
---
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: local-kudu-tserver-pvc
  namespace: apache-kudu
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: local-kudu-storage
  resources:
    requests:
      storage: 5Gi

kubectl apply -f local-kudu-pv-pvc.yaml

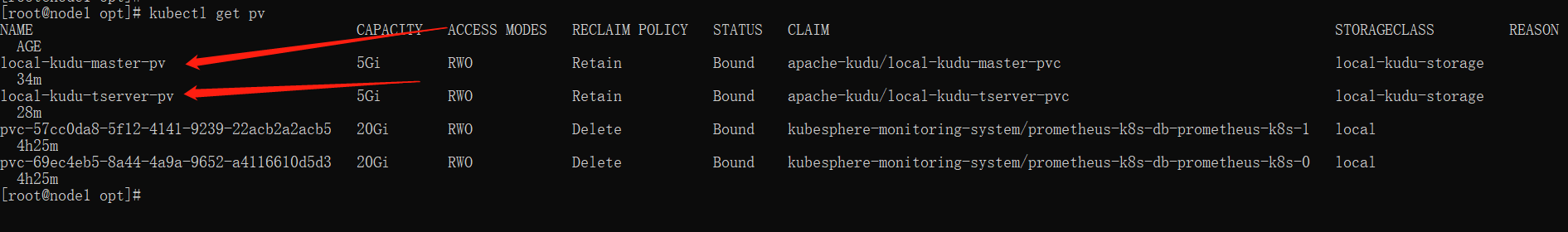

View the PVs:

kubectl get pv

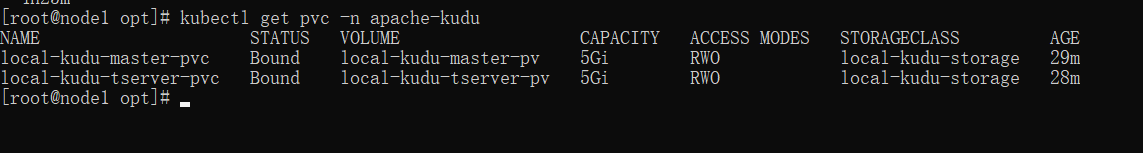

View the PVCs:

kubectl get pvc -n apache-kudu
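Because the StorageClass uses `volumeBindingMode: WaitForFirstConsumer`, the claims are expected to stay `Pending` at this point and should only become `Bound` once a pod that uses them has been scheduled. A small sketch for listing claims that are not bound yet follows; `unbound_pvcs` is a hypothetical helper that reads the output of `kubectl get pvc --no-headers` on stdin and assumes the default column order (NAME first, STATUS second).

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the names of PVCs whose STATUS is not "Bound".
# Expects `kubectl get pvc --no-headers` output on stdin.
unbound_pvcs() {
  # Column 1 is NAME and column 2 is STATUS; skip blank or short lines
  awk 'NF >= 2 && $2 != "Bound" { print $1 }'
}

# Usage: kubectl get pvc -n apache-kudu --no-headers | unbound_pvcs
```

An empty result after the StatefulSets are running would suggest that both claims have been bound.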

5. Create the Services

vi kudu-svc.yaml

# headless service for kudu masters
apiVersion: v1
kind: Service
metadata:
  name: kudu-masters
  namespace: apache-kudu
  labels:
    app: kudu-master
spec:
  clusterIP: None
  ports:
    - name: ui
      port: 8051
    - name: rpc-port
      port: 7051
  selector:
    app: kudu-master
---
# NodePort service for masters
apiVersion: v1
kind: Service
metadata:
  name: kudu-master-ui
  namespace: apache-kudu
  labels:
    app: kudu-master
spec:
  ports:
    - name: ui
      port: 8051
      nodePort: 30051
      targetPort: 8051
  selector:
    app: kudu-master
  type: NodePort
  externalTrafficPolicy: Cluster # Local: reachable only through the node that hosts the pod; Cluster: traffic is forwarded across all nodes
---
# headless service for tservers
apiVersion: v1
kind: Service
metadata:
  name: kudu-tservers
  namespace: apache-kudu
  labels:
    app: kudu-tserver
spec:
  clusterIP: None
  ports:
    - name: ui
      port: 8050
    - name: rpc-port
      port: 7050
  selector:
    app: kudu-tserver

kubectl apply -f kudu-svc.yaml

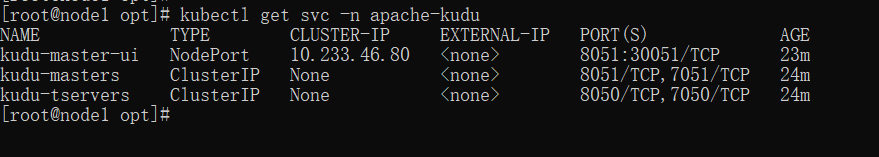

View the created Services:

kubectl get svc -n apache-kudu

6. Create the kudu-master and kudu-tserver StatefulSets

vi kudu-master-tserver-statefulset.yaml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kudu-master
  namespace: apache-kudu
  labels:
    app: kudu-master
spec:
  serviceName: kudu-masters
  podManagementPolicy: "Parallel"
  replicas: 3
  selector:
    matchLabels:
      app: kudu-master
  template:
    metadata:
      labels:
        app: kudu-master
    spec:
      containers:
        - name: kudu-master
          image: apache/kudu:1.15.0
          imagePullPolicy: IfNotPresent
          env:
            - name: GET_HOSTS_FROM
              value: dns
            - name: POD_IP
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: KUDU_MASTERS
              value: "kudu-master-0.kudu-masters.apache-kudu.svc.cluster.local,kudu-master-1.kudu-masters.apache-kudu.svc.cluster.local,kudu-master-2.kudu-masters.apache-kudu.svc.cluster.local"
          args: ["master"]
          ports:
            - containerPort: 8051
              name: master-ui
            - containerPort: 7051
              name: master-rpc
          volumeMounts:
            - name: kudu-master-data
              mountPath: /mnt/data0
            - name: kudu-master-data
              mountPath: /var/lib/kudu
      volumes:
        - name: kudu-master-data
          persistentVolumeClaim:
            claimName: local-kudu-master-pvc
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kudu-tserver
  namespace: apache-kudu
  labels:
    app: kudu-tserver
spec:
  serviceName: kudu-tservers
  podManagementPolicy: "Parallel"
  replicas: 3
  selector:
    matchLabels:
      app: kudu-tserver
  template:
    metadata:
      labels:
        app: kudu-tserver
    spec:
      containers:
        - name: kudu-tserver
          image: apache/kudu:1.15.0
          imagePullPolicy: IfNotPresent
          env:
            - name: GET_HOSTS_FROM
              value: dns
            - name: POD_IP
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: KUDU_MASTERS
              value: "kudu-master-0.kudu-masters.apache-kudu.svc.cluster.local,kudu-master-1.kudu-masters.apache-kudu.svc.cluster.local,kudu-master-2.kudu-masters.apache-kudu.svc.cluster.local"
          args: ["tserver"]
          ports:
            - containerPort: 8050
              name: tserver-ui
            - containerPort: 7050
              name: tserver-rpc
          volumeMounts:
            - name: kudu-tserver-data
              mountPath: /mnt/data0
            - name: kudu-tserver-data
              mountPath: /var/lib/kudu
      volumes:
        - name: kudu-tserver-data
          persistentVolumeClaim:
            claimName: local-kudu-tserver-pvc

kubectl apply -f kudu-master-tserver-statefulset.yaml

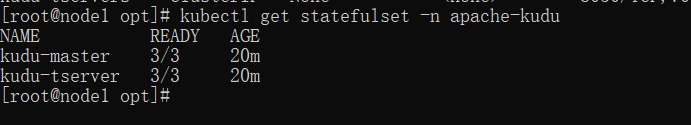

View the StatefulSets:

kubectl get statefulset -n apache-kudu

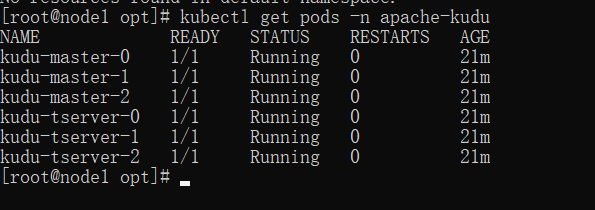

View the pods:

kubectl get pods -n apache-kudu

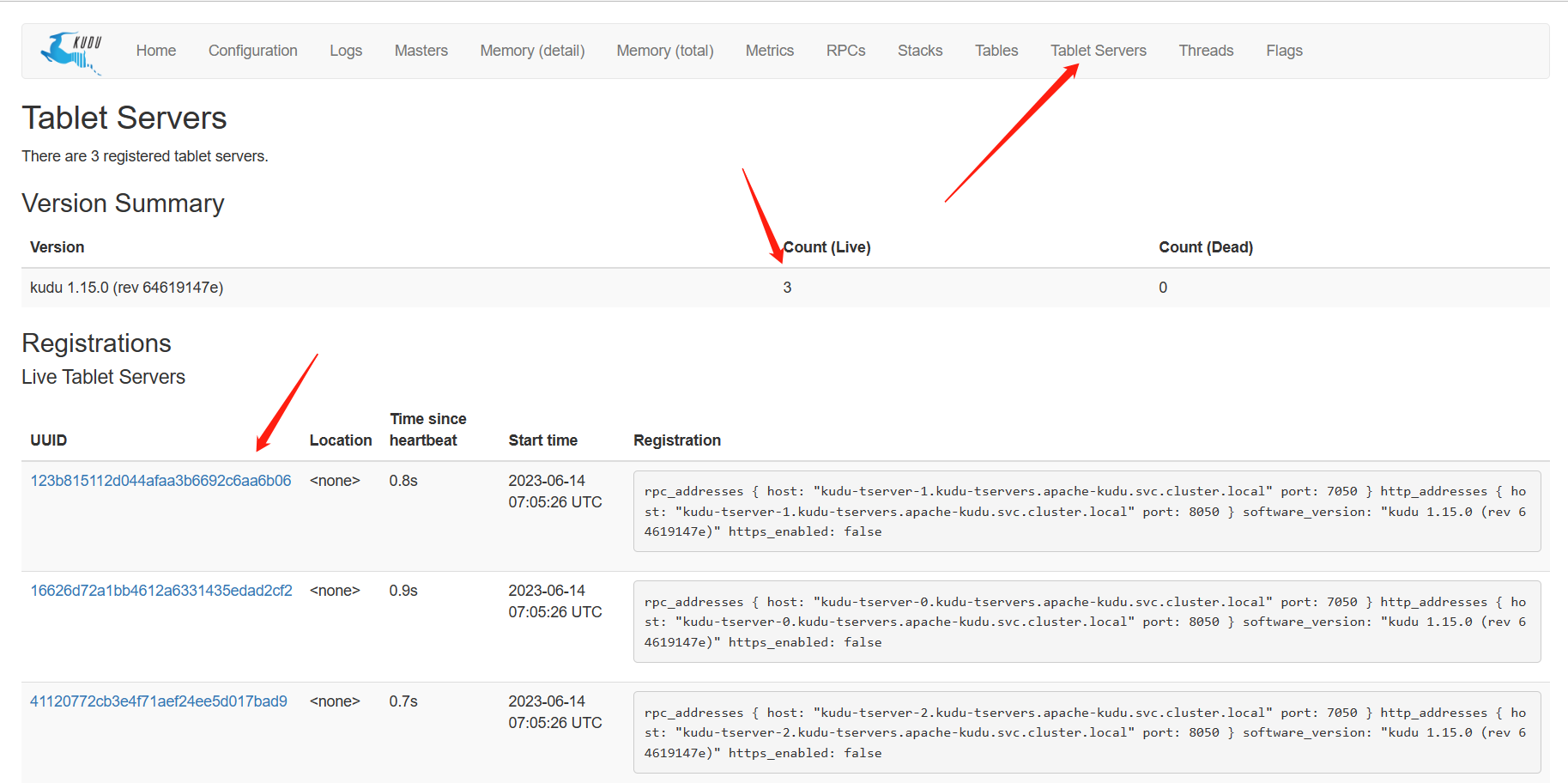

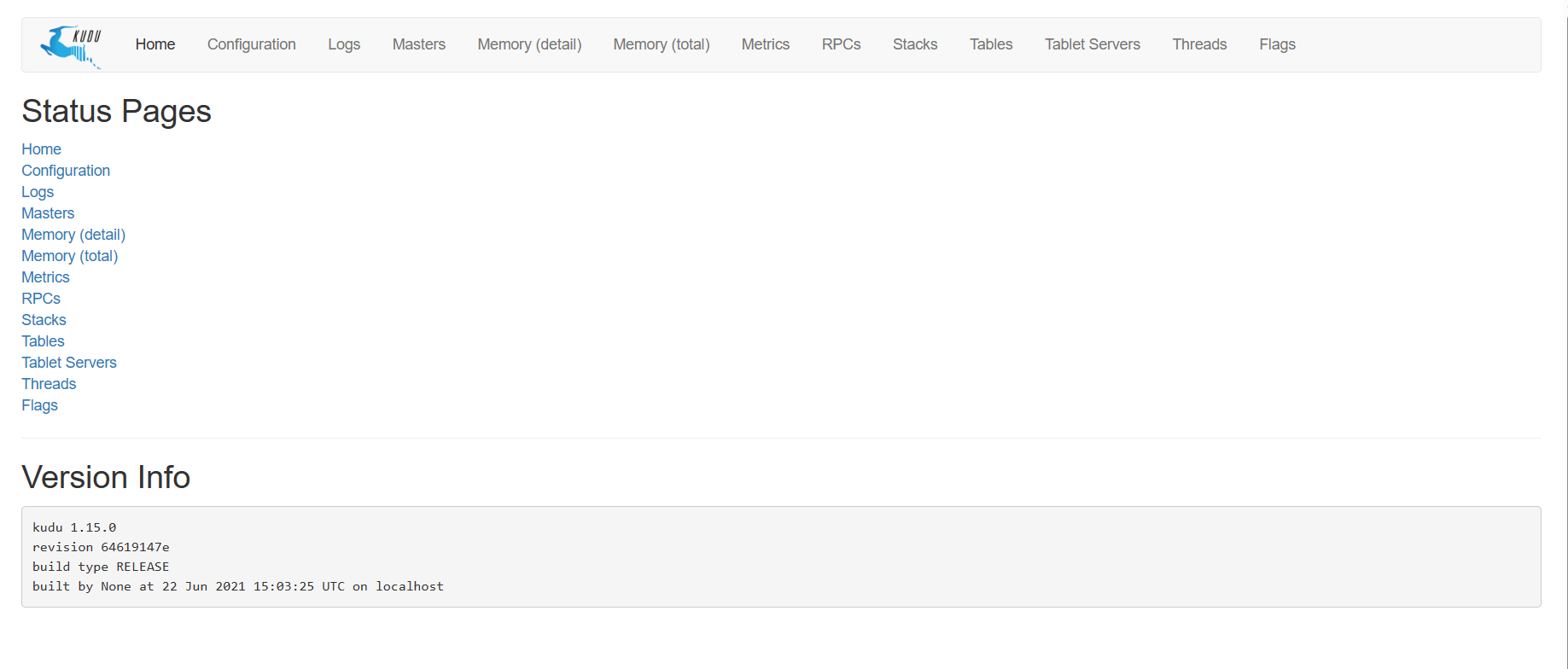
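The `KUDU_MASTERS` value in both StatefulSets lists one DNS name per master pod, following the pattern `<pod>.<headless-service>.<namespace>.svc.cluster.local`, where each pod is named `<statefulset>-<ordinal>`. If the replica count changes, the list has to change with it. The sketch below builds the string from its parts; `kudu_master_addrs` is a hypothetical helper, and it assumes the cluster domain is `cluster.local`, as in the manifests above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the comma-separated master address list.
# Args: StatefulSet name, headless Service name, namespace, replica count.
# Assumes the cluster domain is cluster.local.
kudu_master_addrs() {
  local sts="$1" svc="$2" ns="$3" replicas="$4"
  local i=0 addrs=""
  while [ "$i" -lt "$replicas" ]; do
    # StatefulSet pods are named <statefulset>-<ordinal>, starting at 0
    addrs="${addrs:+${addrs},}${sts}-${i}.${svc}.${ns}.svc.cluster.local"
    i=$((i + 1))
  done
  printf '%s\n' "$addrs"
}

# Usage: kudu_master_addrs kudu-master kudu-masters apache-kudu 3
```

With the arguments shown in the usage line, the output matches the `KUDU_MASTERS` value used in this step.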

7. View the web UI

http://nodel2:30051

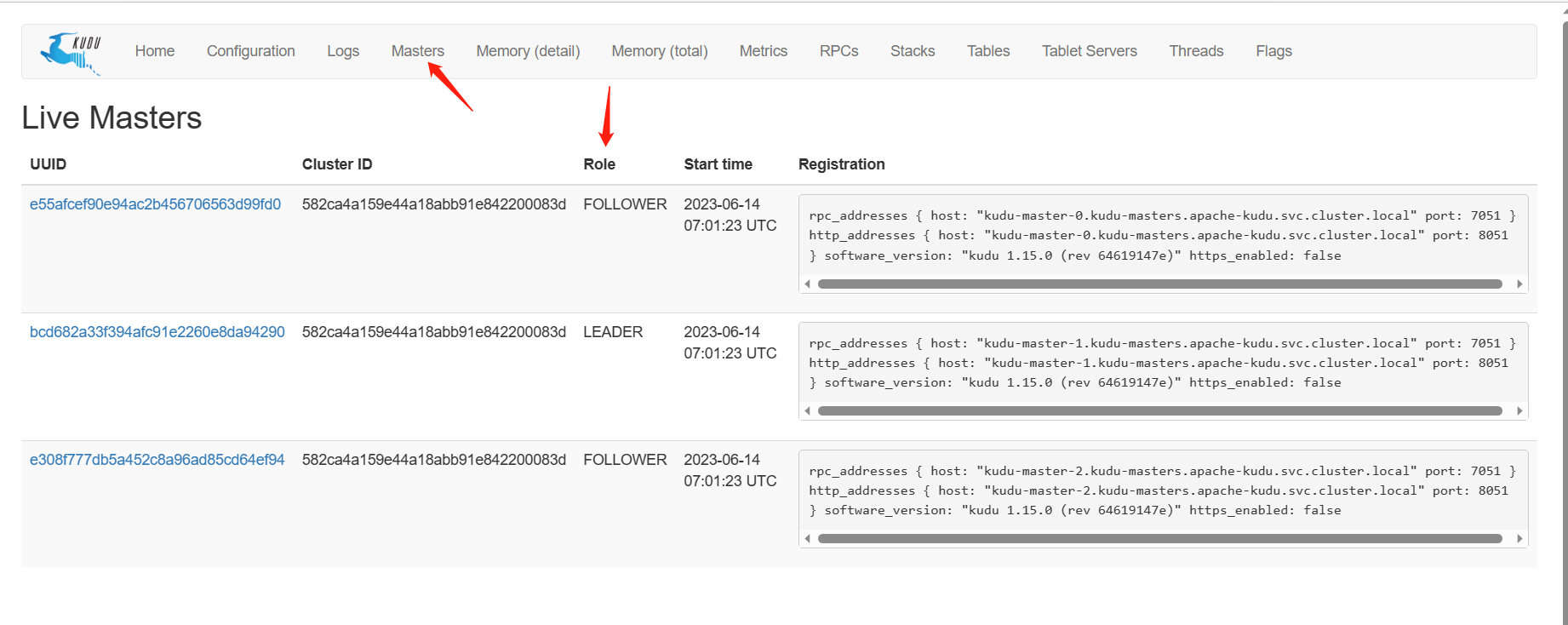
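With `externalTrafficPolicy: Cluster`, the NodePort 30051 should be reachable through any node of the cluster, so the UI can also be probed from a terminal. The following is a rough sketch; `master_ui_status` is a hypothetical helper, and the host argument is whatever node name or IP is resolvable from where the command runs.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the HTTP status code returned by the master web UI.
# Arg 1: node hostname or IP. Arg 2 (optional): NodePort, default 30051 from kudu-svc.yaml.
master_ui_status() {
  local host="$1" port="${2:-30051}"
  # -s hides progress, -o discards the body, -w prints only the status code
  curl -s -o /dev/null -w '%{http_code}' --max-time 5 "http://${host}:${port}/"
}

# Usage: master_ui_status node1
```

A status of 200 would indicate that the Service is routing to a running master; a timeout or connection error usually points at the Service, the pods, or a firewall between the client and the node.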

Check the master status:

Check the tserver status: