Kubernetes overview

Use kubeadm to quickly deploy a k8s cluster

Kubernetes high-availability cluster binary deployment (1) Host preparation and load balancer installation

Kubernetes high-availability cluster binary deployment (2) ETCD cluster deployment

Kubernetes high-availability cluster binary deployment (3) Deploy api-server

Kubernetes high-availability cluster binary deployment (4) Deploy kubectl and kube-controller-manager, kube-scheduler

Kubernetes high-availability cluster binary deployment (5) kubelet, kube-proxy, Calico, CoreDNS

Kubernetes high-availability cluster binary deployment (6) Kubernetes cluster node addition

1. Worker node deployment

1.1 docker installation and configuration

wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum -y install docker-ce

systemctl enable docker

systemctl start docker

cat <<EOF | sudo tee /etc/docker/daemon.json

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://8i185852.mirror.aliyuncs.com"]

}

EOF

native.cgroupdriver must be configured. If this step is skipped, kubelet will fail to start.

systemctl restart docker
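
Before moving on to kubelet, it may be worth confirming that the cgroup driver setting is really in place. The sketch below is only an illustration: `daemon_cgroup_driver` is a hypothetical helper name, and it reads the value back from daemon.json with plain text matching rather than a JSON parser.

```shell
# Print the cgroup driver configured in a Docker daemon.json file.
# daemon_cgroup_driver is a hypothetical helper, not part of Docker; $1 = file.
daemon_cgroup_driver() {
    # Match native.cgroupdriver=<value> and print only <value>.
    sed -n 's/.*native\.cgroupdriver=\([a-z]*\).*/\1/p' "$1"
}

# Usage on a node (the path comes from the step above):
#   daemon_cgroup_driver /etc/docker/daemon.json    # expect: systemd
#   docker info --format '{{.CgroupDriver}}'        # what the running daemon reports
```

If the two values differ, Docker has most likely not been restarted since daemon.json was written.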

1.2 Deploy kubelet

Run on k8s-master1 (which serves as both control plane and data plane)

1.2.1 Create kubelet-bootstrap.kubeconfig

BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

# 192.168.10.100 is the VIP (virtual IP)

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.10.100:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

# Create the cluster role bindings

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubelet-bootstrap

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl describe clusterrolebinding cluster-system-anonymous

kubectl describe clusterrolebinding kubelet-bootstrap

1.2.2 Create kubelet configuration file

[root@k8s-master1 k8s-work]# cat > kubelet.json << "EOF"

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "192.168.10.103",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.96.0.2"]

}

EOF

1.2.3 Create the kubelet service management file

cat > kubelet.service << "EOF"

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \
  --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \
  --cert-dir=/etc/kubernetes/ssl \
  --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
  --config=/etc/kubernetes/kubelet.json \
  --network-plugin=cni \
  --rotate-certificates \
  --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.2 \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

1.2.4 Synchronize files to cluster nodes

cp kubelet-bootstrap.kubeconfig /etc/kubernetes/

cp kubelet.json /etc/kubernetes/

cp kubelet.service /usr/lib/systemd/system/

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kubelet-bootstrap.kubeconfig kubelet.json $i:/etc/kubernetes/;done

for i in k8s-master2 k8s-master3 k8s-worker1;do scp ca.pem $i:/etc/kubernetes/ssl/;done

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kubelet.service $i:/usr/lib/systemd/system/;done

Note:

The address field in kubelet.json must be changed to the IP address of the host the file is on.

vim /etc/kubernetes/kubelet.json
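
Editing the file by hand on every node works, but the same change could also be scripted. The following is a minimal sketch, assuming GNU sed (as shipped with CentOS) and a file in which `"address"` appears only once; `set_kubelet_address` is a hypothetical helper name.

```shell
# Rewrite the "address" field of a kubelet.json file in place.
# set_kubelet_address is a hypothetical helper; $1 = file, $2 = host IP.
set_kubelet_address() {
    # Replace whatever value follows "address": with the given IP.
    sed -i 's/\("address": *"\)[^"]*"/\1'"$2"'"/' "$1"
}

# Example, run on k8s-master2 (192.168.10.104 in this series):
#   set_kubelet_address /etc/kubernetes/kubelet.json 192.168.10.104
```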

1.2.5 Create directory and start service

Execute on all worker nodes

mkdir -p /var/lib/kubelet

mkdir -p /var/log/kubernetes

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady <none> 12s v1.21.10

k8s-master2 NotReady <none> 19s v1.21.10

k8s-master3 NotReady <none> 19s v1.21.10

k8s-worker1 NotReady <none> 18s v1.21.10

The nodes are NotReady because the network plugin has not been deployed yet.

# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

csr-b949p 7m55s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

csr-c9hs4 3m34s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

csr-r8vhp 5m50s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

csr-zb4sr 3m40s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

Note:

After confirming that the kubelet service has started successfully, go to a master node and approve the bootstrap requests.
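
If some requests are still waiting for approval, the step could be scripted roughly as follows. This is a sketch under assumptions: `pending_csrs` is a hypothetical helper that relies on CONDITION being the last column of `kubectl get csr`, and `xargs -r` assumes GNU xargs.

```shell
# Print the names of CSRs whose CONDITION column is exactly "Pending".
# Reads `kubectl get csr` output on stdin; pending_csrs is a hypothetical helper.
pending_csrs() {
    # Skip the header row, then compare the last column.
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Usage on a master node:
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Requests that already show Approved,Issued, as in the listing above, are left untouched.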

1.3 Deploy kube-proxy

1.3.1 Create kube-proxy certificate request file

[root@k8s-master1 k8s-work]# cat > kube-proxy-csr.json << "EOF"

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

]

}

EOF

1.3.2 Generate certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

# ls kube-proxy*

kube-proxy.csr kube-proxy-csr.json kube-proxy-key.pem kube-proxy.pem

1.3.3 Create kubeconfig file

# Set the cluster

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.10.100:6443 --kubeconfig=kube-proxy.kubeconfig

# Set the credentials (client certificate)

kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

# Set the context

kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

# Use the context

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

1.3.4 Create a service configuration file

cat > kube-proxy.yaml << "EOF"

apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 192.168.10.103 # address of this host
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: 10.244.0.0/16 # pod network, no need to change
healthzBindAddress: 192.168.10.103:10256 # address of this host
kind: KubeProxyConfiguration
metricsBindAddress: 192.168.10.103:10249 # address of this host
mode: "ipvs" # ipvs suits large clusters better than iptables

EOF

1.3.5 Create a service startup management file

cat > kube-proxy.service << "EOF"

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

1.3.6 Synchronize files to cluster working node hosts

cp kube-proxy*.pem /etc/kubernetes/ssl/

cp kube-proxy.kubeconfig kube-proxy.yaml /etc/kubernetes/

cp kube-proxy.service /usr/lib/systemd/system/

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kube-proxy.kubeconfig kube-proxy.yaml $i:/etc/kubernetes/;done

for i in k8s-master2 k8s-master3 k8s-worker1;do scp kube-proxy.service $i:/usr/lib/systemd/system/;done

Note:

Change the IP addresses in kube-proxy.yaml to the IP of the host the file is on.

vim /etc/kubernetes/kube-proxy.yaml

1.3.7 Service start

# Create the WorkingDirectory

mkdir -p /var/lib/kube-proxy

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy

2. Deploy the Calico network component

2.1 Download

wget https://docs.projectcalico.org/v3.19/manifests/calico.yaml

2.2 Modify files

vim calico.yaml

# Uncomment the following two lines (around lines 3683-3684 of calico.yaml) and set the pod network CIDR

- name: CALICO_IPV4POOL_CIDR

  value: "10.244.0.0/16" # pod network
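
The pod network set here should agree with the clusterCIDR in kube-proxy.yaml. A quick comparison might look like the sketch below; `cidr_of` is a hypothetical helper that uses plain text matching, skips commented-out lines, and assumes the CIDR sits on the key's line or the line right after it.

```shell
# Print the first IPv4 CIDR found on the line containing the key, or the line after it.
# cidr_of is a hypothetical helper; $1 = key to search for, $2 = file.
cidr_of() {
    # Drop commented-out lines first so a still-commented default is not picked up.
    grep -v '^[[:space:]]*#' "$2" | grep -A1 "$1" \
        | grep -Eo '[0-9]+(\.[0-9]+){3}/[0-9]+' | head -n 1
}

# Usage in a directory that holds both files:
#   [ "$(cidr_of CALICO_IPV4POOL_CIDR calico.yaml)" = "$(cidr_of clusterCIDR kube-proxy.yaml)" ] \
#       && echo "pod CIDR matches" || echo "pod CIDR mismatch"
```

An empty result for calico.yaml usually means the two lines are still commented out.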

2.3 Apply the manifest

kubectl apply -f calico.yaml

2.4 Verify the result

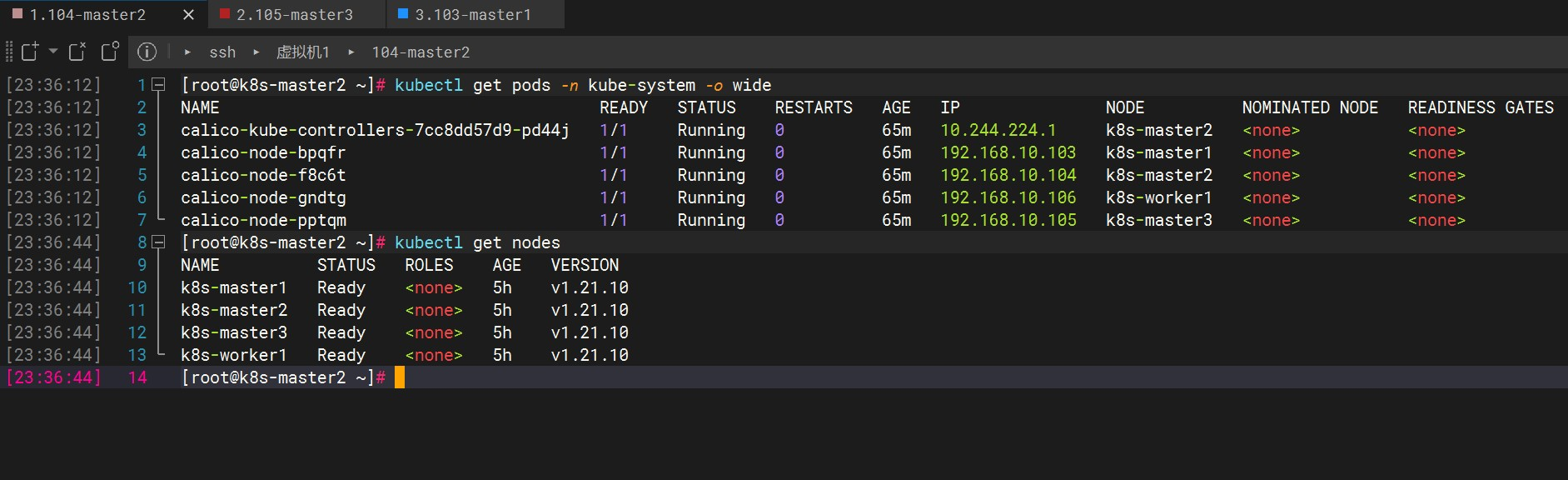

[root@k8s-master1 k8s-work]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-7cc8dd57d9-dcwjv 0/1 ContainerCreating 0 94s

calico-node-2pmqz 0/1 Init:0/3 0 94s

calico-node-9ms2r 0/1 Init:0/3 0 94s

calico-node-tj5rt 0/1 Init:0/3 0 94s

calico-node-wnjcv 0/1 PodInitializing 0 94s

[root@k8s-master1 k8s-work]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

calico-kube-controllers-7cc8dd57d9-dcwjv 0/1 ContainerCreating 0 2m29s <none> k8s-master2 <none> <none>

calico-node-2pmqz 0/1 Init:0/3 0 2m29s 192.168.10.103 k8s-master1 <none> <none>

calico-node-9ms2r 0/1 Init:ImagePullBackOff 0 2m29s 192.168.10.105 k8s-master3 <none> <none>

calico-node-tj5rt 0/1 Init:0/3 0 2m29s 192.168.10.106 k8s-worker1 <none> <none>

calico-node-wnjcv 0/1 PodInitializing 0 2m29s 192.168.10.104 k8s-master2 <none> <none>

[root@k8s-master1 k8s-work]#

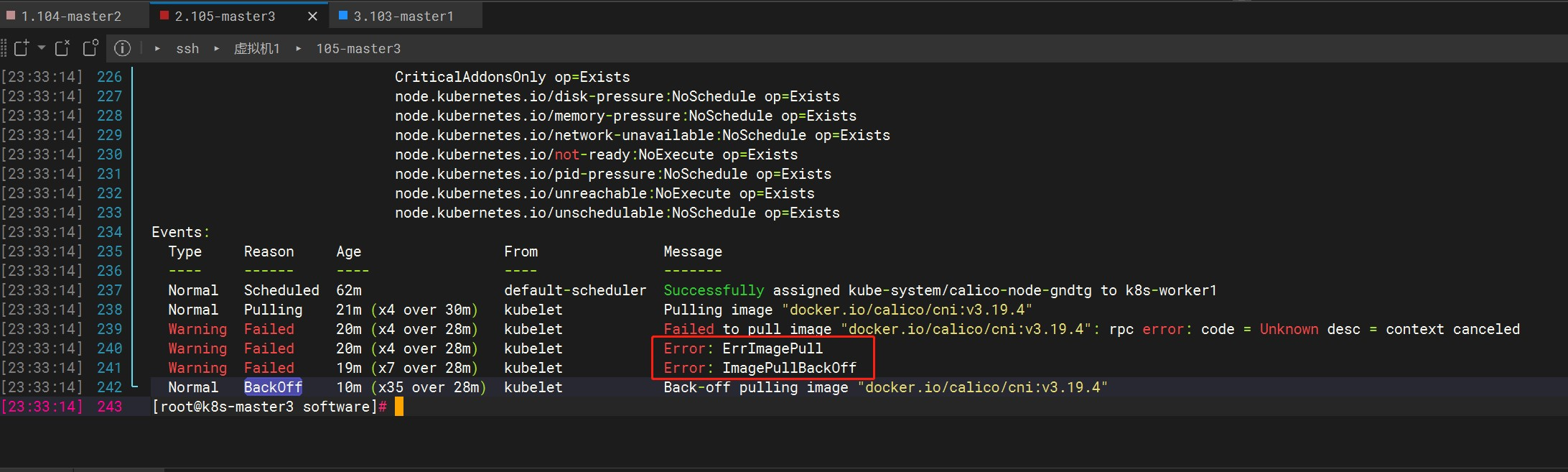

If STATUS does not change for a long time, check the details with the following command:

kubectl describe pod calico-node-gndtg -n kube-system
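
To find which pods need a closer look in the first place, the listing can be filtered. This is a small sketch, assuming the default column layout of `kubectl get pods` within a single namespace (NAME, READY, STATUS, ...); `not_running_pods` is a hypothetical helper name.

```shell
# Print the name and status of every pod whose STATUS column is not "Running".
# Reads `kubectl get pods -n <namespace>` output on stdin.
not_running_pods() {
    # Skip the header row; STATUS is the third column without -A.
    awk 'NR > 1 && $3 != "Running" { print $1, $3 }'
}

# Usage:
#   kubectl get pods -n kube-system | not_running_pods
#   kubectl describe pod <pod-name> -n kube-system   # then read the Events section
```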

If a pod stays in Init:ImagePullBackOff and still does not reach Running after a long wait, you can download the image package yourself and upload it to the server, for example over FTP.

Find the required version at https://github.com/projectcalico/calico/releases?page=3, download it, and upload the corresponding images from its images directory to the server.

docker load -i calico-pod2daemon-flexvol.tar

docker load -i calico-kube-controllers.tar

docker load -i calico-cni.tar

docker load -i calico-node.tar

docker images

I have four worker nodes here. One of them pulled the images and reached Running normally after the apply command, while the other three stayed in the pulling state for a long time. Loading the images manually as described above solved it, so in the end it was a network problem.

If a pod stays in Pending, check whether the node has a taint:

kubectl describe node k8s-master2 |grep Taint

# Remove the taint

kubectl taint nodes k8s-master2 key:NoSchedule-

There are three taint effects:

NoSchedule: new Pods will not be scheduled onto the node

PreferNoSchedule: the scheduler tries to avoid the node, but Pods may still be scheduled onto it

NoExecute: new Pods will not be scheduled, and existing Pods on the node are evicted
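
Since the `key` in the removal command above stands for the node's actual taint, the command can be derived from the describe output. The sketch below only prints the command for review and does not run it; `taint_removal_cmd` is a hypothetical helper, and it handles only the first taint, the one printed on the `Taints:` line itself.

```shell
# Turn the "Taints:" line of `kubectl describe node` into a removal command.
# taint_removal_cmd is a hypothetical helper; $1 = node name, stdin = describe output.
taint_removal_cmd() {
    # A trailing "-" on the taint spec tells kubectl to remove it.
    awk -v node="$1" '$1 == "Taints:" && $2 != "<none>" {
        print "kubectl taint nodes " node " " $2 "-"
    }'
}

# Usage:
#   kubectl describe node k8s-master2 | taint_removal_cmd k8s-master2
```

Nothing is printed when the node reports `<none>`.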

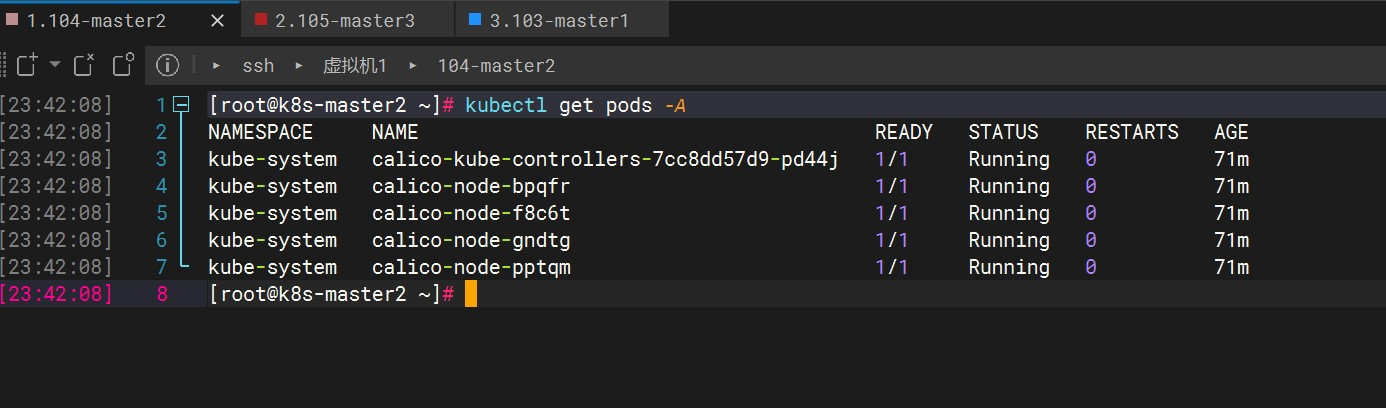

Finally, all pods are Running and all nodes are Ready

# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-7cc8dd57d9-pd44j 1/1 Running 0 70m

kube-system calico-node-bpqfr 1/1 Running 0 70m

kube-system calico-node-f8c6t 1/1 Running 0 70m

kube-system calico-node-gndtg 1/1 Running 0 70m

kube-system calico-node-pptqm 1/1 Running 0 70m

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready <none> 5h v1.21.10

k8s-master2 Ready <none> 5h v1.21.10

k8s-master3 Ready <none> 5h v1.21.10

k8s-worker1 Ready <none> 5h v1.21.10

3. Deploy CoreDNS

CoreDNS provides name resolution for services in k8s. For example, two services deployed in the cluster can reach each other by name, and services inside the cluster can resolve names of services on the Internet.

Run the following on k8s-master1, in the /data/k8s-work/ directory:

cat > coredns.yaml << "EOF"

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
  - apiGroups:
      - ""
    resources:
      - endpoints
      - services
      - pods
      - namespaces
    verbs:
      - list
      - watch
  - apiGroups:
      - discovery.k8s.io
    resources:
      - endpointslices
    verbs:
      - list
      - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
  - kind: ServiceAccount
    name: coredns
    namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      tolerations:
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      nodeSelector:
        kubernetes.io/os: linux
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: k8s-app
                      operator: In
                      values: ["kube-dns"]
                topologyKey: kubernetes.io/hostname
      containers:
        - name: coredns
          image: coredns/coredns:1.8.4
          imagePullPolicy: IfNotPresent
          resources:
            limits:
              memory: 170Mi
            requests:
              cpu: 100m
              memory: 70Mi
          args: [ "-conf", "/etc/coredns/Corefile" ]
          volumeMounts:
            - name: config-volume
              mountPath: /etc/coredns
              readOnly: true
          ports:
            - containerPort: 53
              name: dns
              protocol: UDP
            - containerPort: 53
              name: dns-tcp
              protocol: TCP
            - containerPort: 9153
              name: metrics
              protocol: TCP
          securityContext:
            allowPrivilegeEscalation: false
            capabilities:
              add:
                - NET_BIND_SERVICE
              drop:
                - all
            readOnlyRootFilesystem: true
          livenessProbe:
            httpGet:
              path: /health
              port: 8080
              scheme: HTTP
            initialDelaySeconds: 60
            timeoutSeconds: 5
            successThreshold: 1
            failureThreshold: 5
          readinessProbe:
            httpGet:
              path: /ready
              port: 8181
              scheme: HTTP
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
              - key: Corefile
                path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.96.0.2 # must match the clusterDNS IP configured for kubelet above
  ports:
    - name: dns
      port: 53
      protocol: UDP
    - name: dns-tcp
      port: 53
      protocol: TCP
    - name: metrics
      port: 9153
      protocol: TCP

EOF

kubectl apply -f coredns.yaml
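
The clusterIP of the kube-dns Service has to be the same address that kubelet hands to pods as clusterDNS. A rough way to compare the two files is sketched below; `ip_of` is a hypothetical helper based on plain text matching, and it assumes the address is on the same line as the key.

```shell
# Print the first IPv4 address on the first line that contains the given key.
# ip_of is a hypothetical helper; $1 = key, $2 = file.
ip_of() {
    grep "$1" "$2" | grep -Eo '[0-9]+(\.[0-9]+){3}' | head -n 1
}

# Usage on k8s-master1 in /data/k8s-work:
#   [ "$(ip_of clusterDNS kubelet.json)" = "$(ip_of clusterIP coredns.yaml)" ] \
#       && echo "DNS address matches" || echo "DNS address mismatch"
```

A mismatch would leave pods pointing at a resolver address that no Service answers on.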

# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-7cc8dd57d9-pd44j 1/1 Running 1 24h

kube-system calico-node-bpqfr 1/1 Running 1 24h

kube-system calico-node-f8c6t 1/1 Running 1 24h

kube-system calico-node-gndtg 1/1 Running 2 24h

kube-system calico-node-pptqm 1/1 Running 1 24h

kube-system coredns-675db8b7cc-xlwsp 1/1 Running 0 3m21s

#kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

calico-kube-controllers-7cc8dd57d9-pd44j 1/1 Running 1 24h 10.244.224.2 k8s-master2 <none> <none>

calico-node-bpqfr 1/1 Running 1 24h 192.168.10.103 k8s-master1 <none> <none>

calico-node-f8c6t 1/1 Running 1 24h 192.168.10.104 k8s-master2 <none> <none>

calico-node-gndtg 1/1 Running 2 24h 192.168.10.106 k8s-worker1 <none> <none>

calico-node-pptqm 1/1 Running 1 24h 192.168.10.105 k8s-master3 <none> <none>

coredns-675db8b7cc-xlwsp 1/1 Running 0 3m47s 10.244.159.129 k8s-master1 <none> <none>

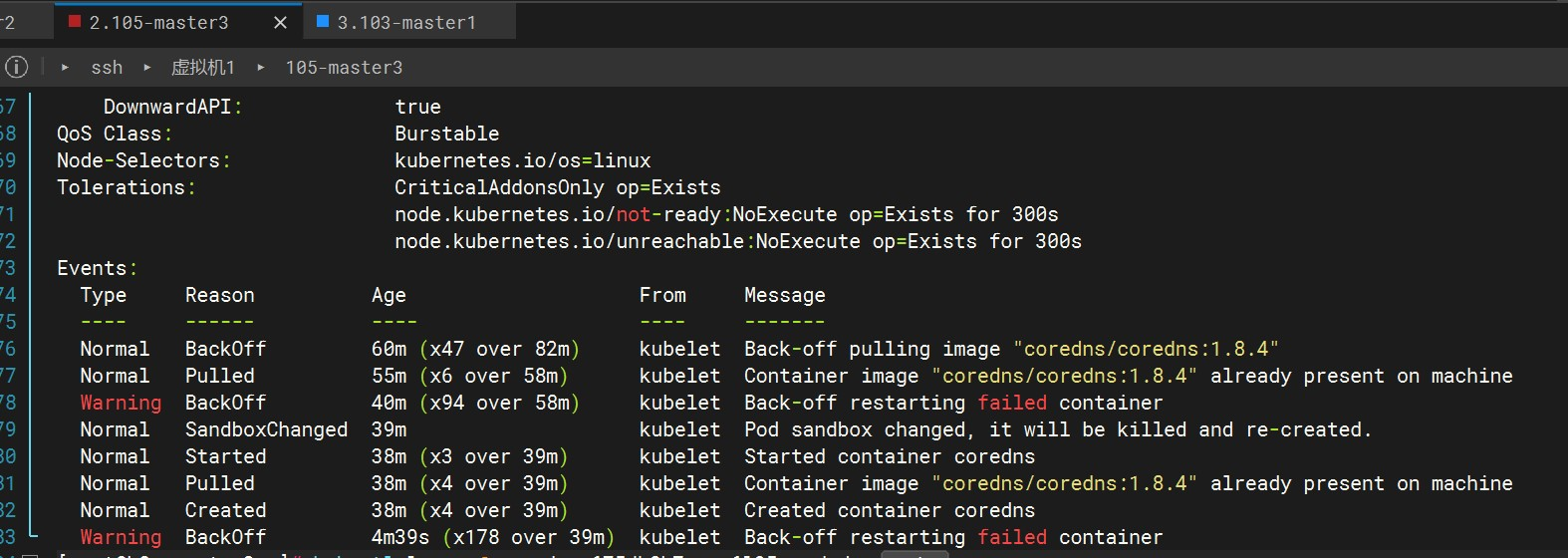

As with Calico, if the pod stays in ImagePullBackOff, the cause is usually a problem pulling the image. You can download the image locally and upload it to the server to load it.

To get the image, search Docker Hub for the image and version you need, download it locally, and upload it to the server.

docker load -i coredns-coredns-1.8.4-.tar

docker images

# Re-tag the image if its tag does not match the one in coredns.yaml

docker tag <image-id> coredns/coredns:1.8.4

At this point the pod still had not started normally. Check the details with:

kubectl describe pod coredns-675db8b7cc-q6l95 -n kube-system

After deleting the pod and recreating it, the CoreDNS Pod works fine

# View the logs

kubectl logs -f coredns-675db8b7cc-q6l95 -n kube-system

# Delete and recreate the CoreDNS Pod

kubectl delete pod coredns-675db8b7cc-q6l95 -n kube-system

kubectl apply -f coredns.yaml

4. Deploy an application to verify the cluster

Create pods on k8s-master1

[root@k8s-master1 k8s-work]# cat > nginx.yaml << "EOF"

---
apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx-web
spec:
  replicas: 2
  selector:
    name: nginx
  template:
    metadata:
      labels:
        name: nginx
    spec:
      containers:
        - name: nginx
          image: nginx:1.19.6
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service # a Service exposes workloads in the k8s cluster in different ways
metadata:
  name: nginx-service-nodeport
spec:
  ports:
    - port: 80
      targetPort: 80
      nodePort: 30001 # map port 80 of the application in the cluster to port 30001 on the nodes
      protocol: TCP
  type: NodePort
  selector:
    name: nginx

EOF

kubectl apply -f nginx.yaml

# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-web-qzvw4 1/1 Running 0 58s 10.244.194.65 k8s-worker1 <none> <none>

nginx-web-spw5t 1/1 Running 0 58s 10.244.224.1 k8s-master2 <none> <none>

# kubectl get all

NAME READY STATUS RESTARTS AGE

pod/nginx-web-jnbhx 1/1 Running 1 23h

NAME DESIRED CURRENT READY AGE

replicationcontroller/nginx-web 1 1 1 2d

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 3d6h

service/nginx-service-nodeport NodePort 10.96.72.89 <none> 80:30001/TCP 2d

Check whether port 30001 is listening:

ss -anput | grep ":30001"

You can see that every worker node is listening on the port

Access: http://192.168.10.103:30001, http://192.168.10.104:30001, http://192.168.10.105:30001, http://192.168.10.106:30001
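
Instead of opening each URL in a browser, the four nodes could be probed in one go. The following is a sketch only: `check_nodeport` is a hypothetical helper, and it assumes curl is installed on the machine running it.

```shell
# Print the HTTP status code returned by each node on the given NodePort.
# check_nodeport is a hypothetical helper; $1 = port, remaining args = node IPs.
check_nodeport() {
    port=$1
    shift
    for ip in "$@"; do
        # -s silent, -o discard the body, -m 5 second timeout, -w print the status code
        code=$(curl -s -o /dev/null -m 5 -w '%{http_code}' "http://$ip:$port" || true)
        echo "$ip:$port $code"
    done
}

# Usage:
#   check_nodeport 30001 192.168.10.103 192.168.10.104 192.168.10.105 192.168.10.106
```

A node that cannot be reached is reported by curl with status code 000.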

# Check component status

kubectl get cs

# List pods

kubectl get pods