MapReduce in depth (part 2): 14. MapReduce data compression with Snappy

File compression has two advantages: it saves disk space, and it speeds up the transfer of data over the network and to and from disk.
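As a rough illustration of the space saving, here is a minimal sketch that compresses some repetitive text in memory and compares sizes. It uses GZIP from the JDK rather than Snappy, only because Snappy is not part of the standard library; the class name `CompressionSizeDemo` and the sample record are made up for this example, and the ratio depends on the data.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.zip.GZIPOutputStream;

public class CompressionSizeDemo {

    // Compress a byte array in memory with GZIP and return the compressed bytes.
    public static byte[] gzip(byte[] input) throws IOException {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (GZIPOutputStream out = new GZIPOutputStream(buffer)) {
            out.write(input);
        }
        return buffer.toByteArray();
    }

    // Build repetitive, log-like sample data (a hypothetical record layout).
    public static byte[] sampleData(int lines) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < lines; i++) {
            sb.append("13500000000\tupFlow=100\tdownFlow=200\n");
        }
        return sb.toString().getBytes(StandardCharsets.UTF_8);
    }

    public static void main(String[] args) throws IOException {
        byte[] raw = sampleData(10_000);
        byte[] packed = gzip(raw);
        System.out.println("raw bytes:        " + raw.length);
        System.out.println("compressed bytes: " + packed.length);
    }
}
```

Fewer bytes on disk also means fewer bytes to move during the shuffle between map and reduce, which is where the transfer benefit comes from.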

Method 1: set compression in code

Code:

FlowMain:

    public static void main(String[] args) throws Exception {
        Configuration configuration = new Configuration();

        // Enable compression of the map-stage output
        configuration.set("mapreduce.map.output.compress", "true");
        configuration.set("mapreduce.map.output.compress.codec", "org.apache.hadoop.io.compress.SnappyCodec");

        // Enable compression of the reduce-stage (final job) output
        configuration.set("mapreduce.output.fileoutputformat.compress", "true");
        // The compression type (RECORD or BLOCK) applies to SequenceFile output
        configuration.set("mapreduce.output.fileoutputformat.compress.type", "RECORD");
        configuration.set("mapreduce.output.fileoutputformat.compress.codec", "org.apache.hadoop.io.compress.SnappyCodec");

        // Run the job with this configuration and exit with its status code
        int run = ToolRunner.run(configuration, new FlowMain(), args);
        System.exit(run);
    }

Method 2: configure compression globally for MapReduce

We can modify the mapred-site.xml configuration file and then restart the cluster, so that compression applies to all MapReduce jobs (this is usually not how it is configured).

Compress the map output data:

    <property>
        <name>mapreduce.map.output.compress</name>
        <value>true</value>
    </property>
    <property>
        <name>mapreduce.map.output.compress.codec</name>
        <value>org.apache.hadoop.io.compress.SnappyCodec</value>
    </property>

Compress the reduce output data:

    <property>
        <name>mapreduce.output.fileoutputformat.compress</name>
        <value>true</value>
    </property>
    <property>
        <name>mapreduce.output.fileoutputformat.compress.type</name>
        <value>RECORD</value>
    </property>
    <property>
        <name>mapreduce.output.fileoutputformat.compress.codec</name>
        <value>org.apache.hadoop.io.compress.SnappyCodec</value>
    </property>
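In mapred-site.xml these `<property>` entries sit inside the file's `<configuration>` root element. As a quick sanity check before restarting the cluster, a sketch like the following can parse such a fragment with the JDK's XML parser and list the name/value pairs it finds. The class `MapredSiteCheck` and its method are hypothetical helpers written for this example, not part of Hadoop.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

public class MapredSiteCheck {

    // Read Hadoop-style <property><name>..</name><value>..</value></property> entries.
    // Fails with an exception if the XML is not well-formed.
    public static Map<String, String> readProperties(String xml) throws Exception {
        Document doc = DocumentBuilderFactory.newInstance()
                .newDocumentBuilder()
                .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        Map<String, String> result = new LinkedHashMap<>();
        NodeList properties = doc.getElementsByTagName("property");
        for (int i = 0; i < properties.getLength(); i++) {
            Element property = (Element) properties.item(i);
            String name = property.getElementsByTagName("name").item(0).getTextContent().trim();
            String value = property.getElementsByTagName("value").item(0).getTextContent().trim();
            result.put(name, value);
        }
        return result;
    }

    public static void main(String[] args) throws Exception {
        // A shortened copy of the settings above, wrapped in the root element.
        String xml =
                "<configuration>"
              + "<property><name>mapreduce.map.output.compress</name><value>true</value></property>"
              + "<property><name>mapreduce.map.output.compress.codec</name>"
              + "<value>org.apache.hadoop.io.compress.SnappyCodec</value></property>"
              + "</configuration>";
        readProperties(xml).forEach((name, value) -> System.out.println(name + " = " + value));
    }
}
```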

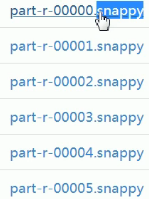

Result: the job generates compressed output files.

Note: These compressed files are inconvenient to open manually, but the program automatically decompresses them based on their file extension and then passes the data on to the next step.
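The extension-based lookup mentioned in the note can be sketched as follows. In Hadoop this job is done by `CompressionCodecFactory`; the class below is only a simplified, self-contained stand-in that maps a file name to a codec class name, and `CodecByExtension`, `codecFor`, and the file names are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Locale;
import java.util.Map;
import java.util.Optional;

public class CodecByExtension {

    // File extension -> Hadoop codec class name (a small subset, for illustration).
    private static final Map<String, String> CODECS = new LinkedHashMap<>();
    static {
        CODECS.put(".snappy", "org.apache.hadoop.io.compress.SnappyCodec");
        CODECS.put(".gz", "org.apache.hadoop.io.compress.GzipCodec");
        CODECS.put(".bz2", "org.apache.hadoop.io.compress.BZip2Codec");
        CODECS.put(".deflate", "org.apache.hadoop.io.compress.DefaultCodec");
    }

    // Return the codec class name whose extension matches the end of the file name,
    // or an empty Optional when the file is treated as uncompressed.
    public static Optional<String> codecFor(String fileName) {
        String lower = fileName.toLowerCase(Locale.ROOT);
        for (Map.Entry<String, String> entry : CODECS.entrySet()) {
            if (lower.endsWith(entry.getKey())) {
                return Optional.of(entry.getValue());
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        // Illustrative file names only.
        System.out.println(codecFor("part-r-00000.snappy"));
        System.out.println(codecFor("part-r-00000"));
    }
}
```

Because the codec is picked from the file name, a follow-up job can read the `.snappy` output of this one as input without any extra configuration, provided the Snappy codec is available on the cluster.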