1. Introduction

Netty is a high-performance, asynchronous, event-driven network framework. It is built on the API provided by Java NIO and supports TCP, UDP, and file transfer.

2. Reactor model

Reactor is an event-driven model for handling client requests concurrently. The server uses I/O multiplexing: a non-blocking thread monitors many client connections at once and dispatches each request for processing as soon as its event is ready.

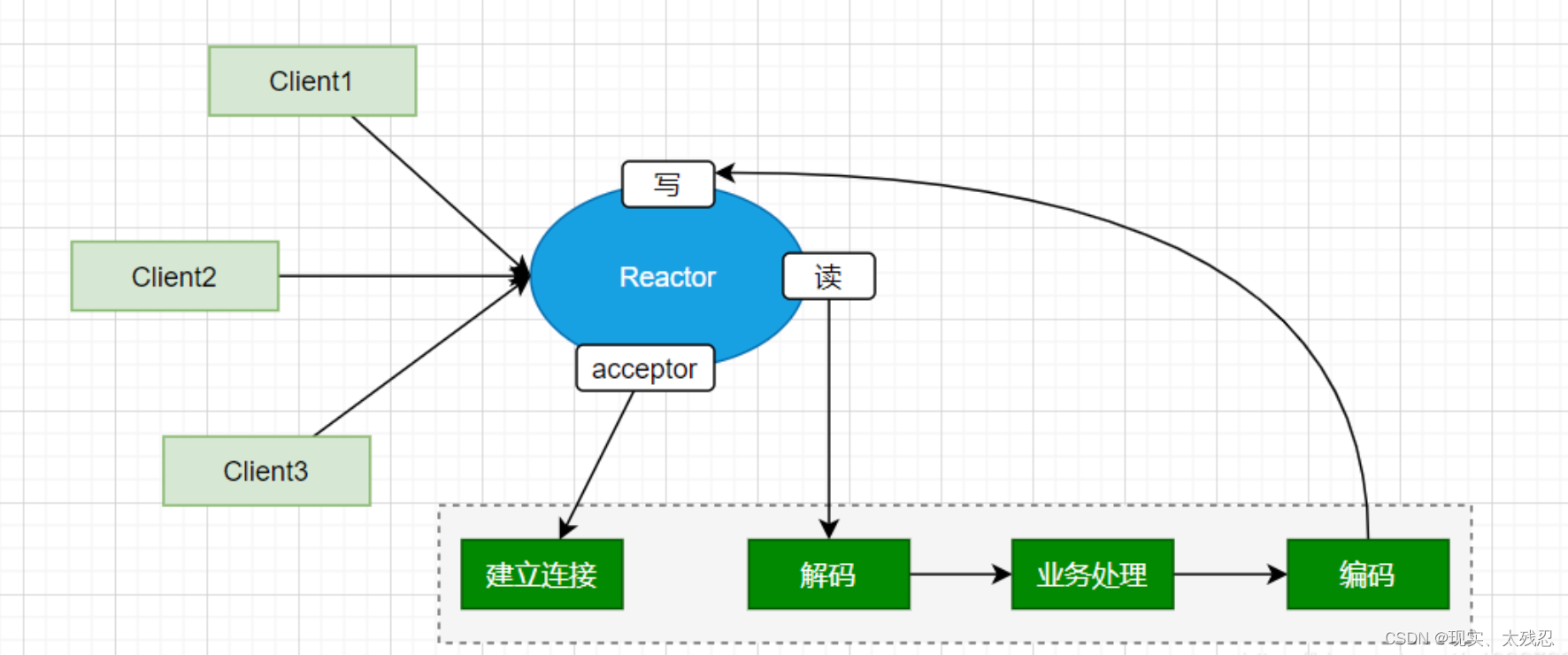

(1) Reactor single-threaded model

A single thread is responsible for accepting connections, reading, writing, and all other operations. If the business logic contains a time-consuming operation, the processing of every request is delayed.

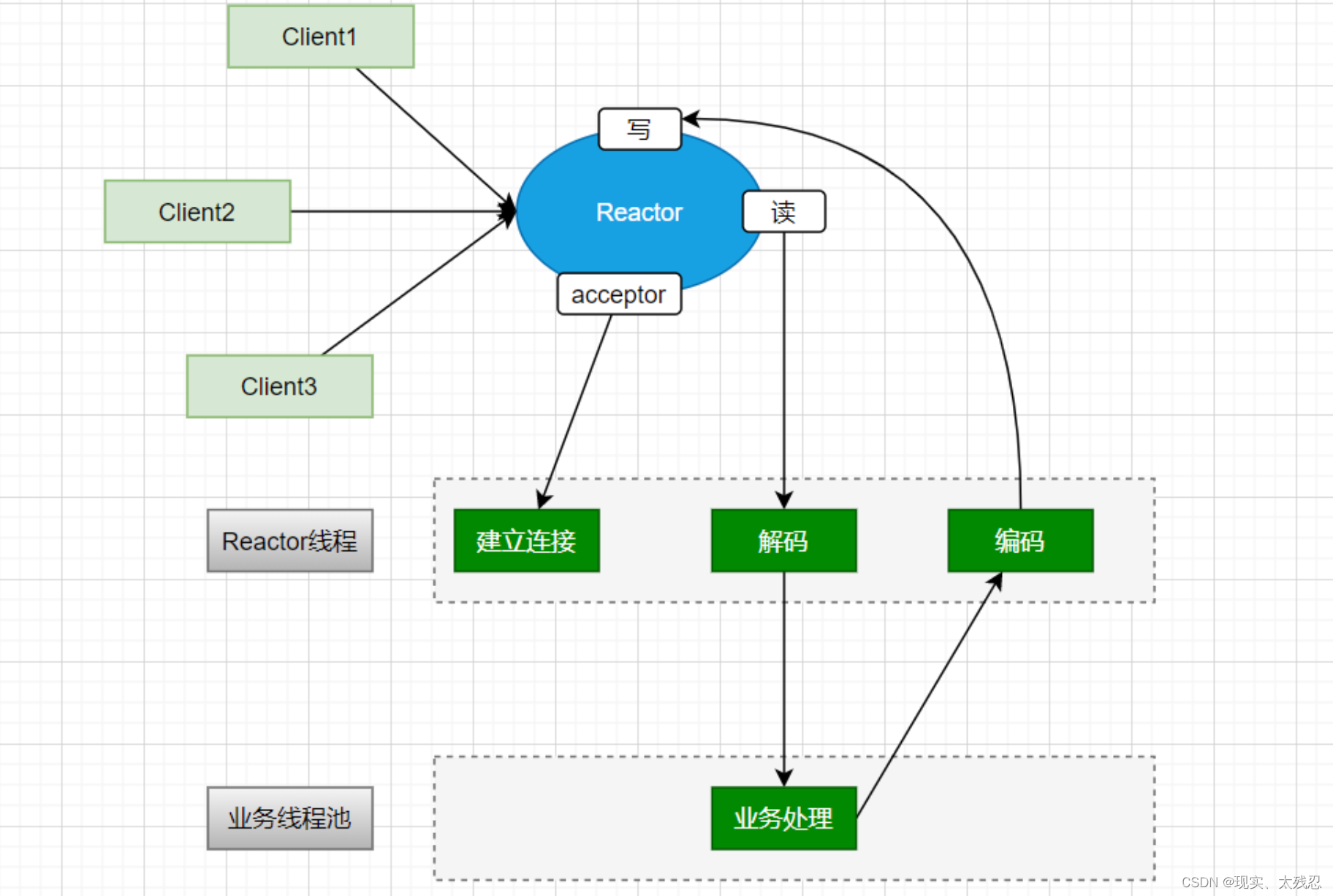
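To make the single-threaded model concrete, here is a minimal sketch that uses only the JDK's java.nio package, not Netty itself. One thread owns one Selector and services several channels. `java.nio.channels.Pipe` stands in for client sockets so the example runs without a network, and the names `SingleThreadReactorSketch` and `drain` are invented for illustration.

```java
import java.io.IOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

class SingleThreadReactorSketch {
    /** Reads one message from each pipe, using a single thread and a single Selector. */
    static List<String> drain(List<Pipe> pipes) throws IOException {
        List<String> messages = new ArrayList<>();
        try (Selector selector = Selector.open()) {
            for (Pipe pipe : pipes) {
                pipe.source().configureBlocking(false); // multiplexing requires non-blocking channels
                pipe.source().register(selector, SelectionKey.OP_READ);
            }
            int pending = pipes.size();
            while (pending > 0) {
                selector.select(); // block until at least one channel is readable
                Iterator<SelectionKey> it = selector.selectedKeys().iterator();
                while (it.hasNext()) {
                    SelectionKey key = it.next();
                    it.remove();
                    ByteBuffer buf = ByteBuffer.allocate(64);
                    ((Pipe.SourceChannel) key.channel()).read(buf);
                    buf.flip();
                    messages.add(StandardCharsets.UTF_8.decode(buf).toString());
                    key.cancel(); // this sketch reads each channel only once
                    pending--;
                }
            }
        }
        return messages;
    }
}
```

Because every channel is handled inside the same loop, a slow handler here would stall all other channels, which is exactly the weakness of this model.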

(2) Reactor multithreading model

This model builds on the single-threaded model: a thread pool manages multiple threads, and those threads process the business logic.

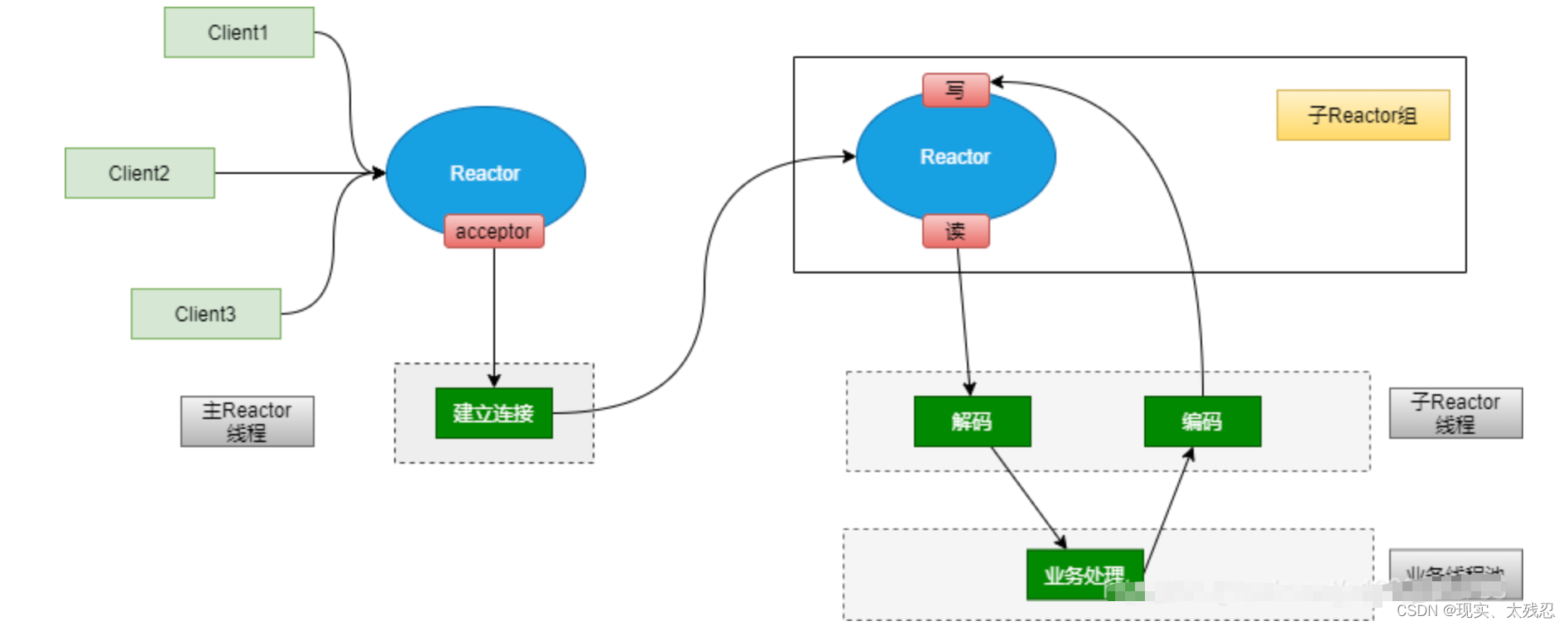

(3) Reactor master-slave multithreading model

This model uses a main Reactor that only handles connections, while multiple sub-Reactors handle I/O reads and writes and then hand the work to a thread pool for business processing. Tomcat is implemented using this model. It is efficient.

3. The core components of Netty

(1) Bootstrap/ServerBootstrap

Bootstrap is used to start a client. ServerBootstrap is used to start a server.

(2) NioEventLoop

Executes event operations from a task queue on its own thread. These operations include connection registration, port binding, and I/O reads and writes. Each NioEventLoop thread handles the events of multiple Channels.

(3) NioEventLoopGroup

Manages the life cycle of its NioEventLoop instances.

(4) Future/ChannelFuture

Used to implement asynchronous communication. After an I/O operation is triggered, a listener can be registered on the returned future. When the I/O operation (for example a read or write) finishes, whether it succeeded or failed, the listener is invoked automatically to perform any follow-up work.
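The listener idea can be illustrated with the JDK's CompletableFuture, which behaves similarly to a ChannelFuture with a listener attached. This is an analogy under stated assumptions, not Netty code: `asyncWrite` is a hypothetical stand-in for an asynchronous write, and in Netty the registration would go through `ChannelFuture.addListener(...)` instead.

```java
import java.util.concurrent.CompletableFuture;

class FutureListenerSketch {
    /** Hypothetical stand-in for an asynchronous write; it completes on another thread. */
    static CompletableFuture<Integer> asyncWrite(byte[] data) {
        return CompletableFuture.supplyAsync(() -> data.length);
    }

    /** Registers a callback that runs once the write has either succeeded or failed. */
    static CompletableFuture<String> writeAndReport(byte[] data) {
        return asyncWrite(data).handle((written, error) ->
                error == null
                        ? "wrote " + written + " bytes"
                        : "failed: " + error.getMessage());
    }
}
```

The caller never blocks while the write is in flight; it only describes what should happen afterwards.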

(5) Channel

The network communication component in Netty, used to perform the actual I/O operations. Its main functions are establishing network connections, managing connection state, configuring connection parameters, and performing network data operations based on asynchronous NIO.

(6) Selector

Manages Channels for I/O multiplexing.

(7) ChannelHandlerContext

Manages the context information of a Channel. Each ChannelHandler corresponds to one ChannelHandlerContext.

(8) ChannelHandler

Intercepts and processes I/O events.

ChannelInboundHandler handles the I/O operations for receiving data.

ChannelOutboundHandler handles the I/O operations for sending data.

(9) ChannelPipeline

Intercepts, processes, and forwards events, based on the interceptor design pattern.

Each Channel corresponds to one ChannelPipeline. The ChannelPipeline maintains a doubly linked list of ChannelHandlerContext objects. Each ChannelHandlerContext corresponds to one ChannelHandler, which intercepts and processes specific Channel events.

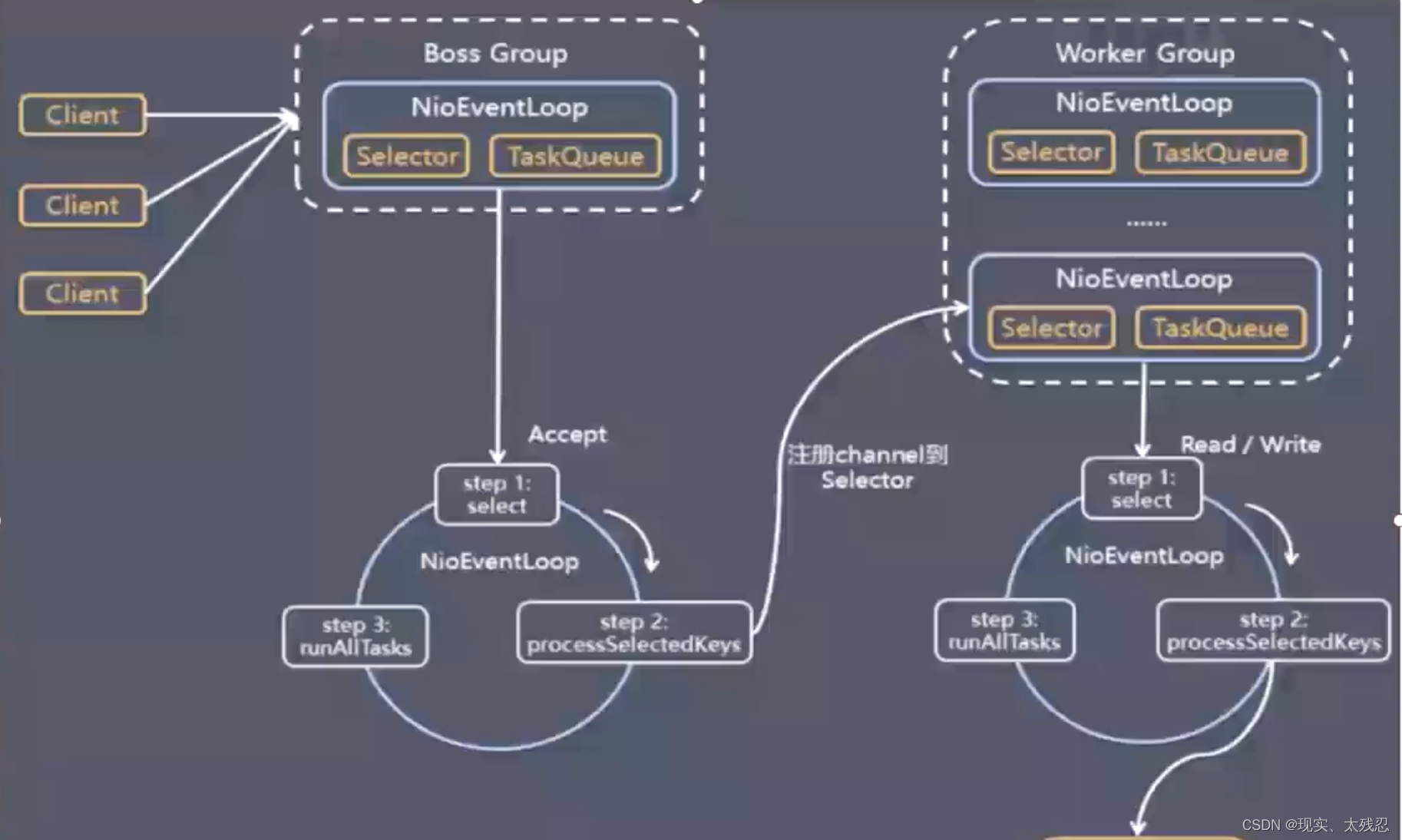
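The doubly linked list of contexts can be sketched in plain Java. This is a simplified model, not Netty's implementation: the real pipeline has head and tail sentinel contexts and each handler decides whether to forward the event, while here the pipeline forwards unconditionally. All names (`MiniPipeline`, `Context`, `fireRead`) are invented for illustration.

```java
import java.util.ArrayList;
import java.util.List;

class MiniPipeline {
    interface Handler { String handle(String msg); }

    /** One node of the doubly linked list; it wraps exactly one handler. */
    static final class Context {
        final String name;
        final boolean inbound;
        final Handler handler;
        Context prev, next;

        Context(String name, boolean inbound, Handler handler) {
            this.name = name;
            this.inbound = inbound;
            this.handler = handler;
        }
    }

    private Context head, tail;
    final List<String> trace = new ArrayList<>(); // records which handlers ran, in order

    MiniPipeline addLast(String name, boolean inbound, Handler handler) {
        Context ctx = new Context(name, inbound, handler);
        if (tail == null) {
            head = tail = ctx;
        } else {
            tail.next = ctx;
            ctx.prev = tail;
            tail = ctx;
        }
        return this;
    }

    /** Inbound events (reads) travel head -> tail through inbound handlers only. */
    String fireRead(String msg) {
        for (Context c = head; c != null; c = c.next) {
            if (c.inbound) { trace.add(c.name); msg = c.handler.handle(msg); }
        }
        return msg;
    }

    /** Outbound events (writes) travel tail -> head through outbound handlers only. */
    String write(String msg) {
        for (Context c = tail; c != null; c = c.prev) {
            if (!c.inbound) { trace.add(c.name); msg = c.handler.handle(msg); }
        }
        return msg;
    }
}
```

Walking `next` for reads and `prev` for writes is why the list needs links in both directions.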

4. The operating principle of Netty

Server workflow:

1. When the server starts, it binds a local port and initializes a NioServerSocketChannel.

2. The NioServerSocketChannel is registered with the Selector of a NioEventLoop in the Boss NioEventLoopGroup.

The server side contains one Boss NioEventLoopGroup and one Worker NioEventLoopGroup.

The Boss NioEventLoopGroup accepts client connections, and the Worker NioEventLoopGroup handles network reads and writes.

A NioEventLoopGroup is an event loop group that contains multiple NioEventLoop event loops. Each NioEventLoop contains a Selector and an event loop thread.

Each loop iteration in the Boss NioEventLoopGroup performs these tasks:

- Poll for accept events.

- Process each accept event and register the resulting NioSocketChannel with the Selector of a NioEventLoop in the Worker NioEventLoopGroup.

- Process the tasks in the task queue (runAllTasks). These include tasks that the user submits by calling eventloop.execute or schedule, and tasks that other threads submit to the event loop.

Each loop iteration in the Worker NioEventLoopGroup performs these tasks:

- Poll for read and write events.

- Handle I/O events: when a NioSocketChannel becomes readable or writable, call back (trigger) the ChannelHandler to process it.

- Process the tasks in the task queue, i.e. runAllTasks.
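The loop described above can be sketched as a small plain-Java model. It is not Netty's NioEventLoop: the `ioEvents` queue is a stand-in for keys that a Selector would report as ready, and the names `MiniEventLoop`, `simulateIo`, and `runOnce` are assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Queue;
import java.util.concurrent.ConcurrentLinkedQueue;

class MiniEventLoop {
    private final Queue<Runnable> taskQueue = new ConcurrentLinkedQueue<>(); // may be filled by any thread
    private final Queue<String> ioEvents = new ConcurrentLinkedQueue<>();    // stand-in for selector-ready keys
    final List<String> log = new ArrayList<>();                              // touched only by the loop thread

    /** Called by user code or other threads to hand work to the loop. */
    void execute(Runnable task) {
        taskQueue.add(task);
    }

    /** Test hook that pretends a channel became readable or writable. */
    void simulateIo(String event) {
        ioEvents.add(event);
    }

    /** One iteration: poll I/O events, process them, then drain the task queue. */
    void runOnce() {
        String event;
        while ((event = ioEvents.poll()) != null) {
            log.add("io:" + event); // a real loop would invoke the ChannelHandler here
        }
        runAllTasks();
    }

    private void runAllTasks() {
        Runnable task;
        while ((task = taskQueue.poll()) != null) {
            task.run();
        }
    }
}
```

A real event loop calls the equivalent of `runOnce` repeatedly on its own thread, so I/O handling and queued tasks never run concurrently with each other.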

5. How does Netty solve TCP sticky packets and packet splitting

Netty solves this with a frame decoder added to the channel pipeline. There are four kinds of decoders:

- Fixed-length decoder FixedLengthFrameDecoder: every application-layer packet has the same fixed length.

- Line-based decoder LineBasedFrameDecoder: application-layer packets are separated by a newline character.

- Delimiter-based decoder DelimiterBasedFrameDecoder: application-layer packets are separated by a custom delimiter.

- Length-field decoder LengthFieldBasedFrameDecoder: the receiver splits the stream according to the length carried in each application-layer packet. (commonly used)

A decoder is installed with a call such as ch.pipeline().addLast(new LengthFieldBasedFrameDecoder(maxFrameLength, lengthFieldOffset, lengthFieldLength)); the constructor requires these length-field parameters, so it cannot be called without arguments.
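To show what a length-field decoder has to do, here is a minimal plain-Java sketch of length-prefixed framing. It is not Netty's LengthFieldBasedFrameDecoder; it assumes a 4-byte big-endian length header followed by a UTF-8 payload, and the names `LengthPrefixedFramer`, `encode`, and `feed` are invented for illustration.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

class LengthPrefixedFramer {
    // Bytes received but not yet consumed, kept in read mode between calls.
    private ByteBuffer pending = ByteBuffer.allocate(0);

    /** Encodes one message as a 4-byte big-endian length followed by the UTF-8 payload. */
    static byte[] encode(String message) {
        byte[] body = message.getBytes(StandardCharsets.UTF_8);
        return ByteBuffer.allocate(4 + body.length).putInt(body.length).put(body).array();
    }

    /** Accepts an arbitrary chunk from the stream and returns every complete message now available. */
    List<String> feed(byte[] chunk) {
        ByteBuffer merged = ByteBuffer.allocate(pending.remaining() + chunk.length);
        merged.put(pending).put(chunk).flip();
        List<String> frames = new ArrayList<>();
        while (merged.remaining() >= 4) {
            merged.mark();
            int length = merged.getInt();
            if (merged.remaining() < length) { // split packet: the body has not fully arrived
                merged.reset();                // rewind to the header and wait for more bytes
                break;
            }
            byte[] body = new byte[length];
            merged.get(body);
            frames.add(new String(body, StandardCharsets.UTF_8));
        }
        pending = merged; // leftover bytes are carried over to the next call
        return frames;
    }
}
```

The loop handles sticky packets (several messages in one chunk), and the carried-over `pending` buffer handles split packets (one message spread over several chunks).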

6. Why is Netty's performance so high

(1) Network model (I/O multiplexing model)

With I/O multiplexing, one thread handles connection requests and multiple threads handle I/O requests, so Netty can serve more requests than blocking I/O (BIO). Requesting data and processing data are asynchronous. On Linux, the underlying mechanism is select, poll, or epoll.

(2) Data zero copy

Netty reads from and writes to sockets through off-heap direct memory. The operating system can use direct memory as it is, so the byte buffer does not need a second copy between the JVM heap and native memory. Netty also provides a composite buffer object (CompositeByteBuf), which avoids the performance cost of the traditional approach of merging buffers by memory copy.

Netty transfers files with the transferTo method, which achieves zero copy.
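The transferTo call mentioned here is the JDK's `FileChannel.transferTo`. The sketch below shows it on its own with a file as the target, using only the JDK; `TransferToSketch` and `copy` are names invented for illustration. Whether the operating system really avoids intermediate copies depends on the platform and the channel types involved.

```java
import java.io.IOException;
import java.nio.channels.FileChannel;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

class TransferToSketch {
    /** Copies source to target with FileChannel.transferTo and returns the number of bytes moved. */
    static long copy(Path source, Path target) throws IOException {
        try (FileChannel in = FileChannel.open(source, StandardOpenOption.READ);
             FileChannel out = FileChannel.open(target, StandardOpenOption.CREATE,
                     StandardOpenOption.WRITE, StandardOpenOption.TRUNCATE_EXISTING)) {
            long position = 0;
            long size = in.size();
            while (position < size) {
                // transferTo may move fewer bytes than requested, so loop until everything is sent.
                position += in.transferTo(position, size - position, out);
            }
            return position;
        }
    }
}
```

The data never passes through a byte array in application code, which is the point of the technique.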

(3) Memory reuse mechanism

Netty uses off-heap direct memory and reuses buffers, so this memory does not have to be reclaimed by the JVM garbage collector.
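The reuse idea can be sketched with a very small pool of direct ByteBuffers. This is only an illustration of the principle: Netty's own pooled allocator is far more elaborate and relies on reference counting, and the class `DirectBufferPool` below is invented for this example.

```java
import java.nio.ByteBuffer;
import java.util.ArrayDeque;
import java.util.Deque;

class DirectBufferPool {
    private final Deque<ByteBuffer> free = new ArrayDeque<>();
    private final int bufferSize;

    DirectBufferPool(int bufferSize) {
        this.bufferSize = bufferSize;
    }

    /** Hands out a recycled buffer when one is available, otherwise allocates off-heap memory. */
    synchronized ByteBuffer acquire() {
        ByteBuffer buf = free.pollFirst();
        return buf != null ? buf : ByteBuffer.allocateDirect(bufferSize);
    }

    /** Resets the buffer and returns it to the pool instead of leaving it to the garbage collector. */
    synchronized void release(ByteBuffer buf) {
        buf.clear();
        free.addFirst(buf);
    }
}
```

After a buffer has been released, the next `acquire` returns the same instance, so steady-state traffic causes no new allocations.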

(4) Lock-free design

Netty internally adopts a serial lock-free design for I/O operations to avoid performance degradation caused by multi-thread competition for CPU and resource locking.
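A rough way to picture the serial design: if every update to a piece of state runs on the same single thread, the state needs no lock. The sketch below uses a JDK single-thread executor to play the role of one event loop; it is an analogy, not Netty code, and `SerialCounter` is an invented name.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

class SerialCounter {
    // A single-thread executor plays the role of one event loop.
    private final ExecutorService loop = Executors.newSingleThreadExecutor();
    private long count; // plain field: no lock and no atomic, because only the loop thread touches it

    /** May be called from any thread; the actual update is queued and runs on the loop thread. */
    void increment() {
        loop.execute(() -> count++);
    }

    /** Reads the value on the loop thread, after all previously queued increments have run. */
    long get() throws Exception {
        return loop.submit(() -> count).get();
    }

    void shutdown() {
        loop.shutdown();
    }
}
```

Callers on many threads contend only on the executor's task queue, never on the counter itself.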

(5) High-performance serialization framework

Netty supports serializing data with Google's Protobuf.

(6) Use of the FastThreadLocal class

Netty uses its own FastThreadLocal class instead of the JDK's ThreadLocal class.

7. Using Netty

1. Add the dependency

    <dependency>
        <groupId>io.netty</groupId>
        <artifactId>netty-all</artifactId>
        <version>4.0.36.Final</version>
    </dependency>

2. Write the server code (boilerplate)

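    import io.netty.bootstrap.ServerBootstrap;
    import io.netty.channel.ChannelFuture;
    import io.netty.channel.ChannelInitializer;
    import io.netty.channel.ChannelOption;
    import io.netty.channel.EventLoopGroup;
    import io.netty.channel.nio.NioEventLoopGroup;
    import io.netty.channel.socket.SocketChannel;
    import io.netty.channel.socket.nio.NioServerSocketChannel;

    public class Server {
        private final int port;

        public Server(int port) {
            this.port = port;
        }

        public void run() {
            EventLoopGroup bossGroup = new NioEventLoopGroup();   // accepts client connections on the server side
            EventLoopGroup workerGroup = new NioEventLoopGroup(); // performs network communication (reads and writes)
            try {
                ServerBootstrap bootstrap = new ServerBootstrap(); // helper class for configuring the server channel
                bootstrap.group(bossGroup, workerGroup)            // bind the two thread groups
                        .channel(NioServerSocketChannel.class)     // use the NIO transport
                        .childHandler(new ChannelInitializer<SocketChannel>() { // configure how data is processed
                            @Override
                            protected void initChannel(SocketChannel socketChannel) throws Exception {
                                socketChannel.pipeline().addLast(new ServerHandler());
                            }
                        })
                        /*
                         * About ChannelOption.SO_BACKLOG:
                         * The server-side TCP kernel maintains two queues, called A and B here. When a client
                         * connects, it sends a packet with the SYN flag (first handshake). On receiving the SYN,
                         * the server replies with SYN ACK (second handshake) and the kernel adds the connection
                         * to queue A. When the server receives the client's ACK (third handshake), the kernel
                         * moves the connection from queue A to queue B; the connection is complete and the
                         * application's accept returns. In other words, accept takes connections that have
                         * completed the three-way handshake out of queue B.
                         * The backlog is the combined length of queues A and B. When that combined length
                         * exceeds ChannelOption.SO_BACKLOG, the kernel rejects new connections. (This is the
                         * classic BSD-style behavior; on modern Linux the backlog limits only queue B.)
                         * So if the backlog is too small, accept may not keep up, the queues fill, and new
                         * clients cannot connect. Note that the backlog does not limit the number of
                         * connections the program supports; it affects only connections not yet accepted.
                         */
                        .option(ChannelOption.SO_BACKLOG, 128)          // length of the pending-connection queue
                        // Buffer sizes apply to accepted connections, so they are set as child options;
                        // the server channel itself does not support SO_SNDBUF.
                        .childOption(ChannelOption.SO_SNDBUF, 32 * 1024) // send buffer size
                        .childOption(ChannelOption.SO_RCVBUF, 32 * 1024) // receive buffer size
                        .childOption(ChannelOption.SO_KEEPALIVE, true);  // keep connections alive
                ChannelFuture future = bootstrap.bind(port).sync();
                future.channel().closeFuture().sync(); // block until the server channel is closed
            } catch (Exception e) {
                e.printStackTrace();
            } finally {
                workerGroup.shutdownGracefully();
                bossGroup.shutdownGracefully();
            }
        }

        public static void main(String[] args) {
            new Server(8379).run();
        }
    }

3. Write the handler class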

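    import io.netty.buffer.ByteBuf;
    import io.netty.buffer.Unpooled;
    import io.netty.channel.ChannelHandlerContext;
    import io.netty.channel.ChannelInboundHandlerAdapter;
    import io.netty.util.CharsetUtil;
    import io.netty.util.ReferenceCountUtil;

    // In Netty 4.x, channelRead is declared on ChannelInboundHandlerAdapter, not ChannelHandlerAdapter.
    public class ServerHandler extends ChannelInboundHandlerAdapter {
        @Override
        public void channelRead(ChannelHandlerContext ctx, Object msg) throws Exception {
            ByteBuf buf = (ByteBuf) msg;
            try {
                // Copy the readable bytes out of the buffer and decode the request.
                byte[] data = new byte[buf.readableBytes()];
                buf.readBytes(data);
                String request = new String(data, CharsetUtil.UTF_8);
                System.out.println("Server: " + request);

                // Write the response back to the client.
                String response = "This is the response message";
                ctx.writeAndFlush(Unpooled.copiedBuffer(response, CharsetUtil.UTF_8));
                // .addListener(ChannelFutureListener.CLOSE); // uncomment to close the connection after the write
            } finally {
                ReferenceCountUtil.release(msg); // inbound buffers are reference-counted and must be released
            }
        }

        @Override
        public void exceptionCaught(ChannelHandlerContext ctx, Throwable cause) throws Exception {
            cause.printStackTrace();
            ctx.close();
        }
    }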