Table of contents

Get id, position, tag name and size

Top 10 hot stocks of Sina stock within 1 hour of dynamic rendering page crawling

If you use Selenium to drive the browser to load the webpage, you can directly get the result of JavaScript rendering without worrying about the encryption system used.

The use of Selenium can be seen here

[Python3 web crawler development practice] 7-Dynamic rendering page crawling-1-Selenium use

element selector

To operate on the page, the first thing to do is to select the page element.

The element selection method is as follows

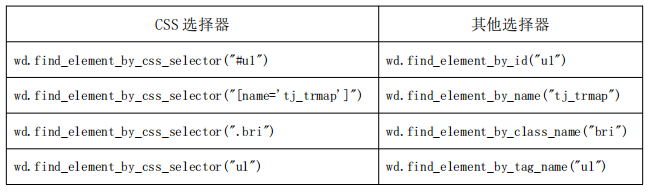

Commonly used CSS selectors compared with other selectors.

Basic use of Selenium

- The Keys () class provides methods for almost all keys on the keyboard. This class can be used to simulate the keys on the keyboard

- expected_conditions() is mainly used to judge the loading of page elements, including whether the element exists, is clickable, etc.

- #WebDriverWait The waiting time set for a specific element

from selenium import webdriver

from selenium.webdriver.common.by import By

from selenium.webdriver.common.keys import Keys

from selenium.webdriver.support import expected_conditions as EC

from selenium.webdriver.support.wait import WebDriverWait

browser = webdriver.Chrome()

try:

browser.get('https://www.baidu.com')

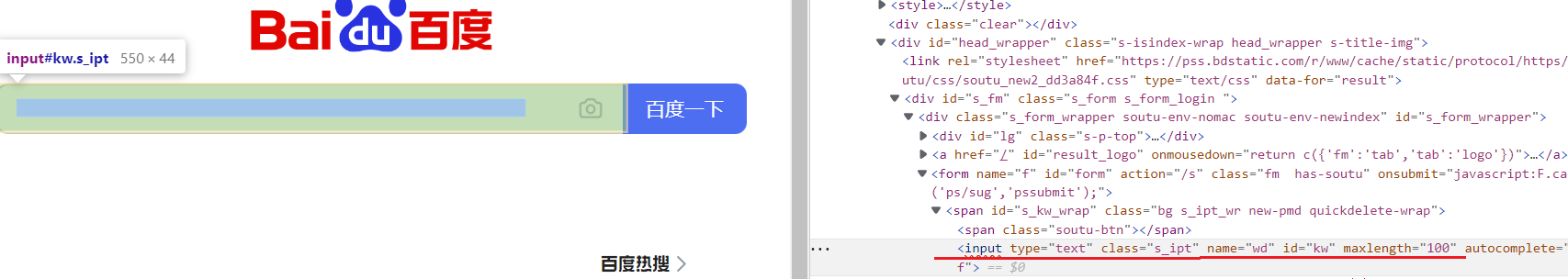

input = browser.find_element(By.ID,'kw') #找到搜索框

input.send_keys('Python')

input.send_keys(Keys.ENTER)

Check that the ID of the web page element search box is 'kw'

wait = WebDriverWait(browser, 10)

#页面元素等待处理。显性等待:

wait.until(EC.presence_of_element_located((By.ID, 'content_left')))

#输出当前的URL、当前的Cookies和网页源代码:

print(browser.current_url)

print(browser.get_cookies())

print(browser.page_source)

finally:

browser.close()execute javascript

For some operations, Selenium API does not provide. The execute_script method can call native JavaScript api

For example, if you pull down the progress bar, it can directly simulate running JavaScript. At this time, you can use execute_script()the method to achieve it. The code is as follows:

from selenium import webdriver

browser = webdriver.Chrome()

browser.get('https://www.zhihu.com/explore')

browser.execute_script('window.scrollTo(0, document.body.scrollHeight)')

browser.execute_script('alert("To Bottom")')Scrolling page function execute_script for python learning

Get node information

As mentioned earlier, page_sourcethe source code of the web page can be obtained through attributes, and then the parsing library (such as regular expressions, Beautiful Soup, pyquery, etc.) can be used to extract information.

However, since Selenium has provided a method to select a node and returns WebElementa type, it also has related methods and properties to directly extract node information, such as attributes, text, and so on. In this way, we can extract information without parsing the source code, which is very convenient.

- get_attribute() method to get the link text of all elements in the list

from selenium import webdriver

from selenium.webdriver import ActionChains

browser = webdriver.Chrome()

url = 'https://www.zhihu.com'

browser.get(url)

print(browser.page_source)

logo = browser.find_element(By.CLASS_NAME,'SignFlowHomepage-logo')

print(logo)

print(logo.get_attribute('class'))After running, the program will drive the browser to open the Zhihu page, then obtain the Zhihu logo node, and finally print out its logo

class.The console output is as follows:

<selenium.webdriver.remote.webelement.WebElement (session="0d0d6e192c2ac469035dbfec6d22fa41", element="e0174d21-607a-4f03-95d2-ad1d9b46016c")> SignFlowHomepage-logo

get text value

Each WebElementnode has textan attribute. You can directly call this attribute to get the text information inside the node. This is equivalent to get_text()the method of Beautiful Soup and the method of pyquery text(). Examples are as follows:

from selenium import webdriver

browser = webdriver.Chrome()

url = 'https://www.zhihu.com/explore'

browser.get(url)

input = browser.find_element(By.CLASS_NAME,'css-1iw0hlv')

print(input.text)

#近期热点

Get id, position, tag name and size

In addition, WebElementthe node has some other attributes, such as idthe attribute can obtain the node id, locationthe attribute can obtain the relative position of the node in the page, the tag_nameattribute can obtain the label name, and sizethe attribute can obtain the size of the node, that is, the width and height. Sometimes these attributes are very useful. Examples are as follows:

Here, first obtain the node "Recent Hotspot" button, and then call its id, location, tag_name, sizeattributes to obtain the corresponding attribute values.

from selenium import webdriver

browser = webdriver.Chrome()

url = 'https://www.zhihu.com/explore'

browser.get(url)

input = browser.find_element(By.CLASS_NAME,'css-1iw0hlv')

print(input.id)

print(input.location)

print(input.tag_name)

print(input.size)Switch Frame

We know that there is a node in a web page called iframe, which is a sub-frame, which is equivalent to a sub-page of a page, and its structure is exactly the same as that of an external web page. After Selenium opens the page, it operates in the parent Frame by default. At this time, if there are sub-Frames in the page, it cannot obtain the nodes in the sub-Frame. At this time, you need to use switch_to.frame()the method to switch the Frame. Examples are as follows:

When the page contains sub-frames, if you want to get the nodes in the sub-frames, you need to call switch_to.frame()the method to switch to the corresponding Frame before proceeding.

import time

from selenium import webdriver

from selenium.common.exceptions import NoSuchElementException

browser = webdriver.Chrome()

url = 'http://www.runoob.com/try/try.php?filename=jqueryui-api-droppable'

browser.get(url)

browser.switch_to.frame('iframeResult')#切换到子Frame里面

try:

logo = browser.find_element(By.CLASS_NAME,'logo')#尝试获取父级Frame里的logo节点

except NoSuchElementException:#找不到的话,就会抛出NoSuchElementException异常

print('NO LOGO')#异常被捕捉之后,就会输出NO LOGO。

browser.switch_to.parent_frame()#重新切换回父级Frame

logo = browser.find_element(By.CLASS_NAME,'logo')#重新获取节点,发现此时可以成功获取了。

print(logo)

print(logo.text)NO LOGO <selenium.webdriver.remote.webelement.WebElement (session="2e67f09261d1add3f8ccc91e625a759c", element="4f17b9bf-7892-498c-8e7a-ba00ded786c8")>

delay waiting

In Selenium, get()the method will end execution after the web page frame is loaded. If it is obtained at this time page_source, it may not be the page that the browser has completely loaded. If some pages have additional Ajax requests, we may not necessarily can be obtained successfully. Therefore, it is necessary to wait for a certain period of time to ensure that the node has been loaded.

There are two ways to wait here: one is implicit waiting and the other is explicit waiting.

implicit wait

When using implicit waiting to execute the test, if Selenium does not find the node in the DOM, it will continue to wait. After the set time is exceeded, an exception that the node cannot be found will be thrown. In other words, when looking for a node and the node does not appear immediately, the implicit wait will wait for a period of time before looking up the DOM, the default time is 0. Examples are as follows:

| 1 2 3 4 5 6 7 |

from selenium import webdriver browser = webdriver.Chrome() browser.implicitly_wait(10) browser.get('https://www.zhihu.com/explore') input = browser.find_element(By.CLASS_NAME,'css-1iw0hlv') print(input) |

Here we implicitly_wait()implement an implicit wait with a method.

explicit wait

The effect of implicit waiting is actually not that good, because we only stipulate a fixed time, and the loading time of the page will be affected by network conditions.

There is also a more appropriate explicit wait method here, which specifies the node to look up, and then specifies a maximum wait time. If the node is loaded within the specified time, the searched node will be returned; if the node is still not loaded within the specified time, a timeout exception will be thrown. Examples are as follows:

|

Here first introduce WebDriverWaitthis object, specify the maximum waiting time, and then call its until()method, passing in the waiting conditions expected_conditions. For example, this condition is passed in here presence_of_element_located, representing the meaning of the node appearing, and its parameter is the location tuple of the node, which is the node search box IDfor .q

The effect that can be achieved in this way is that if the node ( IDthat qis, the search box) is successfully loaded within 10 seconds, the node will be returned; if it has not been loaded for more than 10 seconds, an exception will be thrown.

For the button, you can change the waiting condition, for example, element_to_be_clickableit is clickable, so when looking for the button, look for the button whose CSS selector is .btn-search. If it is clickable within 10 seconds, it is successfully loaded. , returns the button node; if it cannot be clicked for more than 10 seconds, that is, it has not been loaded, an exception will be thrown.

Run the code, and it can be successfully loaded when the network speed is good.

The console output is as follows:

| 1 2 |

<selenium.webdriver.remote.webelement.WebElement(session="5a6a8fb070dbe41e7da0992a05d0e705", element="b6bbd8b4-3048-47b0-8d16-448d7c09ed41")> <selenium.webdriver.remote.webelement.WebElement(session="5a6a8fb070dbe41e7da0992a05d0e705", element="8a256454-c2d8-4782-9b5a-5e9ca726edb1")> |

As you can see, the console successfully outputs two nodes, both of which are WebElementtypes.

If there is a problem with the network and there is no successful loading within 10 seconds, TimeoutExceptionan exception will be thrown

There are actually many waiting conditions, such as judging the content of the title, judging whether a certain text appears in a certain node, etc. Table 7-1 lists all wait conditions.

| waiting condition |

meaning |

|---|---|

|

|

title is something |

|

|

title contains something |

|

|

The node is loaded, and the positioning tuple is passed in, such as |

|

|

The node is visible, pass in the positioning tuple |

|

|

Visible, pass in the node object |

|

|

All nodes are loaded |

|

|

A node text contains a text |

|

|

A node value contains a text |

|

|

load and toggle |

|

|

node is not visible |

|

|

Node is clickable |

|

|

To determine whether a node is still in the DOM, you can determine whether the page has been refreshed |

|

|

The node can be selected, pass the node object |

|

|

The node is optional, and the positioning tuple is passed in |

|

|

Pass in the node object and state, return equal |

|

|

Pass in the positioning tuple and status, return if equal |

|

|

Is there a warning |

forward and backward

There are forward and backward functions when using the browser normally, and Selenium can also complete this operation. It uses back()the method to go back and forward()the method to go forward. Examples are as follows:

Visit 3 pages in a row, then call back()the method to return to the second page, and then call forward()the method to advance to the third page.

import time

from selenium import webdriver

browser = webdriver.Chrome()

browser.get('https://www.baidu.com/')

browser.get('https://www.taobao.com/')

browser.get('https://www.runoob.com/html/html-images.html')

browser.back()

time.sleep(1)

browser.forward()

browser.close()Cookies

Using Selenium, you can also easily operate on Cookies, such as obtaining, adding, and deleting Cookies. Examples are as follows:

from selenium import webdriver

browser = webdriver.Chrome()

browser.get('https://www.zhihu.com/explore')

print(browser.get_cookies())

browser.add_cookie({'name': 'name', 'domain': 'www.zhihu.com', 'value': 'germey'})

print(browser.get_cookies())

browser.delete_all_cookies()

print(browser.get_cookies())我们访问了知乎。加载完成后,浏览器实际上已经生成Cookies了。接着,调用get_cookies()方法获取所有的Cookies。然后,我们添加一个Cookie,这里传入一个字典,有name、domain和value等内容。接下来,再次获取所有的Cookies。可以发现,结果就多了这一项新加的Cookie。最后,调用delete_all_cookies()方法删除所有的Cookies。再重新获取,发现结果就为空了。

选项卡管理

在访问网页的时候,会开启一个个选项卡。在Selenium中,我们也可以对选项卡进行操作。示例如下:

import time

from selenium import webdriver

browser = webdriver.Chrome()

browser.get('https://www.baidu.com')

browser.execute_script('window.open()')#开启一个新的选项卡

print(browser.window_handles)#获取当前开启的所有选项卡,返回的是选项卡的代号列表

browser.switch_to.window(browser.window_handles[1])#切换到第二个选项卡

browser.get('https://www.taobao.com')#在第二个选项卡下打开一个新页面

time.sleep(1)

browser.switch_to.window(browser.window_handles[0])#切换到第1个选项卡

browser.get('https://python.org')

#['CDwindow-737BF5B04D83ACDD158C4C6028E2954F', 'CDwindow-E1BFCF28EA2A51D5D3FCB6D3E6DB0505']

异常处理

在使用Selenium的过程中,难免会遇到一些异常,例如超时、节点未找到等错误,一旦出现此类错误,程序便不会继续运行了。这里我们可以使用try except语句来捕获各种异常。

首先,演示一下节点未找到的异常,示例如下:

from selenium import webdriver

from selenium.common.exceptions import TimeoutException, NoSuchElementException

browser = webdriver.Chrome()

try:

browser.get('https://www.baidu.com')

except TimeoutException:

print('Time Out')

try:

browser.find_element(By.ID,'hello') #查找并不存在的节点

except NoSuchElementException:

print('No Element')#抛出异常

finally:

browser.close()

#No Element

这里我们使用try except来捕获各类异常。比如,我们对find_element_by_id()查找节点的方法捕获NoSuchElementException异常,这样一旦出现这样的错误,就进行异常处理,程序也不会中断了。

try except Exception as e 检查异常

动态渲染页面爬取之新浪股票1小时内10大热门股票

from selenium import webdriver

from selenium.webdriver.common.by import By

try:

brower = webdriver.Chrome() #声明浏览器对象

brower.get('https://finance.sina.com.cn/stock/')

stocks = brower.find_elements(By.XPATH,'//li[@class="xh_hotstock_item"]')#丛任意节点提取属性class="xh_hotstock_item"

for s in stocks:

print("股票名称是:",s.find_element(By.CLASS_NAME,"list02_name").text)

print("股票代码是:", s.get_attribute("data-code"))#Selenium get_attribute()方法获取列表元素信息

print("股票价格是:",s.find_element(By.CLASS_NAME,"list02_diff").text)

print("股票涨幅是:", s.find_element(By.CLASS_NAME,"list02_chg").text)

print("股票网页是:", s.find_element(By.CLASS_NAME,"list02_name").get_attribute("href")) #获取股票的主页地址

except Exception as e:

print(e)

finally:

brower.close()

股票名称是: 包钢股份 股票代码是: 600010 股票价格是: 1.96 股票涨幅是: -1.01% 股票网页是: 包钢股份(600010)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 歌尔股份00000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000000 股票代码是: 002241 股票价格是: 18.99 股票涨幅是: 0.58% 股票网页是: 歌尔股份(002241)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 北方稀土 股票代码是: 600111 股票价格是: 26.74 股票涨幅是: -1.87% 股票网页是: 北方稀土(600111)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 三安光电 股票代码是: 600703 股票价格是: 19.95 股票涨幅是: -2.73% 股票网页是: 三安光电(600703)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 通威股份 股票代码是: 60043800000、 股票价格是: 45.00 股票涨幅是: -1.75% 股票网页是: 通威股份(600438)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 隆基绿能 股票代码是: 601012 股票价格是: 46.65 股票涨幅是: -2.75% 股票网页是: 隆基绿能(601012)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 宁德时代 股票代码是: 300750 股票价格是: 384.07 股票涨幅是: -3.06% 股票网页是: 宁德时代(300750)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 赣锋锂业 股票代码是: 002460 股票价格是: 83.52 股票涨幅是: -2.65% 股票网页是: 赣锋锂业(002460)股票股价,行情,新闻,财报数据_新浪财经_新浪网 股票名称是: 东方财富 股票代码是: 300059 股票价格是: 18.57 股票涨幅是: -2.57% The stock page is: Dongfang Fortune (300059) stock price, quotation, news, financial report data_Sina Finance_Sina.com The stock name is: BOE A The stock code is: 000725 The stock price is: 3.68 The stock increase is: 0.00% The stock page is: BOE A (000725) stock price, market, news, financial report data_Sina Finance_Sina.com