Connecting to an InfluxDB database from Python

Official sample code: https://github.com/influxdata/influxdb-client-python/tree/master/examples

Basic concepts

- Measurement: roughly equivalent to a table in a relational database

- DataPoint: a data point, roughly equivalent to a single row of data

- Time: the timestamp, i.e. the time at which the data point was generated

- Field: a key-value pair that is not indexed

- Tag: a key-value pair that is indexed. Measurement + Tag together identify a subset of the data
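One way to see how these concepts fit together is InfluxDB's line protocol, the text format in which a point is written as `measurement,tags fields timestamp`. The following is a minimal sketch, not the client library's own serializer: `to_line` and its helpers are hypothetical names, and treating a naive datetime as UTC is an assumption made here.

```python
from datetime import datetime, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def _escape(text, chars):
    """Backslash-escape each of the given characters in text."""
    for ch in chars:
        text = text.replace(ch, "\\" + ch)
    return text


def format_field_value(value):
    """Render a field value: bools and floats bare, ints with an `i` suffix, strings quoted."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"
    if isinstance(value, float):
        return repr(value)
    escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def to_line(measurement, fields, time, tags=None):
    """Build one line-protocol line with a nanosecond timestamp."""
    if time.tzinfo is None:
        time = time.replace(tzinfo=timezone.utc)  # assumption: naive means UTC
    delta = time - EPOCH
    nanoseconds = (delta.days * 86400 + delta.seconds) * 10**9 + delta.microseconds * 1000
    head = _escape(measurement, ", ")
    for key, value in sorted((tags or {}).items()):  # tags sorted by key
        head += f",{_escape(key, ',= ')}={_escape(str(value), ',= ')}"
    body = ",".join(f"{_escape(k, ',= ')}={format_field_value(v)}" for k, v in fields.items())
    return f"{head} {body} {nanoseconds}"
```

The tags sit next to the measurement name because together they identify the data, while the fields carry the values and the timestamp closes the line.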

1. Prepare to connect to InfluxDB

First, create a client and check that it can connect to the database:

from datetime import datetime

from influxdb_client import InfluxDBClient

bucket_name = "manager_test_bucket"

influxdb_token = "SkeHprHCgmvtX3LXluMUlgyl5nzwM4zdMtsCuT7BQXsaJlhFPMJizKj0nX3ugr9vRfY7Ak4rIhu-wx-aIqNFig=="

influxdb_org = "manager"

client = InfluxDBClient(url="http://localhost:8086", token=influxdb_token, org=influxdb_org)
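Creating the client does not by itself contact the server, so an explicit check can be useful. The sketch below relies on the client's `ping()` method; the wrapper name `can_connect` is hypothetical, and a successful ping does not necessarily prove that the token is valid.

```python
def can_connect(client) -> bool:
    """Return True when the server answers the client's ping, False otherwise."""
    try:
        return bool(client.ping())
    except Exception:
        # Treat any transport error as "not reachable".
        return False


# Usage with the client created above (requires a running InfluxDB server):
# if not can_connect(client):
#     raise SystemExit("Cannot reach InfluxDB at http://localhost:8086")
```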

Then create a new bucket for testing:

buckets_api = client.buckets_api()

created_bucket = buckets_api.create_bucket(bucket_name=bucket_name, org=influxdb_org)
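Running the creation step a second time with the same name is likely to fail because the bucket already exists. A minimal sketch of a guard, assuming the `find_bucket_by_name` method of the buckets API returns `None` when nothing matches; `ensure_bucket` is a hypothetical helper name.

```python
def ensure_bucket(buckets_api, bucket_name, org):
    """Return the existing bucket with this name, or create it if none is found."""
    existing = buckets_api.find_bucket_by_name(bucket_name)
    if existing is not None:
        return existing
    return buckets_api.create_bucket(bucket_name=bucket_name, org=org)


# Usage with the objects created above:
# created_bucket = ensure_bucket(client.buckets_api(), bucket_name, influxdb_org)
```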

2. Add new data

Method 1: Use Point

The usual way to add data is as follows:

from influxdb_client import Point

from datetime import datetime

from influxdb_client.client.write_api import SYNCHRONOUS

add_data1 = Point("measurement_1").field("open", 1.1).field("close", 1.1).time(datetime(2023, 3, 14, 12, 1, 1))

add_data2 = Point("measurement_1").field("open", 1.2).field("close", 1.2).time(datetime(2023, 3, 13, 13, 2, 1))

add_data3 = Point("measurement_1").field("open", 1.3).field("close", 1.3).time(datetime(2023, 3, 12, 14, 3, 1))

write_api = client.write_api(write_options=SYNCHRONOUS)

write_api.write(bucket=bucket_name, record=[add_data1, add_data2, add_data3])

The result is shown in the figure below:

Method 2: Use a dictionary (dict)

write_api = client.write_api(write_options=SYNCHRONOUS)

add_data1_new = {
    "measurement": "measurement_2",
    "fields": {"open": 1.1, "close": 1.1},
    "time": datetime(2023, 3, 15, 12, 1, 1),
}

add_data2_new = {
    "measurement": "measurement_2",
    "fields": {"open": 1.2, "close": 1.2},
    "time": datetime(2023, 3, 14, 12, 1, 1),
}

write_api.write(bucket=bucket_name, org=influxdb_org, record=[add_data1_new, add_data2_new])
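When the data already sits in rows, writing each dictionary by hand gets repetitive. The following sketch builds the same kind of records from `(time, open, close)` tuples; `records_from_rows` is a hypothetical helper, and the row layout is an assumption for illustration.

```python
from datetime import datetime


def records_from_rows(measurement, rows):
    """Turn (time, open, close) tuples into dict records for write_api.write()."""
    return [
        {
            "measurement": measurement,
            "fields": {"open": float(open_), "close": float(close)},
            "time": time,
        }
        for time, open_, close in rows
    ]


rows = [
    (datetime(2023, 3, 15, 12, 1, 1), 1.1, 1.1),
    (datetime(2023, 3, 14, 12, 1, 1), 1.2, 1.2),
]
records = records_from_rows("measurement_2", rows)

# The list can then be passed as the record argument:
# write_api.write(bucket=bucket_name, org=influxdb_org, record=records)
```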

Method 3: Write data with tags (indexed)

add_data1_new = {
    "measurement": "measurement_2",
    "tags": {"stock": "examp_stock"},
    "fields": {"open": 1.1, "close": 1.1},
    "time": datetime(2023, 3, 15, 12, 1, 1),
}

add_data2_new = {
    "measurement": "measurement_2",
    "tags": {"stock": "examp_stock"},
    "fields": {"open": 1.2, "close": 1.2},
    "time": datetime(2023, 3, 14, 12, 1, 1),
}

# This record has no tags.
add_data3_new = {
    "measurement": "measurement_2",
    "fields": {"open": 1.3, "close": 1.3},
    "time": datetime(2023, 3, 13, 12, 1, 1),
}

write_api.write(bucket=bucket_name, org=influxdb_org, record=[add_data1_new, add_data2_new, add_data3_new])

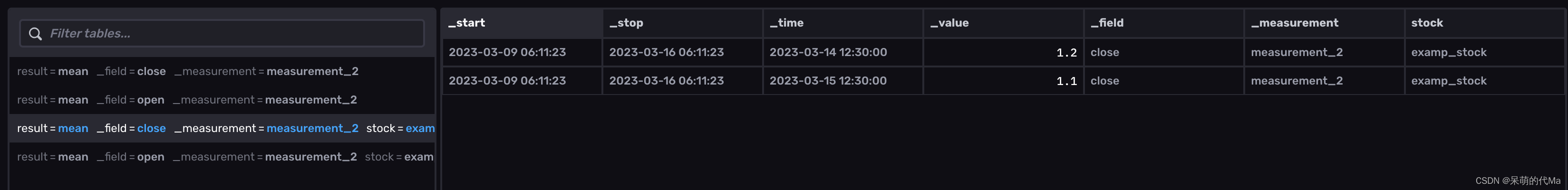

The renderings are as follows:

It can be seen that at this time in the database, use measurement + tagscan uniquely index part of the data, and if not specified tags, then measurementonly part of the data will be indexed
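The grouping described here can be mimicked in plain Python. This is only a sketch of the idea: `series_key` and `group_by_series` are hypothetical names, and the key deliberately ignores the field key, which InfluxDB also takes into account when it defines a series.

```python
from collections import defaultdict


def series_key(record):
    """Identify a record by its measurement and its sorted tag set."""
    tags = record.get("tags") or {}
    return (record["measurement"], tuple(sorted(tags.items())))


def group_by_series(records):
    """Group dict records that share the same measurement and tags."""
    groups = defaultdict(list)
    for record in records:
        groups[series_key(record)].append(record)
    return dict(groups)
```

Applied to the three records written above, the two tagged records fall into one group and the untagged record into another.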

3. Modify data

Modifying data works the same way as adding data. If a point with the same _measurement, tags, and timestamp already exists, the values of the fields you write are overwritten. If not, the data is added as a new point.

new_data = Point("measurement_1").field("open", 11.1).field("high", 21.1).time(datetime(2023, 3, 14, 12, 2, 3))

write_api.write(bucket=bucket_name, record=[new_data])
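The overwrite-or-add rule can be illustrated with a toy in-memory model. This is a sketch of the semantics only, not of how InfluxDB stores data, and `upsert` is a hypothetical name. Note that a write whose timestamp differs from every existing point, even by a second, lands as a new point.

```python
from datetime import datetime


def upsert(store, measurement, fields, time, tags=None):
    """Toy model: merge fields into the point keyed by measurement, tags, and time."""
    key = (measurement, tuple(sorted((tags or {}).items())), time)
    store.setdefault(key, {}).update(fields)
    return key


store = {}
t1 = datetime(2023, 3, 14, 12, 1, 1)
upsert(store, "measurement_1", {"open": 1.1, "close": 1.1}, t1)

# Different timestamp: a second point is added, the first is untouched.
upsert(store, "measurement_1", {"open": 11.1, "high": 21.1}, datetime(2023, 3, 14, 12, 2, 3))

# Same timestamp: "open" is overwritten, "close" is kept.
key = upsert(store, "measurement_1", {"open": 11.1}, t1)
```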

4. Query data

query_api = client.query_api()

query_tables = query_api.query("""
from(bucket: "manager_test_bucket")
    |> range(start: 0, stop: now())
    |> filter(fn: (r) => r["_measurement"] == "measurement_1")
""")

# Each table holds the records of one field.
for _table in query_tables:
    for record in _table.records:
        print(record.values)

We'll get something like this:

{'result': '_result', 'table': 0, '_start': datetime.datetime(1970, 1, 1, 0, 0, tzinfo=datetime.timezone.utc), '_stop': datetime.datetime(2023, 3, 16, 5, 27, 6, 567016, tzinfo=datetime.timezone.utc), '_time': datetime.datetime(2023, 3, 12, 5, 3, 1, tzinfo=datetime.timezone.utc), '_value': 1.3, '_field': 'close', '_measurement': 'measurement_1'}

{'result': '_result', 'table': 0, '_start': datetime.datetime(1970, 1, 1, 0, 0, tzinfo=datetime.timezone.utc), '_stop': datetime.datetime(2023, 3, 16, 5, 27, 6, 567016, tzinfo=datetime.timezone.utc), '_time': datetime.datetime(2023, 3, 13, 13, 2, 1, tzinfo=datetime.timezone.utc), '_value': 1.2, '_field': 'close', '_measurement': 'measurement_1'}

{'result': '_result', 'table': 0, '_start': datetime.datetime(1970, 1, 1, 0, 0, tzinfo=datetime.timezone.utc), '_stop': datetime.datetime(2023, 3, 16, 5, 27, 6, 567016, tzinfo=datetime.timezone.utc), '_time': datetime.datetime(2023, 3, 14, 12, 1, 1, tzinfo=datetime.timezone.utc), '_value': 1.1, '_field': 'close', '_measurement': 'measurement_1'}

{'result': '_result', 'table': 1, '_start': datetime.datetime(1970, 1, 1, 0, 0, tzinfo=datetime.timezone.utc), '_stop': datetime.datetime(2023, 3, 16, 5, 27, 6, 567016, tzinfo=datetime.timezone.utc), '_time': datetime.datetime(2023, 3, 12, 5, 3, 1, tzinfo=datetime.timezone.utc), '_value': 1.3, '_field': 'open', '_measurement': 'measurement_1'}

{'result': '_result', 'table': 1, '_start': datetime.datetime(1970, 1, 1, 0, 0, tzinfo=datetime.timezone.utc), '_stop': datetime.datetime(2023, 3, 16, 5, 27, 6, 567016, tzinfo=datetime.timezone.utc), '_time': datetime.datetime(2023, 3, 13, 13, 2, 1, tzinfo=datetime.timezone.utc), '_value': 1.2, '_field': 'open', '_measurement': 'measurement_1'}

{'result': '_result', 'table': 1, '_start': datetime.datetime(1970, 1, 1, 0, 0, tzinfo=datetime.timezone.utc), '_stop': datetime.datetime(2023, 3, 16, 5, 27, 6, 567016, tzinfo=datetime.timezone.utc), '_time': datetime.datetime(2023, 3, 14, 12, 1, 1, tzinfo=datetime.timezone.utc), '_value': 1.1, '_field': 'open', '_measurement': 'measurement_1'}
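As the output shows, every field comes back as its own record, so `open` and `close` for the same timestamp end up in different tables. A minimal sketch of regrouping them by time on the Python side follows; `pivot_by_time` is a hypothetical helper that takes the `record.values` dictionaries and ignores measurement and tags for simplicity.

```python
from collections import defaultdict


def pivot_by_time(rows):
    """Collect {_field: _value} for each _time, ordered by time."""
    pivoted = defaultdict(dict)
    for row in rows:
        pivoted[row["_time"]][row["_field"]] = row["_value"]
    return dict(sorted(pivoted.items()))


# Usage with the query result above:
# rows = [record.values for table in query_tables for record in table.records]
# for time, fields in pivot_by_time(rows).items():
#     print(time, fields)
```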

5. Delete data

start = "2022-03-13T00:00:00Z"

stop = "2023-05-30T00:00:00Z"

delete_api = client.delete_api()

delete_api.delete(
    start,
    stop,
    predicate='_measurement="measurement_1"',  # deletion predicate
    bucket=bucket_name,
    org=influxdb_org,
)

Note: the delete predicate cannot filter on _time, _field, or _value. No error is reported, but the delete has no effect.
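Because such a predicate fails silently, it can help to build the string with a small guard. The sketch below joins `key="value"` conditions with `AND` and rejects the columns named in the note; `build_predicate` is a hypothetical helper, not part of the client library.

```python
UNSUPPORTED_COLUMNS = {"_time", "_field", "_value"}


def build_predicate(**conditions):
    """Join key="value" conditions with AND, rejecting unsupported columns."""
    if not conditions:
        raise ValueError("at least one condition is required")
    parts = []
    for column, value in conditions.items():
        if column in UNSUPPORTED_COLUMNS:
            raise ValueError(f"{column} cannot be used in a delete predicate")
        if '"' in str(value):
            raise ValueError("values containing double quotes are not handled here")
        parts.append(f'{column}="{value}"')
    return " AND ".join(parts)


# Usage with the delete call above:
# predicate = build_predicate(_measurement="measurement_2", stock="examp_stock")
# delete_api.delete(start, stop, predicate, bucket=bucket_name, org=influxdb_org)
```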

Precautions

Within a measurement, time acts like the primary key of a table. When a new point has exactly the same time and tags as an existing point, the new values replace the old ones and the old values are lost. Take particular care with this in a production environment.
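Since the timestamp is part of what identifies a point, it helps to be explicit about the time zone before writing. The sample query output earlier comes back in UTC, so the sketch below converts a local time to UTC first. The UTC+8 offset and the name `to_utc` are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

LOCAL_TZ = timezone(timedelta(hours=8))  # assumed local offset, adjust as needed


def to_utc(local_time, local_tz=LOCAL_TZ):
    """Attach the local offset to a naive datetime, then convert it to UTC."""
    if local_time.tzinfo is None:
        local_time = local_time.replace(tzinfo=local_tz)
    return local_time.astimezone(timezone.utc)


utc_time = to_utc(datetime(2023, 3, 14, 20, 1, 1))
```

Two writers that agree on UTC will produce the same timestamp for the same moment, which keeps overwrites predictable.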

The type of a field is determined by the first value stored for it, and tag values are always stored as strings. It is recommended to use only floating-point and string types for fields.
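One way to follow this recommendation is to normalize the fields dictionary before every write, so that an integer such as `1` never fixes a field as an integer type by accident. A minimal sketch, with `normalize_fields` as a hypothetical helper name:

```python
def normalize_fields(fields):
    """Convert numeric values to float, keep strings, reject everything else."""
    normalized = {}
    for key, value in fields.items():
        if isinstance(value, bool):  # bool is a subclass of int, so check it first
            raise TypeError(f"field {key!r}: bool is not allowed here")
        if isinstance(value, (int, float)):
            normalized[key] = float(value)
        elif isinstance(value, str):
            normalized[key] = value
        else:
            raise TypeError(f"field {key!r}: unsupported type {type(value).__name__}")
    return normalized
```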