Netty is widely used: for remote communication in Dubbo services, in the shuffle process of Hadoop, for client-server communication in games, and so on. It makes it easy to define private protocol stacks and is a powerful tool for network programming. Netty is an encapsulation of NIO. It does not encapsulate AIO because on Linux, AIO is also implemented on top of epoll and brings no significant performance gain, and because Netty's reactor model is a poor fit for AIO. Netty therefore dropped AIO support. With the rise of the Internet of Things, a large number of devices need to be interconnected, and Netty is well suited to that job.

In the traditional NIO programming model, a Selector must be used to poll each channel for read and write events, the ByteBuffer API is obscure and hard to understand, the code is complicated to maintain, and business logic is hard to decouple from I/O. Netty shields us from NIO's details and adds many performance optimizations. Let's take a look at some of the details in Netty.
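To make the complaint about ByteBuffer concrete, here is a minimal sketch using only the JDK. The class and method names (ByteBufferFlipSketch, writeThenRead) are made up for this illustration. It shows the classic pitfall: a ByteBuffer has a single position shared by reads and writes, so flip() has to be called between writing and reading.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

public class ByteBufferFlipSketch {

    /** Writes text into a buffer, then reads back whatever the buffer reports as remaining. */
    static String writeThenRead(String text, boolean flipBeforeRead) {
        ByteBuffer buffer = ByteBuffer.allocate(64);
        buffer.put(text.getBytes(StandardCharsets.UTF_8)); // position advances past the data

        if (flipBeforeRead) {
            buffer.flip(); // limit = position, position = 0: switch to "read mode"
        }
        // Without flip(), remaining() covers the unwritten tail of the buffer, not the data.
        byte[] out = new byte[buffer.remaining()];
        buffer.get(out);
        return new String(out, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // Flipped: the text that was written comes back.
        System.out.println(writeThenRead("netty", true));           // prints "netty"
        // Not flipped: we read the 59 untouched zero bytes after the data instead.
        System.out.println(writeThenRead("netty", false).length()); // prints 59
    }
}
```

Netty's own ByteBuf keeps separate readerIndex and writerIndex values, so this flip step is not needed there.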

Note: The examples below use Netty 4.1.52.Final. The Maven configuration is as follows:

    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>org.netty</groupId>
        <artifactId>netty-demo</artifactId>
        <version>0.0.1-SNAPSHOT</version>
        <packaging>jar</packaging>

        <name>netty-demo</name>
        <url>http://maven.apache.org</url>

        <properties>
            <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        </properties>

        <dependencies>
            <dependency>
                <groupId>io.netty</groupId>
                <artifactId>netty-all</artifactId>
                <version>4.1.52.Final</version>
            </dependency>
        </dependencies>
    </project>

First, the startup of the client side and the server side

1. Server side

The group method of ServerBootstrap takes two NioEventLoopGroup parameters. They are two thread pools: the first accepts incoming connections and the second processes I/O on the accepted connections. This is the reactor model in practice. Each client connection has a corresponding channel on the server, and the childHandler method binds a chain of handlers to that channel. Here three inbound handlers are bound: a StringDecoder and two custom handlers. ctx.fireChannelRead(msg) passes the message on to the next inbound handler. If this method is not called, propagation of the read event stops and the downstream handlers never see it.

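    import io.netty.bootstrap.ServerBootstrap;
    import io.netty.channel.ChannelHandlerContext;
    import io.netty.channel.ChannelInitializer;
    import io.netty.channel.SimpleChannelInboundHandler;
    import io.netty.channel.nio.NioEventLoopGroup;
    import io.netty.channel.socket.nio.NioServerSocketChannel;
    import io.netty.channel.socket.nio.NioSocketChannel;
    import io.netty.handler.codec.string.StringDecoder;

    public class NettyDemoServer {

        public static void main(String[] args) {
            ServerBootstrap serverBootstrap = new ServerBootstrap();
            // First group accepts connections, second group handles I/O on accepted channels.
            serverBootstrap.group(new NioEventLoopGroup(), new NioEventLoopGroup())
                    .channel(NioServerSocketChannel.class)
                    .childHandler(new ChannelInitializer<NioSocketChannel>() {
                        @Override
                        protected void initChannel(NioSocketChannel ch) throws Exception {
                            // Inbound order: StringDecoder -> Handler1 -> Handler2
                            ch.pipeline().addLast(new StringDecoder())
                                    .addLast(new SimpleChannelInboundHandler<Object>() {
                                        @Override
                                        protected void channelRead0(ChannelHandlerContext ctx, Object msg) throws Exception {
                                            System.out.println("Handler1:" + msg);
                                            ctx.fireChannelRead(msg); // pass the message to the next inbound handler
                                        }
                                    })
                                    .addLast(new SimpleChannelInboundHandler<Object>() {
                                        @Override
                                        protected void channelRead0(ChannelHandlerContext ctx, Object msg) throws Exception {
                                            System.out.println("Handler2:" + msg);
                                            ctx.fireChannelRead(msg);
                                        }
                                    });
                        }
                    })
                    .bind(8080)
                    .addListener(o -> {
                        if (o.isSuccess()) {
                            System.out.println("启动成功"); // "Started successfully"
                        }
                    });
        }
    }

2. Client side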

The client encodes outgoing messages with StringEncoder and writes one message to the server every three seconds.

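    import io.netty.bootstrap.Bootstrap;
    import io.netty.channel.Channel;
    import io.netty.channel.ChannelInitializer;
    import io.netty.channel.nio.NioEventLoopGroup;
    import io.netty.channel.socket.nio.NioSocketChannel;
    import io.netty.handler.codec.string.StringEncoder;

    import java.util.Date;

    public class NettyDemoClient {

        public static void main(String[] args) throws InterruptedException {
            Bootstrap bootstrap = new Bootstrap();
            NioEventLoopGroup group = new NioEventLoopGroup();
            bootstrap.group(group).channel(NioSocketChannel.class).handler(new ChannelInitializer<Channel>() {
                @Override
                protected void initChannel(Channel ch) {
                    ch.pipeline().addLast(new StringEncoder());
                }
            });

            // sync() waits until the connection is established, so the first write is not lost.
            Channel channel = bootstrap.connect("localhost", 8080).sync().channel();

            while (true) {
                // "测试netty" means "testing netty"
                channel.writeAndFlush(new Date().toLocaleString() + ":测试netty");
                Thread.sleep(3000);
            }
        }
    }

The output is as follows: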

    启动成功
    Handler1:2020-9-11 17:23:19:测试netty
    Handler2:2020-9-11 17:23:19:测试netty
    Handler1:2020-9-11 17:23:23:测试netty
    Handler2:2020-9-11 17:23:23:测试netty
    Handler1:2020-9-11 17:23:26:测试netty
    Handler2:2020-9-11 17:23:26:测试netty

Netty shields all the I/O processing details. During business development we only need to define the inbound and outbound handlers that carry the business logic, which decouples business code from communication code.

Second, the data processing pipeline (ChannelPipeline) and the channel handler (ChannelHandler)

1. ChannelHandler

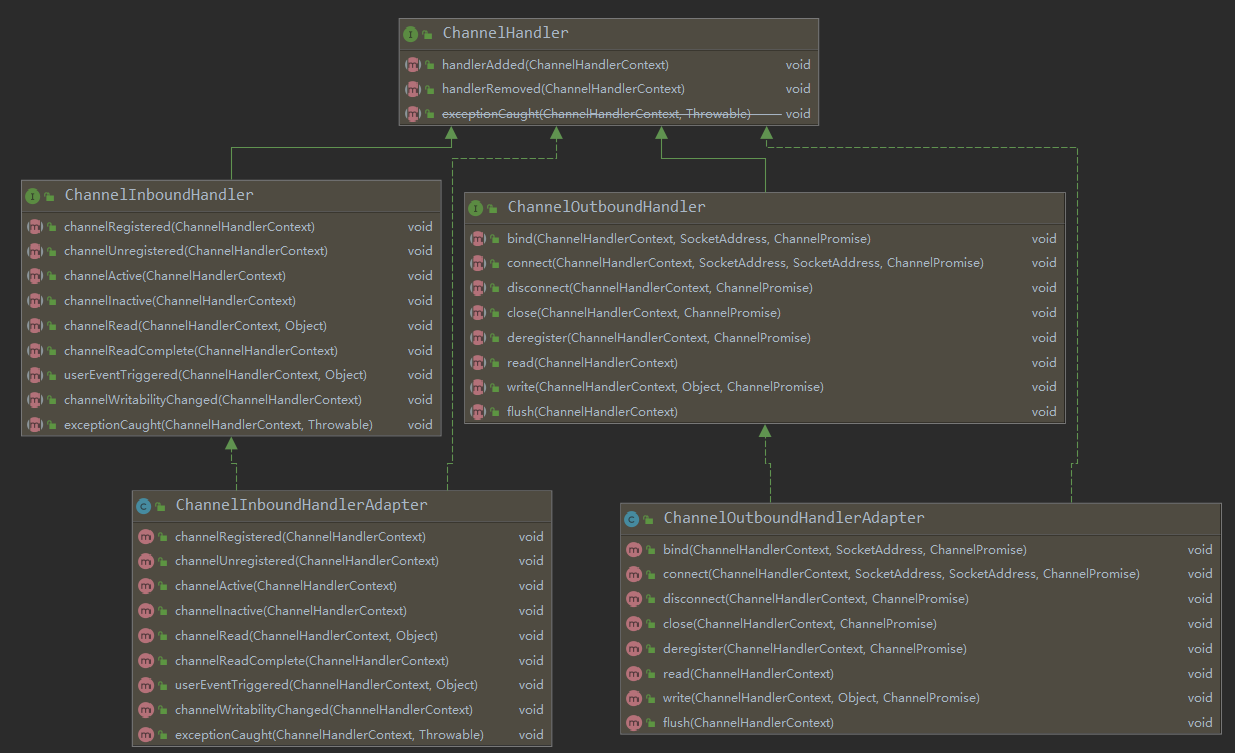

Two major interfaces extend ChannelHandler: ChannelInboundHandler and ChannelOutboundHandler, which represent the inbound and outbound sides respectively. For an inbound handler, channelRead(ChannelHandlerContext ctx, Object msg) is triggered when a message comes in. For an outbound handler, write(ChannelHandlerContext ctx, Object msg, ChannelPromise promise) is triggered when data is written out. ChannelInboundHandlerAdapter and ChannelOutboundHandlerAdapter are general-purpose implementations of the two interfaces. Their methods are very simple: they just pass the read and write events along the pipeline.

2. The event propagation sequence of the pipeline

For inbound handlers, the order of execution is the same as the order in which they were added with addLast, as the output of the earlier example shows. For outbound handlers, the order of execution is the reverse of the order in which they were added. Let's verify this by adding two outbound handlers on the client side.

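    import io.netty.bootstrap.Bootstrap;
    import io.netty.channel.Channel;
    import io.netty.channel.ChannelHandlerContext;
    import io.netty.channel.ChannelInitializer;
    import io.netty.channel.ChannelOutboundHandlerAdapter;
    import io.netty.channel.ChannelPromise;
    import io.netty.channel.nio.NioEventLoopGroup;
    import io.netty.channel.socket.nio.NioSocketChannel;
    import io.netty.handler.codec.string.StringEncoder;

    import java.util.Date;

    public class NettyDemoClient {

        public static void main(String[] args) throws InterruptedException {
            Bootstrap bootstrap = new Bootstrap();
            NioEventLoopGroup group = new NioEventLoopGroup();
            bootstrap.group(group).channel(NioSocketChannel.class).handler(new ChannelInitializer<Channel>() {
                @Override
                protected void initChannel(Channel ch) {
                    // Added in the order: StringEncoder, First, Second
                    ch.pipeline().addLast(new StringEncoder())
                            .addLast(new OutBoundHandlerFirst())
                            .addLast(new OutBoundHandlerSecond());
                }
            });

            // sync() waits until the connection is established, so the first write is not lost.
            Channel channel = bootstrap.connect("localhost", 8080).sync().channel();

            while (true) {
                // "测试netty" means "testing netty"
                channel.writeAndFlush(new Date().toLocaleString() + ":测试netty");
                Thread.sleep(3000);
            }
        }

        public static class OutBoundHandlerFirst extends ChannelOutboundHandlerAdapter {
            @Override
            public void write(ChannelHandlerContext ctx, Object msg, ChannelPromise promise) throws Exception {
                System.out.println("OutBoundHandlerFirst");
                super.write(ctx, msg, promise); // forward to the previous outbound handler
            }
        }

        public static class OutBoundHandlerSecond extends ChannelOutboundHandlerAdapter {
            @Override
            public void write(ChannelHandlerContext ctx, Object msg, ChannelPromise promise) throws Exception {
                System.out.println("OutBoundHandlerSecond");
                super.write(ctx, msg, promise);
            }
        }
    }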

The printed log is as follows:

    OutBoundHandlerSecond
    OutBoundHandlerFirst
    OutBoundHandlerSecond
    OutBoundHandlerFirst
    OutBoundHandlerSecond
    OutBoundHandlerFirst
    OutBoundHandlerSecond
    OutBoundHandlerFirst
    OutBoundHandlerSecond
    OutBoundHandlerFirst

This verifies that outbound handlers execute in the reverse of the order in which they were added with addLast.

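Why does this happen? The pipeline actually maintains a doubly linked list, and the answer is in AbstractChannelHandlerContext: the findContextInbound method moves to the next node, while the findContextOutbound method moves to the previous node. (The excerpt is shown in the simplified form found in earlier 4.1.x releases. Later releases select handlers with an execution mask instead of the inbound and outbound flags, but they walk the list in the same directions.)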
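The effect of walking one list in two directions can be reproduced with a toy model in plain Java. This is only a sketch of the idea, not Netty's implementation: ToyPipelineSketch, Node, fireRead and write are names invented for this illustration, and unlike real handlers, every node here forwards the event automatically.

```java
import java.util.ArrayList;
import java.util.List;

public class ToyPipelineSketch {

    /** One node of the doubly linked list; the flag marks which direction it handles. */
    static final class Node {
        final String name;
        final boolean inbound;
        Node prev;
        Node next;

        Node(String name, boolean inbound) {
            this.name = name;
            this.inbound = inbound;
        }
    }

    // Sentinel nodes at both ends so that each walk has a fixed stopping point.
    private final Node head = new Node("head", false);
    private final Node tail = new Node("tail", true);

    ToyPipelineSketch() {
        head.next = tail;
        tail.prev = head;
    }

    /** Same idea as addLast: link the new node in just before the tail sentinel. */
    ToyPipelineSketch addLast(String name, boolean inbound) {
        Node node = new Node(name, inbound);
        Node last = tail.prev;
        node.prev = last;
        node.next = tail;
        last.next = node;
        tail.prev = node;
        return this;
    }

    /** Inbound events start at the head and follow the next pointers. */
    List<String> fireRead() {
        List<String> visited = new ArrayList<>();
        for (Node n = head.next; n != tail; n = n.next) {
            if (n.inbound) {
                visited.add(n.name);
            }
        }
        return visited;
    }

    /** Outbound events start at the tail and follow the prev pointers. */
    List<String> write() {
        List<String> visited = new ArrayList<>();
        for (Node n = tail.prev; n != head; n = n.prev) {
            if (!n.inbound) {
                visited.add(n.name);
            }
        }
        return visited;
    }

    public static void main(String[] args) {
        ToyPipelineSketch pipeline = new ToyPipelineSketch()
                .addLast("in1", true)
                .addLast("out1", false)
                .addLast("in2", true)
                .addLast("out2", false);
        System.out.println(pipeline.fireRead()); // prints [in1, in2]
        System.out.println(pipeline.write());    // prints [out2, out1]
    }
}
```

The corresponding methods from Netty's AbstractChannelHandlerContext follow.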

    private AbstractChannelHandlerContext findContextInbound() {
        AbstractChannelHandlerContext ctx = this;
        do {
            ctx = ctx.next;
        } while (!ctx.inbound);
        return ctx;
    }

    private AbstractChannelHandlerContext findContextOutbound() {
        AbstractChannelHandlerContext ctx = this;
        do {
            ctx = ctx.prev;
        } while (!ctx.outbound);
        return ctx;
    }

Third, the life cycle of the processor

When the connection is established: handlerAdded -> channelRegistered -> channelActive -> channelRead -> channelReadComplete

When the connection is closed: channelInactive -> channelUnregistered -> handlerRemoved

Note: channelRead is called for each message read from the channel, and channelReadComplete is called once the current read operation has finished, so both fire repeatedly while the connection is active.

Fourth, solving packet splitting and sticking

TCP is a stream protocol, so data is not transmitted in units of our business messages. Several messages may be sent together (sticking), and one complete message may be split into several smaller pieces (splitting). The receiving end therefore has to reassemble the bytes into complete messages before parsing them. So what solutions does Netty provide?

1. Fixed-length unpacker FixedLengthFrameDecoder

Each data packet has the same fixed length, for example 50 bytes. Suitable for simple scenarios.

2. Line unpacker LineBasedFrameDecoder

Splits packets at line endings (\n or \r\n).

3. Delimiter unpacker DelimiterBasedFrameDecoder

This is similar to the line unpacker, except that the delimiter can be customized, for example # or @. When using it, you must make sure that the delimiter never appears inside the message itself.

4. Length field unpacker LengthFieldBasedFrameDecoder

This is the most commonly used unpacker. Almost any custom binary protocol can be unpacked with it, as long as the protocol header defines a length field, for example 4 bytes that store the length of the message body.
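The length field approach can be sketched in plain Java without Netty. The code below is an illustration of the idea only, not the LengthFieldBasedFrameDecoder implementation, and the names LengthPrefixFramingSketch, encode and feed are invented here. It assumes a frame layout of a 4-byte big-endian body length followed by the body, and it strips the length field before handing the message on.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

public class LengthPrefixFramingSketch {

    private byte[] pending = new byte[0]; // bytes received but not yet turned into a message

    /** Encodes a message as [4-byte big-endian body length][body]. */
    static byte[] encode(String message) {
        byte[] body = message.getBytes(StandardCharsets.UTF_8);
        return ByteBuffer.allocate(4 + body.length).putInt(body.length).put(body).array();
    }

    /** Feeds one chunk as it arrived from the socket; returns the messages completed by it. */
    List<String> feed(byte[] chunk) {
        // Append the new chunk to whatever was left over from earlier reads.
        ByteBuffer buf = ByteBuffer.allocate(pending.length + chunk.length);
        buf.put(pending).put(chunk);
        buf.flip();

        List<String> messages = new ArrayList<>();
        while (buf.remaining() >= 4) {
            buf.mark();
            int length = buf.getInt();
            if (length < 0) {
                throw new IllegalStateException("negative frame length: " + length);
            }
            if (buf.remaining() < length) {
                buf.reset(); // body is incomplete: rewind and wait for more bytes
                break;
            }
            byte[] body = new byte[length];
            buf.get(body);
            messages.add(new String(body, StandardCharsets.UTF_8));
        }

        // Keep the unconsumed tail for the next call.
        pending = new byte[buf.remaining()];
        buf.get(pending);
        return messages;
    }

    public static void main(String[] args) {
        byte[] a = encode("hello"); // 9 bytes: 4-byte length + 5-byte body
        byte[] b = encode("netty"); // 9 bytes

        // Simulate TCP: the first read carries all of a plus 3 bytes of b,
        // the second read carries the rest of b.
        byte[] first = new byte[12];
        System.arraycopy(a, 0, first, 0, 9);
        System.arraycopy(b, 0, first, 9, 3);
        byte[] second = Arrays.copyOfRange(b, 3, 9);

        LengthPrefixFramingSketch decoder = new LengthPrefixFramingSketch();
        System.out.println(decoder.feed(first));  // prints [hello]
        System.out.println(decoder.feed(second)); // prints [netty]
    }
}
```

In Netty itself, the same layout would roughly correspond to a LengthFieldBasedFrameDecoder configured with a length field offset of 0, a length field length of 4, and 4 initial bytes to strip, together with a maximum frame length to guard against oversized frames.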