Recently I have been submitting jobs with Spark on YARN. The following is a case I ran into while testing.

-- Submit command

spark-submit --master yarn-cluster --class com.htlx.sage.bigdata.spark.etl.Application --driver-memory 1g --num-executors 2 --executor-memory 2g --executor-cores 2 my-spark-etl_2.11-1.0-SNAPSHOT.jar

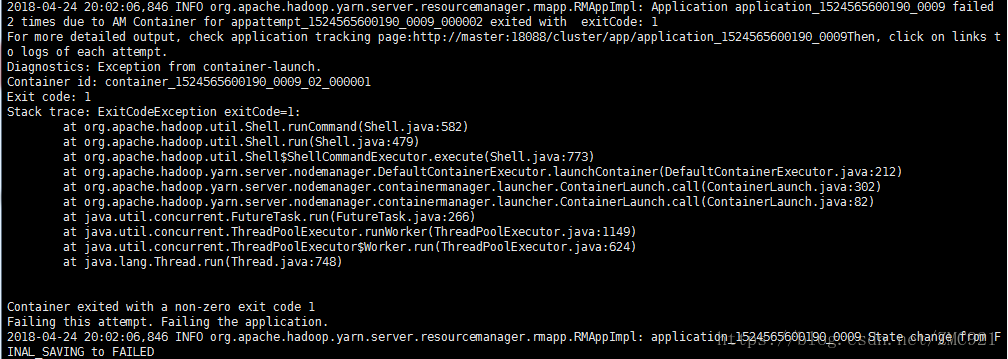

-- The error reported after running:

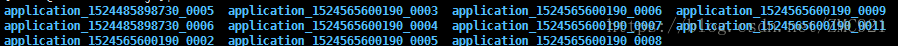

-- This error frustrated me. Searching online suggested it was a configuration problem, but other programs ran on the same cluster without trouble, and I had researched the setup carefully when building the cluster, so I did not believe the cluster itself was at fault. The YARN log showed nothing beyond the error above, so I went to the log of the running program and found:

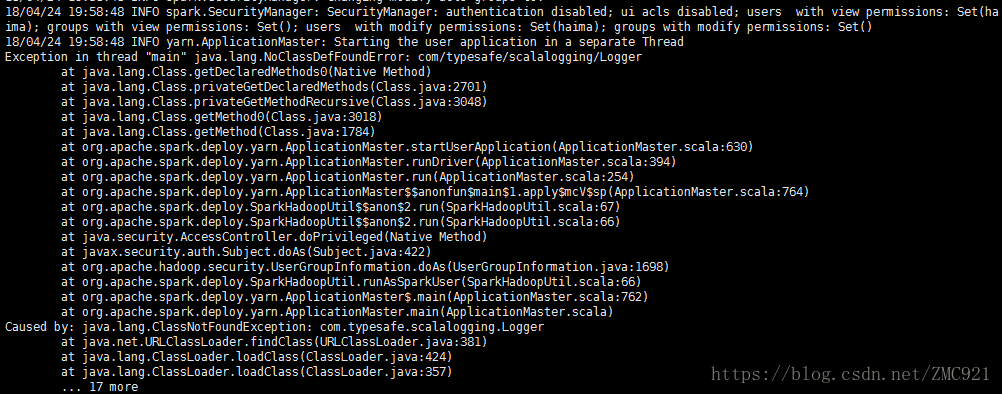

-- The stderr file shows that the class com/typesafe/scalalogging/Logger cannot be found.

-- So the cause was finally clear: the dependency had not been packaged into the jar being run.

-- Solution: I build the Scala project with sbt, and sbt's default packaging does not put dependency libraries into the jar, so I used the sbt-assembly plugin.

-- The sbt-assembly plugin describes its purpose as:

The goal is simple: Create a fat JAR of your project with all of its dependencies.

-- That is, the libraries the project depends on are packaged into the generated jar as well.

-- How the plugin is configured depends on the sbt version. Mine is sbt 1.1.4, so I added the following to project/plugins.sbt:

addSbtPlugin("com.eed3si9n" % "sbt-assembly" % "0.14.6")
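For context, here is a minimal sketch of what the matching build.sbt might contain. The project name and every version number are assumptions for illustration; only the Scala 2.11 suffix and the scala-logging class come from the commands and the error above, so adjust the rest to the real project. Marking Spark as provided keeps it out of the fat jar, since the cluster already supplies it at run time.

```scala
// build.sbt (sketch; names and versions are assumptions, not the original project's)
name := "spark-etl"
version := "1.0-SNAPSHOT"
scalaVersion := "2.11.12"

libraryDependencies ++= Seq(
  // Supplied by the cluster at run time, so kept out of the fat jar
  "org.apache.spark" %% "spark-core" % "2.3.0" % "provided",
  "org.apache.spark" %% "spark-sql"  % "2.3.0" % "provided",
  // Not available on the cluster: the library whose Logger class was missing
  "com.typesafe.scala-logging" %% "scala-logging" % "3.9.0"
)
```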

-- To generate the jars, go to the root directory of the project and run the following commands.

sbt assembly

-- sbt assembly builds the jar that contains the dependencies. Then package the project itself:

sbt package
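By default both jars are written under target/scala-2.11/ (assuming Scala 2.11, as the artifact name suggests). If sbt assembly stops with duplicate-file errors, or a different file name is wanted, settings along these lines in build.sbt may help. This is only a sketch in the sbt-assembly 0.14.x syntax; the jar name simply mirrors the one used in the submit command.

```scala
// build.sbt (sketch, sbt-assembly 0.14.x syntax)

// Name of the fat jar; the default is <name>-assembly-<version>.jar
assemblyJarName in assembly := "spark-etl-assembly-1.0-SNAPSHOT.jar"

// Resolve files that appear in more than one dependency jar
assemblyMergeStrategy in assembly := {
  case PathList("META-INF", "MANIFEST.MF") => MergeStrategy.discard
  case x =>
    // Fall back to the plugin's default strategy for everything else
    val oldStrategy = (assemblyMergeStrategy in assembly).value
    oldStrategy(x)
}
```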

-- Then submit with the following command, passing the assembly jar through --jars:

spark-submit --master yarn-cluster --class com.htlx.sage.bigdata.spark.etl.Application --driver-memory 1g --num-executors 2 --executor-memory 2g --executor-cores 2 --jars spark-etl-assembly-1.0-SNAPSHOT.jar spark-etl_2.11-1.0-SNAPSHOT.jar