Article Directory

- Preface

- 1. Basic network deployment of docker

- 2. Custom bridge for advanced network configuration

- 3. Cross-host network with advanced network configuration (advanced network)

Preface

Earlier articles in this series covered Docker images and registries:

Docker container technology 1: images

Docker container technology 2: enterprise registry management with Harbor

This article starts on Docker networking.

Docker's image handling is widely praised, but networking is still one of its weaker areas.

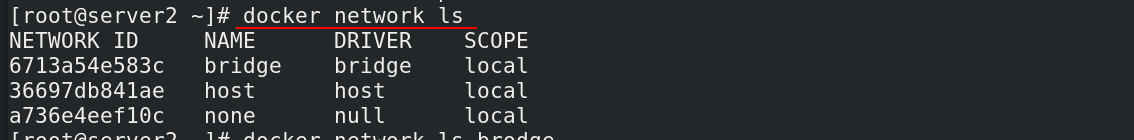


1. Basic network deployment of docker

1.1 docker default network mode

After Docker is installed, three networks are created automatically: bridge, host, and none.

List them with: docker network ls

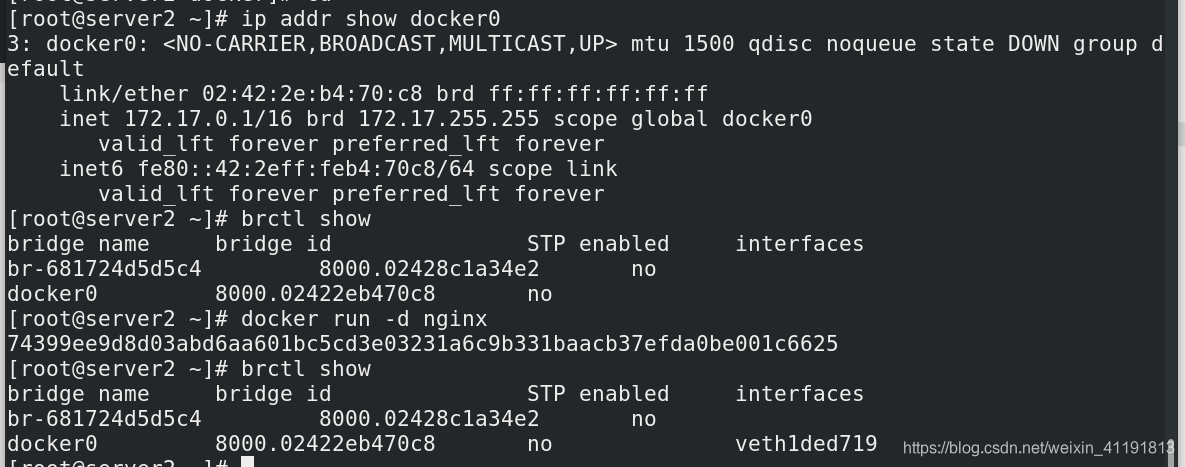

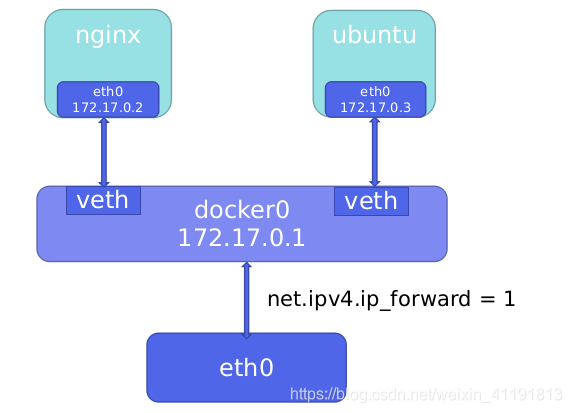

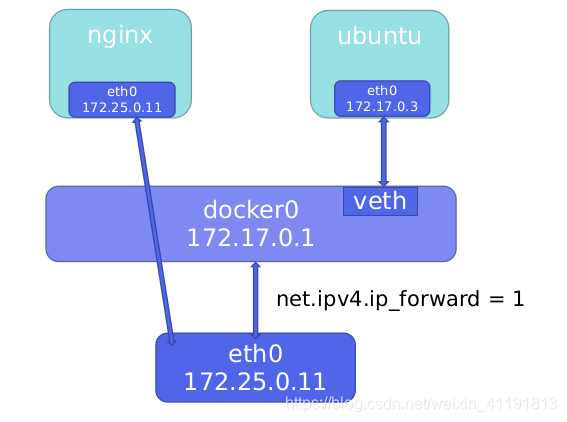

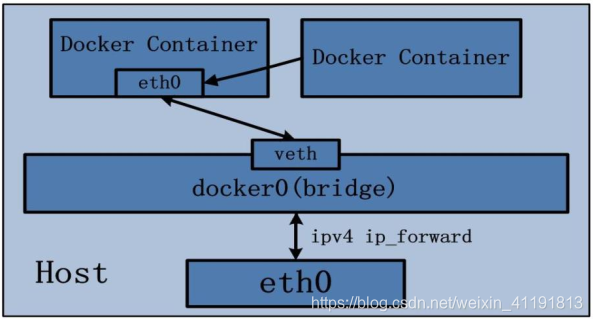

1.2 bridge network mode

When Docker is installed, it creates a Linux bridge named docker0, and each newly created container is attached to this bridge automatically.

yum install -y bridge-utils.x86_64 ## install the brctl command-line tool

ip addr show docker0

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:2e:b4:70:c8 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:2eff:feb4:70c8/64 scope link

valid_lft forever preferred_lft forever

brctl show

bridge name bridge id STP enabled interfaces

br-681724d5d5c4 8000.02428c1a34e2 no

docker0 8000.02422eb470c8 no

docker run -d nginx

74399ee9d8d03abd6aa601bc5cd3e03231a6c9b331baacb37efda0be001c6625

brctl show

bridge name bridge id STP enabled interfaces

br-681724d5d5c4 8000.02428c1a34e2 no

docker0 8000.02422eb470c8 no veth1ded719

In bridge mode the container has no public IP address. Only the host can reach it directly, and it is invisible to external hosts. The container can still reach the external network through the NAT rules on the host.

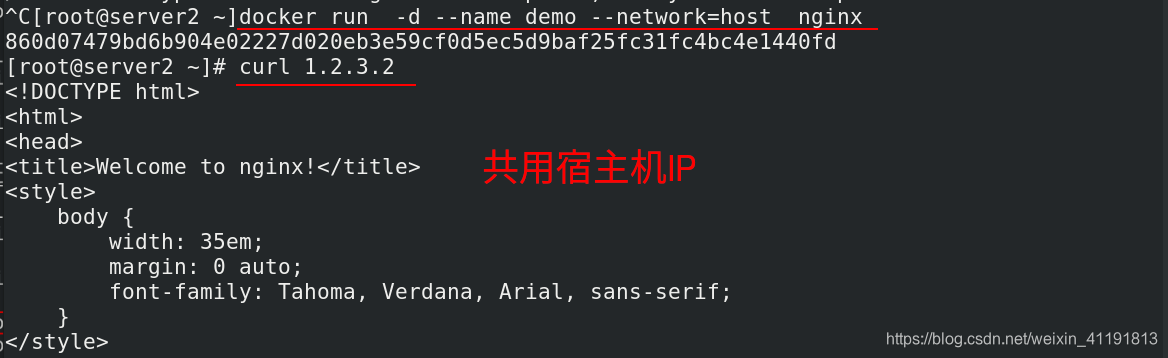

1.3 host network mode

Host network mode must be requested when the container is created, with --network=host

docker run -d --name demo --network=host nginx

b6aaaed51b40a0951f501292a1e65ea7927c752b2bcfc4517e4f41f8b3c5a9ef

brctl show

bridge name bridge id STP enabled interfaces

br-681724d5d5c4 8000.02428c1a34e2 no

docker0 8000.02422eb470c8 no # the container shares the host network stack, so no interface is attached to the bridge

In host mode the container shares the host's network stack. The advantage is that external hosts can communicate with the container directly; the drawback is that the container's network is not isolated from the host.

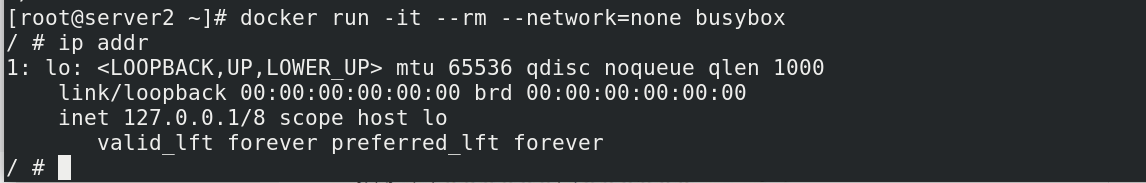

1.4 none mode

In none mode networking is disabled and the container has only the lo interface. Specify it with --network=none when the container is created.

docker run -it --rm --network=none busybox

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

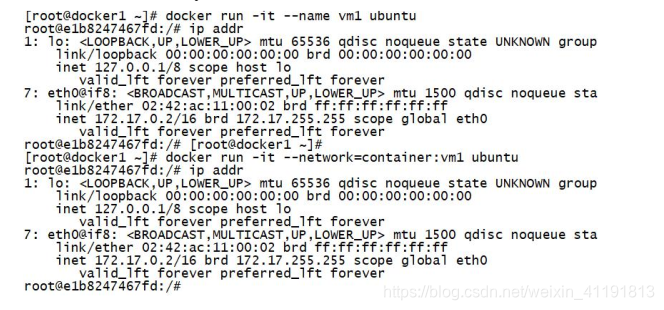

1.5 Container network mode

Container network mode is a special network mode in Docker.

Specify it with --network=container:vm1 when the container is created, where vm1 is the name of a running container.

Containers in this mode share one network stack, so the two containers can communicate quickly and efficiently over localhost.
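
The shared network stack can be tried out with a short sketch like the one below. It assumes the nginx and busybox images are available; the function name shared_stack_demo is made up for this illustration, and the two-second pause is only a guess at how long nginx needs to start.

```shell
# Sketch: a second container joins vm1's network stack and reaches it via localhost.
shared_stack_demo() {
    docker run -d --name vm1 nginx
    sleep 2   # assumption: give nginx a moment to start listening
    # The busybox container has no network stack of its own; it uses vm1's,
    # so "localhost" here is the same localhost that nginx listens on.
    docker run --rm --network container:vm1 busybox wget -qO- http://localhost
    docker rm -f vm1   # clean up
}
```

Nothing runs until shared_stack_demo is called on a Docker host. If the wget call prints the nginx welcome page, the two containers are sharing one stack.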

2. Custom bridge for advanced network configuration

For custom networks, Docker provides three network drivers:

- bridge: similar to the default bridge network mode, with some additional features

- overlay: encapsulates container traffic on top of the existing host network; a natural fit for people from an IT background

- macvlan: works at the link layer, so communication is faster; a natural fit for people from a communications (CT) background

overlay and macvlan are used to create cross-host networks.

A custom network is recommended because it lets you control which containers can communicate with each other, and it automatically resolves container names to IP addresses through DNS.

2.1 Custom bridge

Test how the default bridge reclaims and reassigns IP addresses in order

docker images ## list images

docker ps -a ## make sure no containers exist; remove any that do

docker run -d --name demo webserver ## run demo

docker inspect demo | grep "IPAddress" ## check its IP

"SecondaryIPAddresses": null,

"IPAddress": "172.17.0.2",

"IPAddress": "172.17.0.2",

docker stop demo ## stop demo

docker run -d --name demo2 reg.westos.org/nginx # run demo2

docker inspect demo2 | grep "IPAddress" # check its IP: demo2 gets the .2 that demo just released

"SecondaryIPAddresses": null,

"IPAddress": "172.17.0.2",

"IPAddress": "172.17.0.2",

docker start demo ## restart demo

docker inspect demo | grep "IPAddress" ## .2 is taken, so demo gets the next address, .3

"SecondaryIPAddresses": null,

"IPAddress": "172.17.0.3",

"IPAddress": "172.17.0.3",

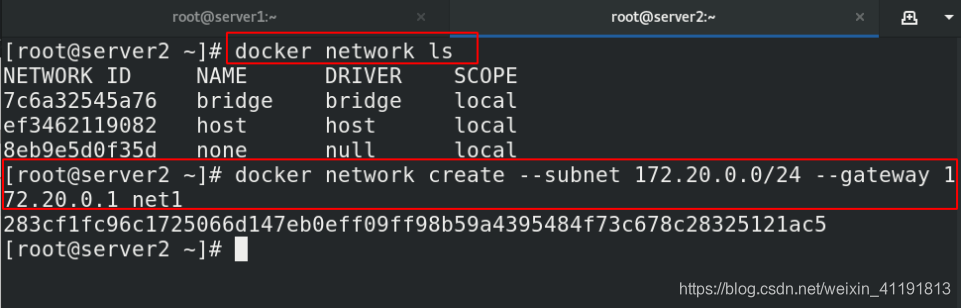

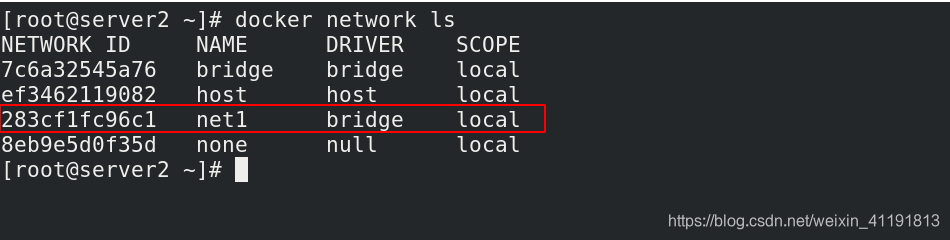
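
The behaviour seen in this test (a stopped container frees its address, and the next container takes the lowest free one) can be reproduced with a toy model. This is only an illustration of the observed pattern, not Docker's actual address-management code; the names allocate, release, in_use and last are invented for the sketch.

```shell
#!/bin/sh
# Toy model of "lowest free address first" on 172.17.0.0/16.
in_use=""   # space-separated host numbers currently taken

# allocate: pick the lowest free host number from 2 upward (.1 is the gateway)
# and store it in $last.
allocate() {
    n=2
    while echo " $in_use " | grep -q " $n "; do
        n=$((n + 1))
    done
    in_use="$in_use $n"
    last=$n
}

# release HOSTNUM: give an address back to the pool.
release() {
    new=""
    for h in $in_use; do
        [ "$h" = "$1" ] || new="$new $h"
    done
    in_use=$new
}

allocate; demo=$last     # demo starts and takes .2
release "$demo"          # demo stops, .2 is freed
allocate; demo2=$last    # demo2 starts and reuses .2
allocate; demo=$last     # demo restarts, .2 is taken, so it gets .3
echo "demo2=172.17.0.$demo2 demo=172.17.0.$demo"
# prints: demo2=172.17.0.2 demo=172.17.0.3
```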

Specify network segment and gateway

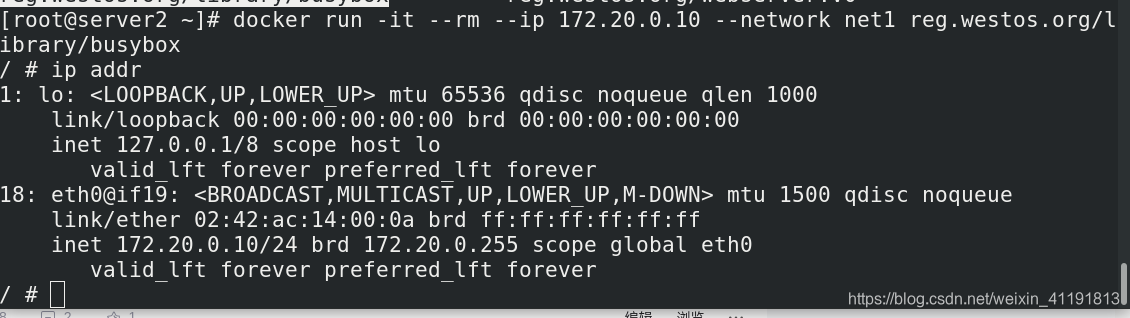

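The --ip option assigns a specific IP address to a container, but only on a custom bridge; the default bridge mode does not support it. Containers on the same bridge can reach each other.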

docker network ls

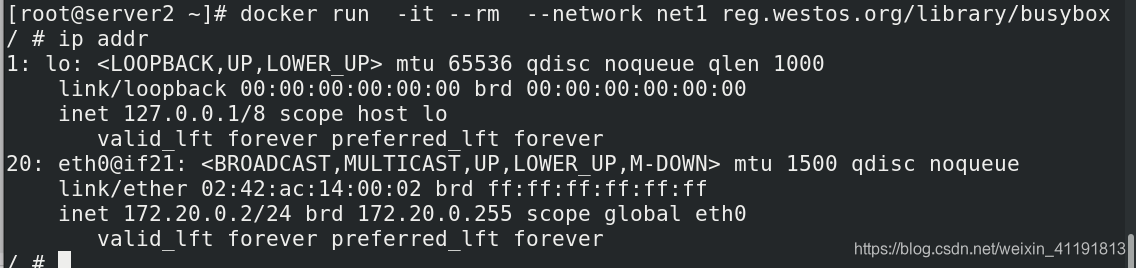

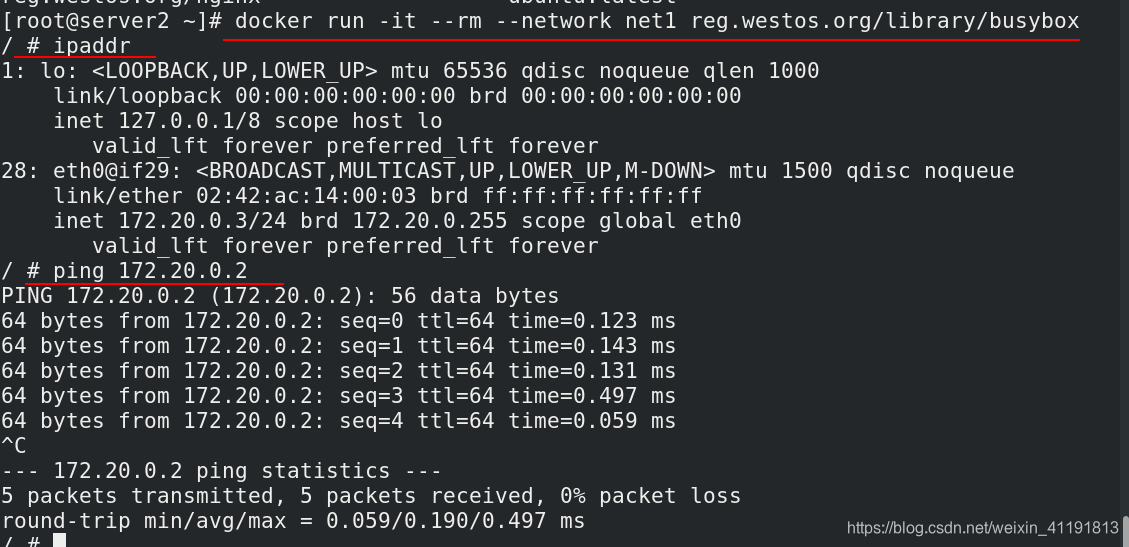

docker network create --subnet 172.20.0.0/24 --gateway 172.20.0.1 net1 ## custom subnet and gateway

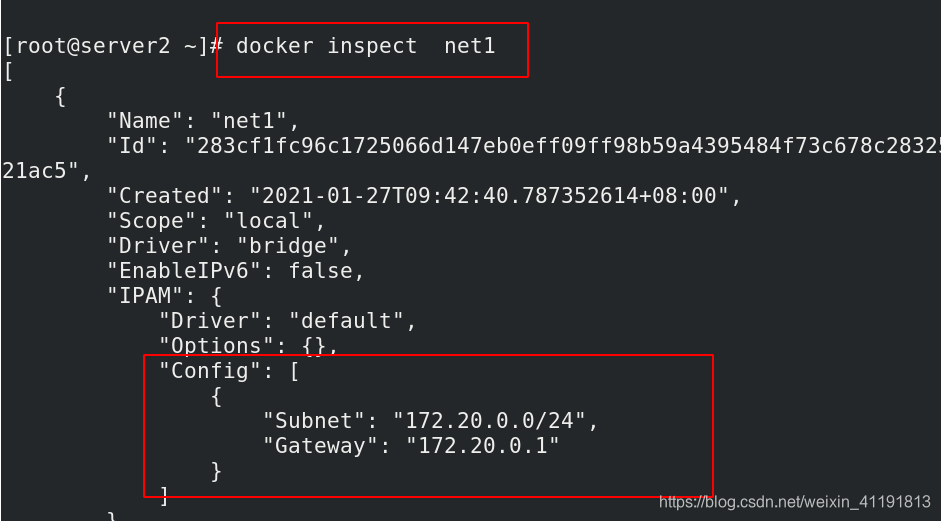

docker inspect net1

ip addr

docker run -it --rm --ip 172.20.0.10 --network net1 busybox

/ # ip addr

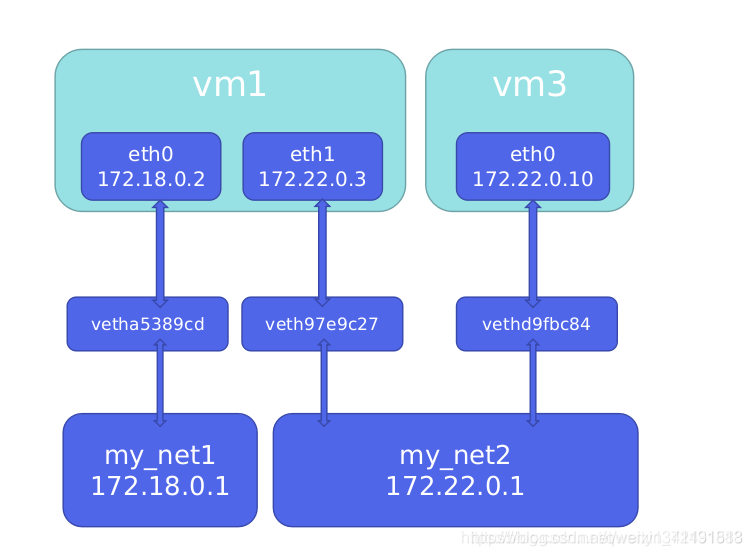

So how can containers on two different bridges communicate?

net1 was created above on the 172.20.0.0/24 subnet, while demo is still on the default 172.17.0.0/16 subnet. The solution is to give demo an additional network interface on net1.

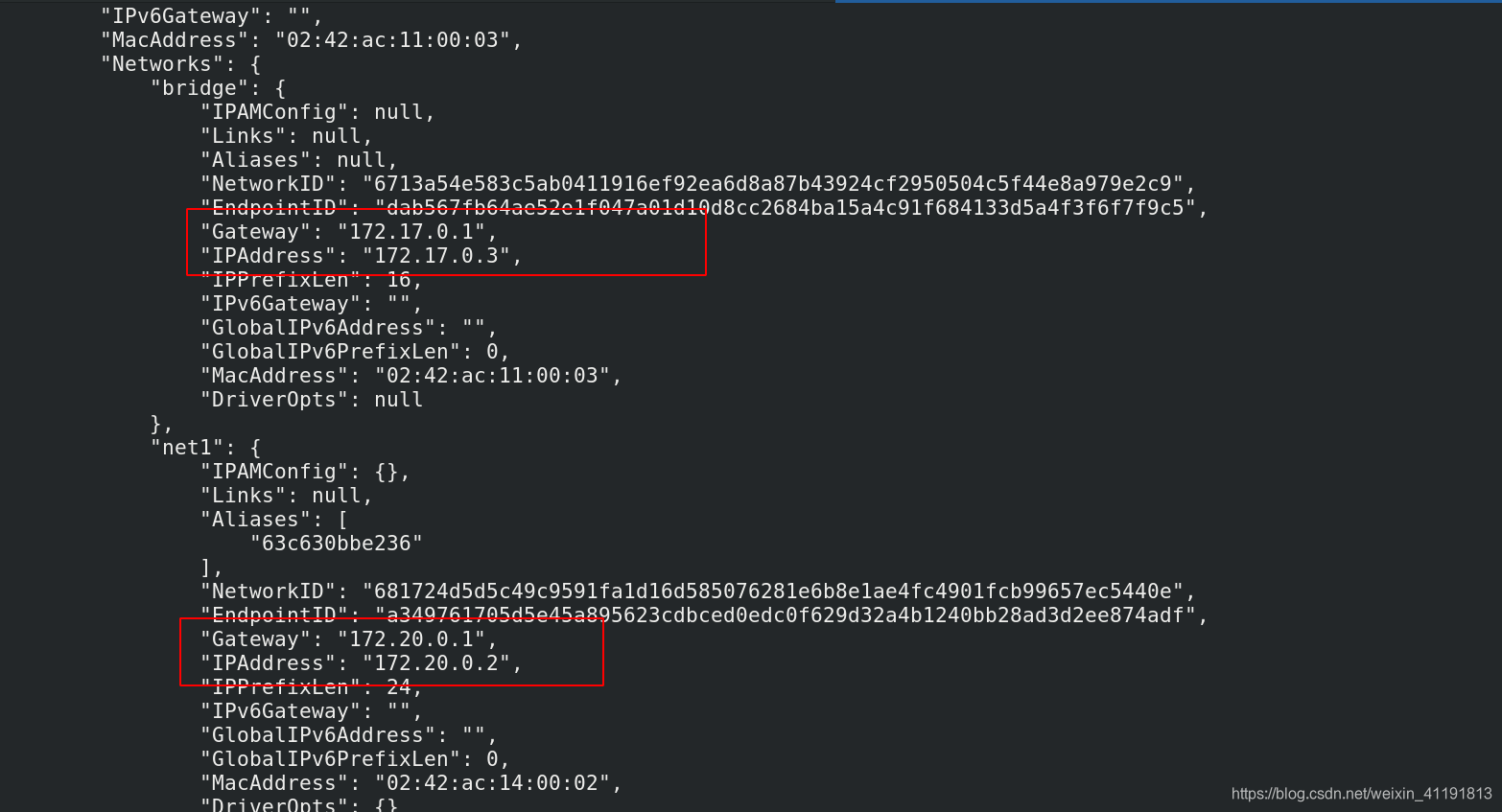

docker network connect net1 demo # use docker network connect to add an interface on net1 to demo

docker inspect demo
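
The full docker inspect output is long. A Go template can narrow it down to the part that matters here; the sketch below prints one "network=address" pair per attached network. The helper name show_networks is made up for this example.

```shell
# show_networks CONTAINER: print "network=ip" for every network the container is on.
show_networks() {
    docker inspect -f \
        '{{range $name, $net := .NetworkSettings.Networks}}{{$name}}={{$net.IPAddress}} {{end}}' \
        "$1"
}
```

After the connect command above, show_networks demo would be expected to list both bridge and net1, each with its own address.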

2.2 Communication between containers

2.2.1 DNS resolution in docker

- Besides IP addresses, containers can also communicate using container names

- Since Docker 1.10, the Docker daemon includes an embedded DNS server

- DNS resolution by container name only works on custom networks

- Use the --name option to set the container name when starting the container

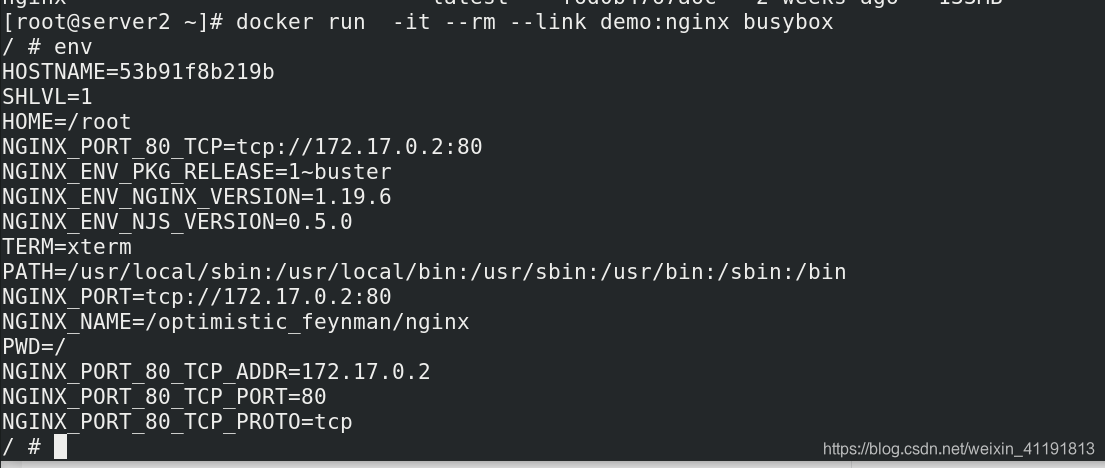

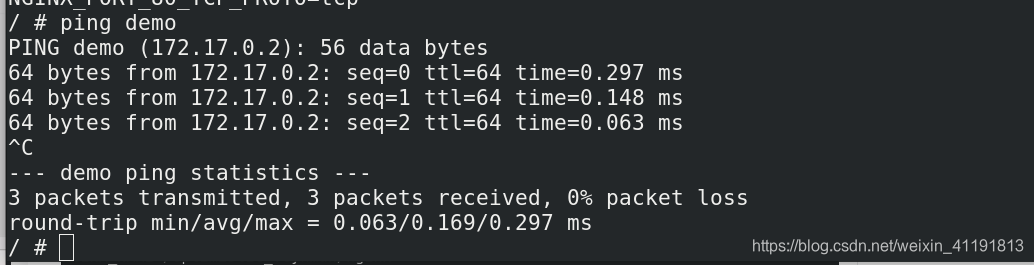

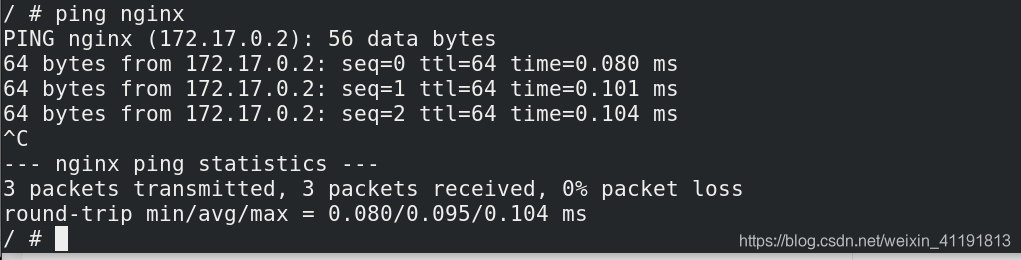
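
A minimal sketch of name-based communication on a custom network follows. The network name my_net, the container name web and the function name dns_demo are all hypothetical, and the nginx and busybox images are assumed to be available.

```shell
# Sketch: resolve a container by name through Docker's embedded DNS.
dns_demo() {
    docker network create my_net                      # custom bridge network
    docker run -d --name web --network my_net nginx   # --name gives the DNS name
    # A second container on the same network can reach the first one as "web".
    docker run --rm --network my_net busybox ping -c 2 web
    docker rm -f web          # clean up
    docker network rm my_net
}
```

Running the same ping from a container on the default bridge would be expected to fail to resolve the name, which matches the third point in the list above.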

2.2.2 link

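--link can be used to link two containers.

Format of --link:

--link <name or id>:alias

name and id are the name and ID of the source container; alias is the alias the source container is given under the link.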

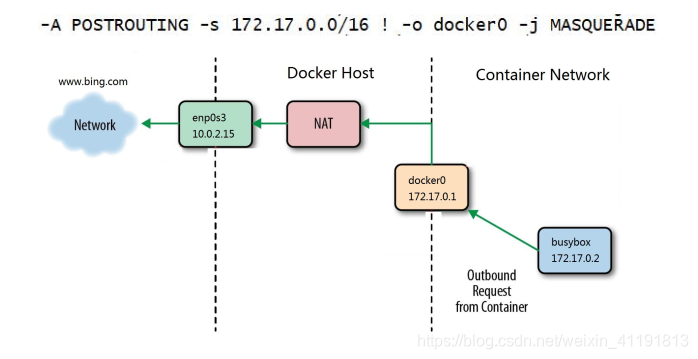
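
As a rough sketch of the format in use: the example below starts a source container named demo and links a second container to it under the alias web. The alias and the function name link_demo are chosen for illustration only. Note that Docker's documentation describes --link as a legacy feature, so custom networks are usually the better choice for new setups.

```shell
# Sketch: link a busybox container to "demo" under the alias "web".
link_demo() {
    docker run -d --name demo nginx
    # Inside the new container, the alias "web" resolves to demo's IP address.
    docker run --rm --link demo:web busybox ping -c 2 web
    docker rm -f demo   # clean up
}
```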

2.3 How the container accesses the external network

A container reaches the external network through iptables SNAT (source NAT) on the host.
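
The rule responsible can be looked up on the Docker host. The sketch below, with an invented helper name, lists the POSTROUTING chain of the nat table and keeps the MASQUERADE entries (MASQUERADE is the form of source NAT Docker installs for its bridges). It needs root privileges.

```shell
# show_masquerade_rules: list the source-NAT rules in the nat table (run as root).
show_masquerade_rules() {
    # On a default install, one of these rules typically covers the
    # docker0 subnet 172.17.0.0/16.
    iptables -t nat -S POSTROUTING | grep MASQUERADE
}
```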

2.4 How to access the container from the external network

2.4.1 Port mapping is required

- Port mapping exposes a container port on a host port

- The -p option specifies the mapped port

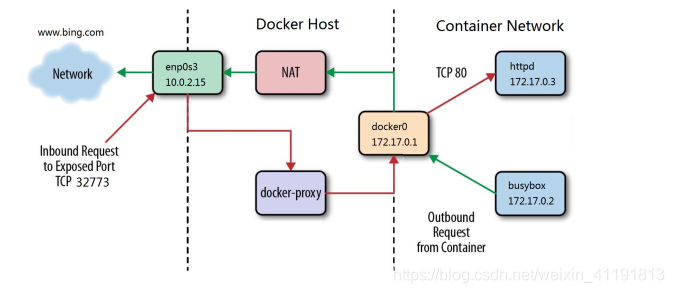
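
A short sketch of port mapping in practice is shown below. Host port 8080, the container name web and the function name port_mapping_demo are assumptions made for the example; curl is assumed to be installed on the host.

```shell
# Sketch: publish container port 80 on host port 8080 and test it.
port_mapping_demo() {
    docker run -d --name web -p 8080:80 nginx    # host 8080 -> container 80
    docker port web                              # show the active mappings
    curl -sI http://localhost:8080 | head -n 1   # request through the mapped port
    docker rm -f web                             # clean up
}
```

From another machine, the same request would go to the Docker host's address on port 8080 instead of localhost.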

2.4.2 docker-proxy and iptables DNAT

Access to a container from the external network relies on docker-proxy and iptables DNAT.

- The host uses iptables DNAT to access its own containers

- Access from external hosts, and access between containers, is implemented by docker-proxy

Communication works as long as either docker-proxy or the DNAT rule exists; together they form a redundant pair. Connections to localhost are forwarded by docker-proxy.

3. Cross-host network with advanced network configuration (advanced network)

3.1 Cross-host network solution

- Docker native overlay and macvlan

- Third-party flannel, weave, calico

How are so many network solutions integrated with Docker?

- libnetwork: Docker's container networking library

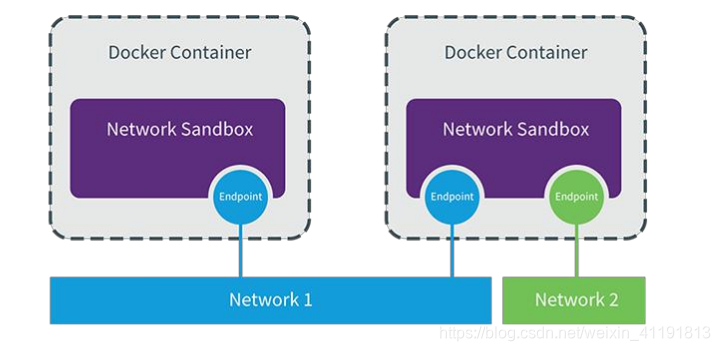

- CNM (Container Network Model): this model abstracts container networking

CNM is divided into three types of components

- Sandbox: container network stack, including container interface, dns, routing table. (namespace)

- Endpoint: The role is to connect the sandbox to the network (veth pair)

- Network: Contains a set of endpoints, endpoints of the same network can communicate.
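
The three components can be spotted in docker inspect output. The sketch below is one way to pull them out with Go templates; the helper name show_cnm_parts is invented, and the mapping of inspect fields to CNM terms is an interpretation rather than something the tooling labels explicitly.

```shell
# show_cnm_parts CONTAINER: print the sandbox, endpoints and network members.
show_cnm_parts() {
    # Sandbox: path of the container's network namespace
    docker inspect -f '{{.NetworkSettings.SandboxKey}}' "$1"
    # Endpoints: one per network the container is attached to
    docker inspect -f \
        '{{range $name, $ep := .NetworkSettings.Networks}}{{$name}} endpoint={{$ep.EndpointID}} {{end}}' \
        "$1"
    # Network: names of the containers attached to the default bridge network
    docker network inspect -f '{{range .Containers}}{{.Name}} {{end}}' bridge
}
```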

3.2 macvlan network solution realization

Introduction

macvlan is a network interface virtualization technology built into the Linux kernel.

It needs no Linux bridge and uses the physical interface directly, so performance is excellent.

Build steps

- Add a network card on each of the two docker hosts and turn on the network card promiscuous mode

- Create a macvlan network on each of the two docker hosts

Macvlan network structure analysis

- No new linux bridge

- The interface of the container is directly connected to the host network card without NAT or port mapping.

[root@server2 ~]# brctl show

bridge name bridge id STP enabled interfaces

br-283cf1fc96c1 8000.02426d0e4f33 no

docker0 8000.024257e101e8 no

macvlan will monopolize the host network card, but you can use vlan subinterfaces to implement multiple macvlan networks.

vlan can divide the physical Layer 2 network into 4094 logical networks, which are isolated from each other. The value of vlan id is 1~4094.
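
A sketch of the sub-interface approach follows. It checks the VLAN ID against the 1~4094 range, creates an 802.1Q sub-interface such as eth1.10 with ip link, and uses it as the macvlan parent. The function names and every value in the usage line are hypothetical, and the commands need root privileges.

```shell
# valid_vlan_id ID: succeed only for IDs in the usable range 1..4094.
valid_vlan_id() {
    case "$1" in
        ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
    esac
    [ "$1" -ge 1 ] && [ "$1" -le 4094 ]
}

# create_vlan_macvlan PARENT VLAN_ID SUBNET GATEWAY NAME
create_vlan_macvlan() {
    valid_vlan_id "$2" || { echo "invalid VLAN ID: $2" >&2; return 1; }
    # Create the VLAN sub-interface (for example eth1.10) and bring it up.
    ip link add link "$1" name "$1.$2" type vlan id "$2"
    ip link set "$1.$2" up
    # Each sub-interface can carry its own macvlan network.
    docker network create -d macvlan --subnet "$3" --gateway "$4" \
        -o parent="$1.$2" "$5"
}
```

For example, create_vlan_macvlan eth1 10 172.22.0.0/24 172.22.0.1 mac_net2 would put a second macvlan network on VLAN 10 of eth1, leaving other VLAN IDs free for further networks.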

Isolation and connectivity between macvlan networks

- The macvlan network is isolated on the second layer, so containers on different macvlan networks cannot communicate.

- The macvlan network can be connected through the gateway on the third layer.

- Docker itself does not impose any restrictions, it can be managed like a traditional vlan network.

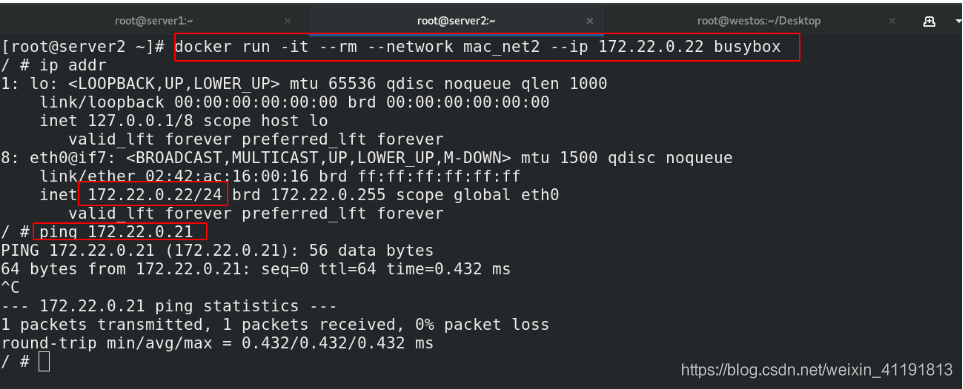

Step screenshot

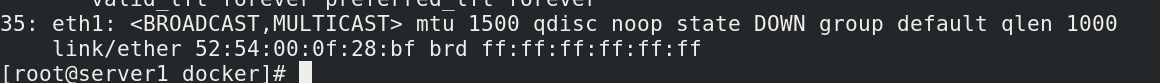

server1 and server2 are added as two network cards

Realize communication between different hosts

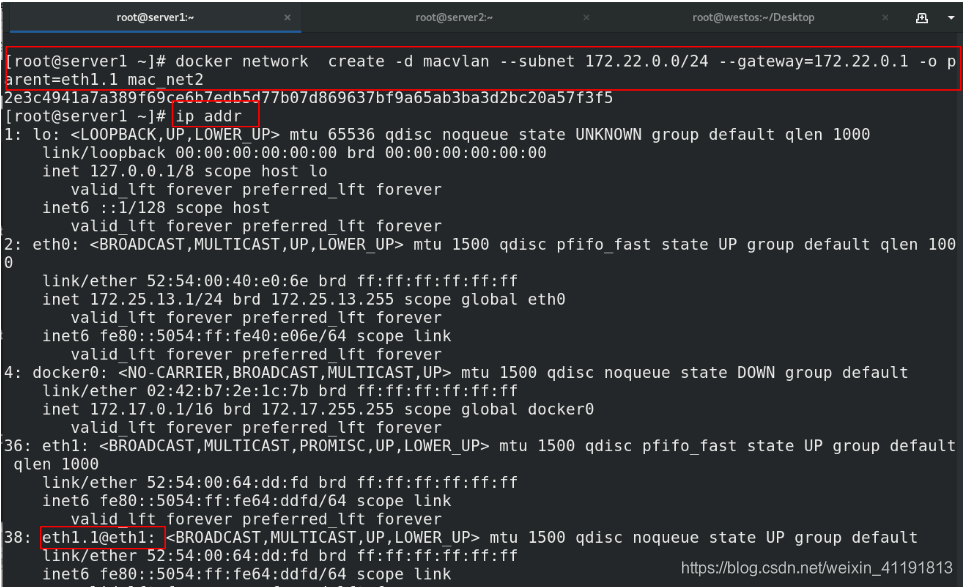

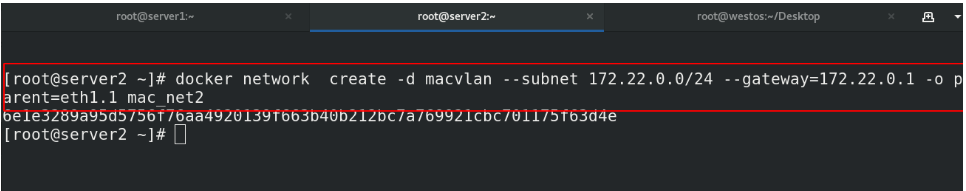

Run the same steps on server1 and server2

ip link set up eth1 ## bring the interface up

ip link set eth1 promisc on ## enable promiscuous mode (PROMISC)

ip addr show eth1

36: eth1: <BROADCAST,MULTICAST,PROMISC,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 52:54:00:64:dd:fd brd ff:ff:ff:ff:ff:ff

inet6 fe80::5054:ff:fe64:ddfd/64 scope link

valid_lft forever preferred_lft forever

docker network create -d macvlan --subnet 172.21.0.0/24 --gateway=172.21.0.1 -o parent=eth1 mac_net1

## uses the eth1 interface as the parent

docker inspect mac_net1 | grep "parent"

"parent": "eth1"

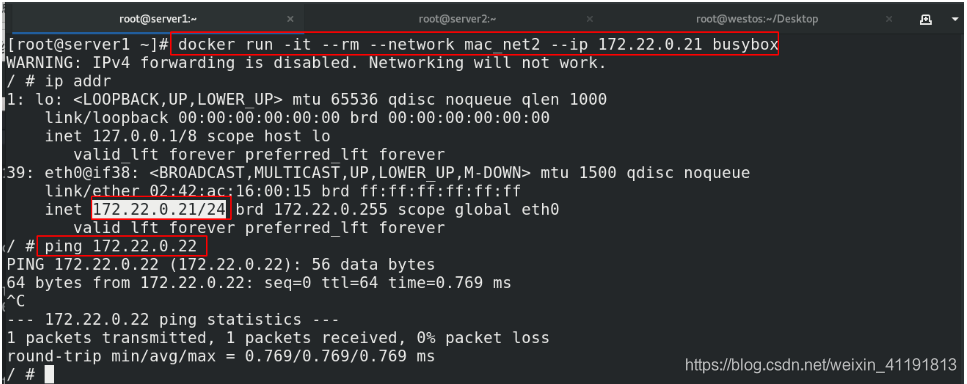

docker run -it --rm --network mac_net1 --ip 172.21.0.11 busybox ## use .11 on server1 and .12 on server2

WARNING: IPv4 forwarding is disabled. Networking will not work.

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

37: eth0@if36: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:15:00:0b brd ff:ff:ff:ff:ff:ff

inet 172.21.0.11/24 brd 172.21.0.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping 172.21.0.12

PING 172.21.0.12 (172.21.0.12): 56 data bytes

64 bytes from 172.21.0.12: seq=0 ttl=64 time=0.375 ms


3.3 Summary of docker network subcommands

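docker network [subcommand] [network name]

connect: connect a container to the specified network

create: create a network

disconnect: disconnect a container from the specified network

inspect: show detailed information about the specified network

ls: list all networks

rm: remove a network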