[k8s Troubleshooting Series] Error: failed to get sandbox container task: no running task found: task xxx not found


Symptoms

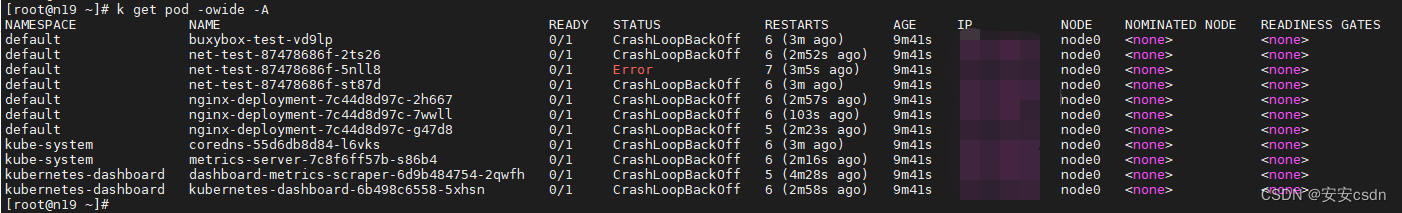

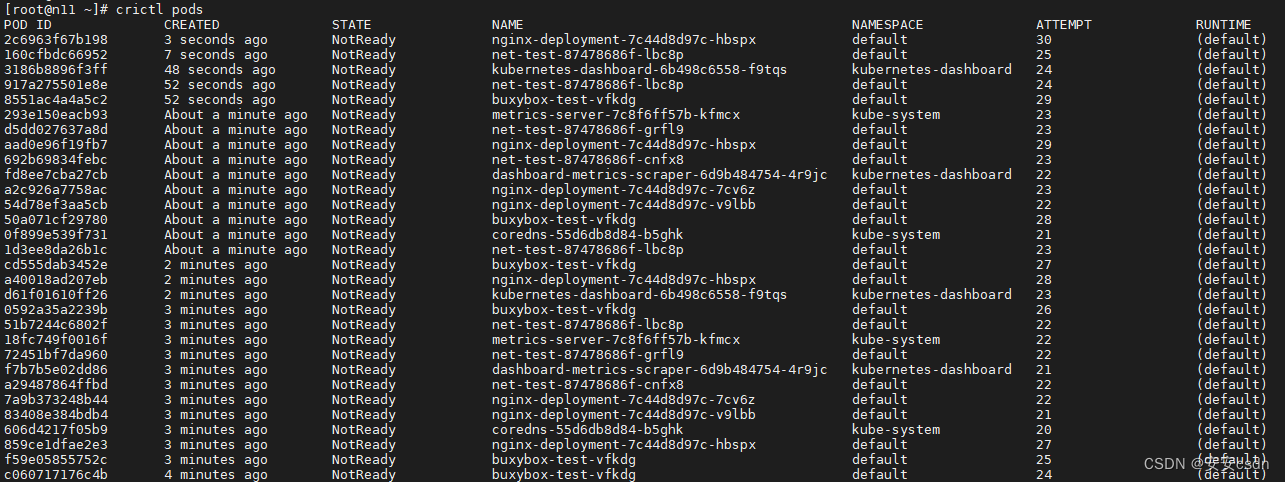

The host runs a single node. Occasionally one of the pods started on it comes up normally; otherwise all of them are abnormal.

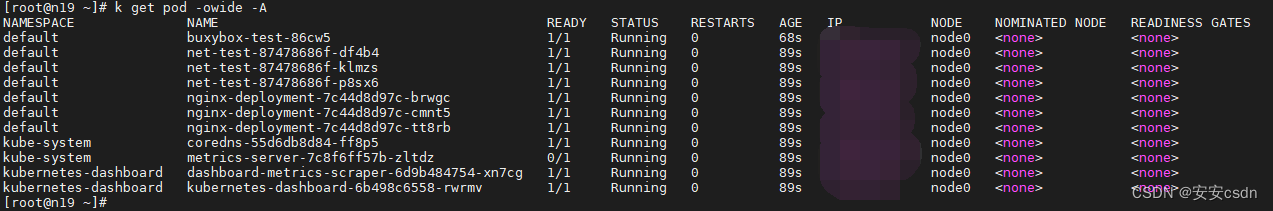

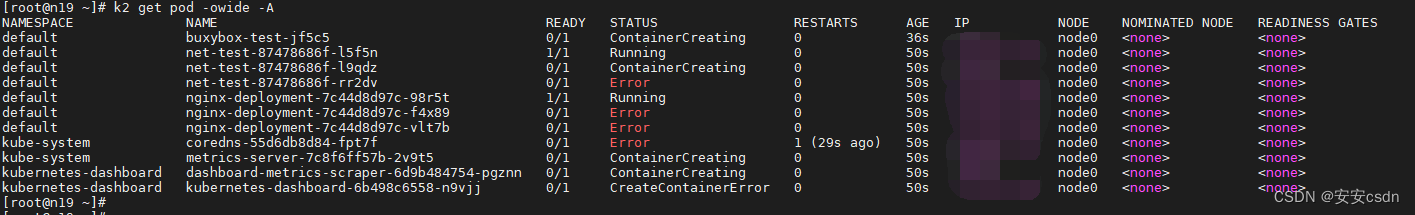

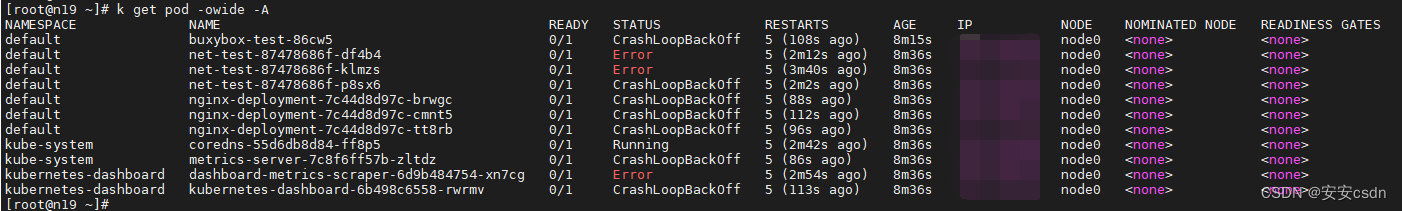

Inspecting the pods shows that none of them are Ready.

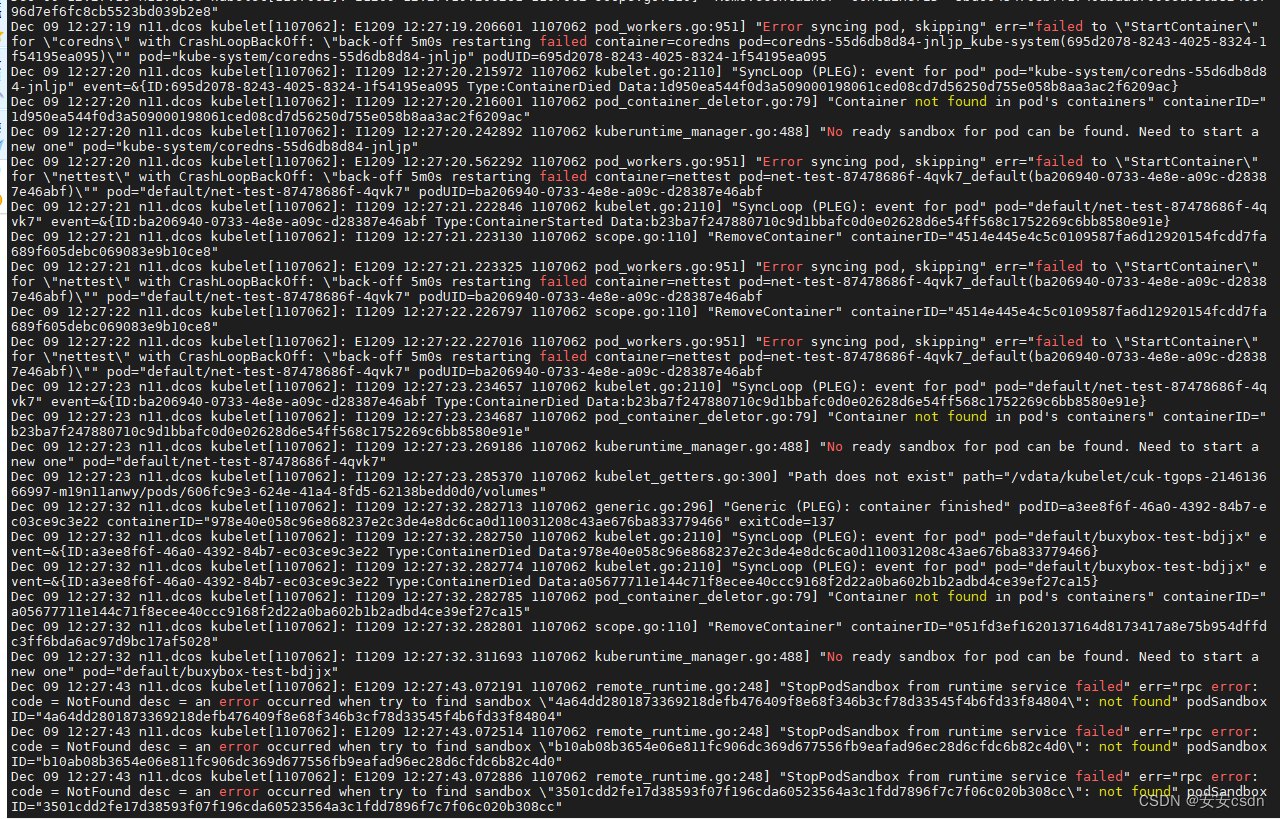

Checking the kubelet log shows a flood of errors:

Dec 09 12:33:10 n11.dcos kubelet[1107062]: I1209 12:33:10.817133 1107062 kubelet.go:2110] "SyncLoop (PLEG): event for pod" pod="kube-system/coredns-55d6db8d84-jnljp" event=&{ID:695d2078-8243-4025-8324-1f54195ea095 Type:ContainerDied Data:5f4e3353f3c396228e993c10d8bba8894a0a68f53987aa2aa534132e4379ca39}

Dec 09 12:33:10 n11.dcos kubelet[1107062]: I1209 12:33:10.817164 1107062 pod_container_deletor.go:79] "Container not found in pod's containers" containerID="5f4e3353f3c396228e993c10d8bba8894a0a68f53987aa2aa534132e4379ca39"

Dec 09 12:33:10 n11.dcos kubelet[1107062]: I1209 12:33:10.879058 1107062 kuberuntime_manager.go:488] "No ready sandbox for pod can be found. Need to start a new one" pod="kube-system/coredns-55d6db8d84-jnljp"

Dec 09 12:33:14 n11.dcos kubelet[1107062]: E1209 12:33:14.684002 1107062 pod_workers.go:951] "Error syncing pod, skipping" err="failed to \"StartContainer\" for \"nettest\" with CrashLoopBackOff: \"back-off 5m0s restarting failed container=nettest pod=net-test-87478686f-4qvk7_default(ba206940-0733-4e8e-a09c-d28387e46abf)\"" pod="default/net-test-87478686f-4qvk7" podUID=ba206940-0733-4e8e-a09c-d28387e46abf

Dec 09 12:33:14 n11.dcos kubelet[1107062]: I1209 12:33:14.844797 1107062 kubelet.go:2110] "SyncLoop (PLEG): event for pod" pod="default/net-test-87478686f-4qvk7" event=&{ID:ba206940-0733-4e8e-a09c-d28387e46abf Type:ContainerStarted Data:34ef1dc4b2394818a7a843a8655af5444af0766083fe72a53204d9e554ed30c7}

Dec 09 12:33:14 n11.dcos kubelet[1107062]: I1209 12:33:14.845095 1107062 scope.go:110] "RemoveContainer" containerID="797fdf4f559d1079c4e38bc6da7dbc3d547a8f41aa9da86e80d12934326b9507"

Dec 09 12:33:14 n11.dcos kubelet[1107062]: E1209 12:33:14.845294 1107062 pod_workers.go:951] "Error syncing pod, skipping" err="failed to \"StartContainer\" for \"nettest\" with CrashLoopBackOff: \"back-off 5m0s restarting failed container=nettest pod=net-test-87478686f-4qvk7_default(ba206940-0733-4e8e-a09c-d28387e46abf)\"" pod="default/net-test-87478686f-4qvk7" podUID=ba206940-0733-4e8e-a09c-d28387e46abf

Dec 09 12:33:15 n11.dcos kubelet[1107062]: I1209 12:33:15.285417 1107062 kubelet_getters.go:300] "Path does not exist" path="/vdata/kubelet/cuk-tgops-214613666997-m19n11anwy/pods/606fc9e3-624e-41a4-8fd5-62138bedd0d0/volumes"

Dec 09 12:33:15 n11.dcos kubelet[1107062]: I1209 12:33:15.849072 1107062 scope.go:110] "RemoveContainer" containerID="797fdf4f559d1079c4e38bc6da7dbc3d547a8f41aa9da86e80d12934326b9507"

Dec 09 12:33:15 n11.dcos kubelet[1107062]: E1209 12:33:15.849298 1107062 pod_workers.go:951] "Error syncing pod, skipping" err="failed to \"StartContainer\" for \"nettest\" with CrashLoopBackOff: \"back-off 5m0s restarting failed container=nettest pod=net-test-87478686f-4qvk7_default(ba206940-0733-4e8e-a09c-d28387e46abf)\"" pod="default/net-test-87478686f-4qvk7" podUID=ba206940-0733-4e8e-a09c-d28387e46abf

Dec 09 12:33:16 n11.dcos kubelet[1107062]: I1209 12:33:16.856556 1107062 kubelet.go:2110] "SyncLoop (PLEG): event for pod" pod="default/net-test-87478686f-4qvk7" event=&{ID:ba206940-0733-4e8e-a09c-d28387e46abf Type:ContainerDied Data:34ef1dc4b2394818a7a843a8655af5444af0766083fe72a53204d9e554ed30c7}

Dec 09 12:33:16 n11.dcos kubelet[1107062]: I1209 12:33:16.856585 1107062 pod_container_deletor.go:79] "Container not found in pod's containers" containerID="34ef1dc4b2394818a7a843a8655af5444af0766083fe72a53204d9e554ed30c7"

Dec 09 12:33:16 n11.dcos kubelet[1107062]: I1209 12:33:16.887999 1107062 kuberuntime_manager.go:488] "No ready sandbox for pod can be found. Need to start a new one" pod="default/net-test-87478686f-4qvk7"

Dec 09 12:33:21 n11.dcos kubelet[1107062]: I1209 12:33:21.285448 1107062 kubelet_getters.go:300] "Path does not exist" path="/vdata/kubelet/cuk-tgops-214613666997-m19n11anwy/pods/a53c22da-66e3-4ca0-aa58-70333f97b1f4/volumes"

Dec 09 12:33:36 n11.dcos kubelet[1107062]: E1209 12:33:36.075809 1107062 remote_runtime.go:248] "StopPodSandbox from runtime service failed" err="rpc error: code = NotFound desc = an error occurred when try to find sandbox \"8c9a835ce06133da9af68d2e09fa2c429ebe292d4273b44937300b432c117c79\": not found" podSandbox ID="8c9a835ce06133da9af68d2e09fa2c429ebe292d4273b44937300b432c117c79"

Dec 09 12:33:36 n11.dcos kubelet[1107062]: E1209 12:33:36.076142 1107062 remote_runtime.go:248] "StopPodSandbox from runtime service failed" err="rpc error: code = NotFound desc = an error occurred when try to find sandbox \"7e7d0bfc388bbd1afbaee350c6ee443ebc77ec0fbd47747743cf0b80317fe9a3\": not found" podSandbox ID="7e7d0bfc388bbd1afbaee350c6ee443ebc77ec0fbd47747743cf0b80317fe9a3"

Dec 09 12:33:36 n11.dcos kubelet[1107062]: E1209 12:33:36.076428 1107062 remote_runtime.go:248] "StopPodSandbox from runtime service failed" err="rpc error: code = NotFound desc = an error occurred when try to find sandbox \"ae37e7198949ba4fefe79e0c43cba6d7e2e9e86bfed45315d590654771af550c\": not found" podSandbox ID="ae37e7198949ba4fefe79e0c43cba6d7e2e9e86bfed45315d590654771af550c"

Dec 09 12:33:38 n11.dcos kubelet[1107062]: E1209 12:33:38.225891 1107062 pod_workers.go:951] "Error syncing pod, skipping" err="failed to \"StartContainer\" for \"nginx\" with CrashLoopBackOff: \"back-off 5m0s restarting failed container=nginx pod=nginx-deployment-7c44d8d97c-xmc4d_default(29ca696f-a456-4854-be71-f29850e8c155)\"" pod="default/nginx-deployment-7c44d8d97c-xmc4d" podUID=29ca696f-a456-4854-be71-f29850e8c155

Dec 09 12:33:38 n11.dcos kubelet[1107062]: I1209 12:33:38.955676 1107062 kubelet.go:2110] "SyncLoop (PLEG): event for pod" pod="default/nginx-deployment-7c44d8d97c-xmc4d" event=&{ID:29ca696f-a456-4854-be71-f29850e8c155 Type:ContainerStarted Data:ab57bd5b5ab16ef80b46e9e6cbec947016c90893c7fb1d90cf3b9ba535c8e588}

Dec 09 12:33:38 n11.dcos kubelet[1107062]: I1209 12:33:38.956127 1107062 scope.go:110] "RemoveContainer" containerID="54a5a0bab215c7b30e34826f78a3027deeb26ea0e7384772f0fc149ca5184ab5"

Dec 09 12:33:38 n11.dcos kubelet[1107062]: E1209 12:33:38.956460 1107062 pod_workers.go:951] "Error syncing pod, skipping" err="failed to \"StartContainer\" for \"nginx\" with CrashLoopBackOff: \"back-off 5m0s restarting failed container=nginx pod=nginx-deployment-7c44d8d97c-xmc4d_default(29ca696f-a456-4854-be71-f29850e8c155)\"" pod="default/nginx-deployment-7c44d8d97c-xmc4d" podUID=29ca696f-a456-4854-be71-f29850e8c155

Dec 09 12:33:39 n11.dcos kubelet[1107062]: I1209 12:33:39.959295 1107062 scope.go:110] "RemoveContainer" containerID="54a5a0bab215c7b30e34826f78a3027deeb26ea0e7384772f0fc149ca5184ab5"

Dec 09 12:33:39 n11.dcos kubelet[1107062]: E1209 12:33:39.959503 1107062 pod_workers.go:951] "Error syncing pod, skipping" err="failed to \"StartContainer\" for \"nginx\" with CrashLoopBackOff: \"back-off 5m0s restarting failed container=nginx pod=nginx-deployment-7c44d8d97c-xmc4d_default(29ca696f-a456-4854-be71-

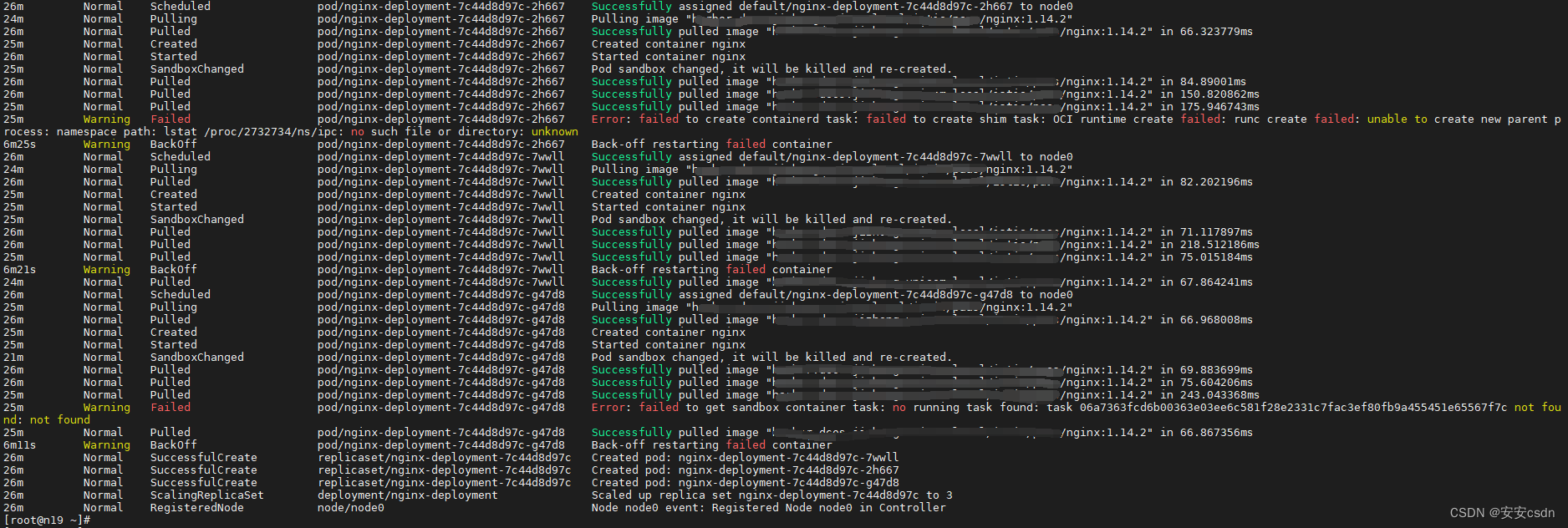
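A log this noisy is easier to read once it is condensed. The following is a minimal Python sketch, not part of the original investigation, that counts the CrashLoopBackOff sync errors per pod and the "StopPodSandbox ... NotFound" errors in kubelet log lines like the ones above. The function name `summarize_kubelet_log` and the placeholder label for lines without a `pod="..."` field are assumptions made for this illustration.

```python
import re
from collections import Counter

# Matches the quoted pod="namespace/name" field that kubelet appends to its log lines.
POD_RE = re.compile(r'pod="([^"]+)"')


def summarize_kubelet_log(lines):
    """Return (CrashLoopBackOff count per pod, number of sandbox NotFound errors).

    Hypothetical helper for this article; it only looks for substrings that
    appear in the log excerpt above.
    """
    crash_loops = Counter()
    sandbox_not_found = 0
    for line in lines:
        if "CrashLoopBackOff" in line:
            match = POD_RE.search(line)
            # A truncated line may have lost its pod field; count it separately.
            pod = match.group(1) if match else "(pod field missing)"
            crash_loops[pod] += 1
        elif "StopPodSandbox" in line and "code = NotFound" in line:
            sandbox_not_found += 1
    return crash_loops, sandbox_not_found
```

The function accepts any iterable of lines, so it can be fed an open log file or saved output from the node's journal. On the excerpt above it would show the same pods cycling through back-off repeatedly while the runtime reports sandboxes it can no longer find.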

Check the events

kubectl get event

The main error messages are as follows:

34m Warning Failed pod/buxybox-test-vd9lp Error: failed to create containerd task: failed to create shim task: OCI runtime create failed: runc create failed: unable to start container process: error during container init: read init-p: connection reset by peer: unknown

31m Warning FailedCreatePodSandBox pod/net-test-87478686f-2ts26 Failed to create pod sandbox: rpc error: code = Unknown desc = failed to setup network for sandbox "bd6fef3750ad1321a556bebeb4b86ee3202ecb8760f3a05598e7ee1f395dfc9e": plugin type="cucni" name="cucni" failed (add): cni add error; network not ready after 30s

33m Warning Failed pod/net-test-87478686f-st87d Error: failed to start containerd task "nettest": OCI runtime start failed: container process is already dead: unknown

33m Warning Failed pod/nginx-deployment-7c44d8d97c-2h667 Error: failed to create containerd task: failed to create shim task: OCI runtime create failed: runc create failed: unable to create new parent process: namespace path: lstat /proc/2732734/ns/ipc: no such file or directory: unknown

33m Warning Failed pod/nginx-deployment-7c44d8d97c-g47d8 Error: failed to get sandbox container task: no running task found: task 06a7363fcd6b00363e03ee6c581f28e2331c7fac3ef80fb9a455451e65567f7c not found: not found
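The messages above fail at different stages (runc, the CNI plugin, the containerd task), which makes a single root cause hard to spot by eye. As a rough aid, the Python sketch below groups event messages by a few substrings copied from the events in this article. The labels and the names `SIGNATURES`, `classify_event` and `tally` are informal choices made for this illustration, not an official taxonomy.

```python
from collections import Counter

# (substring, label) pairs. The substrings come from the event messages in this
# article; the labels are informal names invented for this sketch.
SIGNATURES = [
    ("no running task found", "sandbox task missing"),
    ("container process is already dead", "process already dead"),
    ("cannot start a stopped process", "process already stopped"),
    ("network not ready", "CNI network not ready"),
    ("Link not found", "CNI link missing"),
    ("runc create failed", "runc create failed"),
]


def classify_event(message):
    """Return the label of the first signature found in the message."""
    for needle, label in SIGNATURES:
        if needle in message:
            return label
    return "other"


def tally(messages):
    """Count how many messages fall under each label."""
    return Counter(classify_event(message) for message in messages)
```

When every stage of sandbox creation fails intermittently like this, it hints that something outside the pods is interfering with the runtime, which matches what was eventually found on this host.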

Solution

It later turned out that two containerd instances and two kubelets were running on the host, which caused the conflict. Removing the leftover containerd and kubelet fixed the problem.

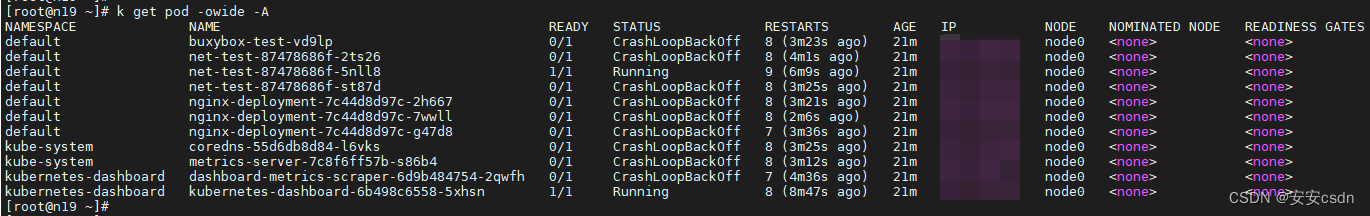
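A quick way to check for this situation is to count the node daemons in the process list. The Python sketch below is an illustration under assumptions rather than the method used in the original investigation: it expects the text produced by `ps -eo pid,comm` on Linux and assumes the daemons show up under the command names `kubelet` and `containerd`. The function name `find_duplicate_daemons` is made up for this example.

```python
from collections import defaultdict

# Command names to watch. Exact matching keeps "containerd-shim" processes,
# which are expected to exist once per sandbox, out of the count.
WATCHED = ("kubelet", "containerd")


def find_duplicate_daemons(ps_output):
    """Return {command: [pids]} for watched daemons that run more than once.

    ps_output is assumed to be the text of `ps -eo pid,comm`.
    """
    pids = defaultdict(list)
    for line in ps_output.splitlines():
        parts = line.split(None, 1)
        # Skip the header and any line that does not start with a numeric PID.
        if len(parts) != 2 or not parts[0].isdigit():
            continue
        command = parts[1].strip()
        if command in WATCHED:
            pids[command].append(int(parts[0]))
    return {name: found for name, found in pids.items() if len(found) > 1}
```

An empty result means each daemon runs at most once. Any entry in the result is worth a closer look at the listed PIDs, their command lines and their data directories before removing anything.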

Verifying the cause

Start the node processes (kubelet and containerd) of two clusters on the same host at the same time.

After the first cluster's processes were started, everything was fine.

After the second cluster's processes were started, the second cluster's pods could not start.

Going back to the first cluster, all of its pods had gone down as well.

2m23s Normal Scheduled pod/buxybox-test-jf5c5 Successfully assigned default/buxybox-test-jf5c5 to node0

93s Warning FailedCreatePodSandBox pod/buxybox-test-jf5c5 Failed to create pod sandbox: rpc error: code = Unknown desc = failed to setup network for sandbox "34d367de3caa7eac610c5b4d119406764ffcbe7ce2001de91cc27a362d3b6e94": plugin type="cucni" name="cucni" failed (add): cni add error; network not ready after 30s

67s Warning FailedCreatePodSandBox pod/buxybox-test-jf5c5 Failed to create pod sandbox: rpc error: code = Unknown desc = failed to start sandbox container task "46dc633fb32aed71d127b78eccc5af35842d81564ba24c713aa29708dff89d61": OCI runtime start failed: container process is already dead: unknown

40s Warning FailedCreatePodSandBox pod/buxybox-test-jf5c5 Failed to create pod sandbox: rpc error: code = Unknown desc = failed to start sandbox container task "f384ef4587957920555bab2d5ecbe91f02526bbf88a969ae60fc8d7aacdc7b16": cannot start a stopped process: unknown


2m7s Warning FailedCreatePodSandBox pod/net-test-87478686f-l5f5n Failed to create pod sandbox: rpc error: code = Unknown desc = failed to setup network for sandbox "0d989f3e527299314aa0f11c073767ff1fc700929a0d9af7367d25afc8312e09": plugin type="cucni" name="cucni" failed (add): error; Link not found

94s Warning FailedCreatePodSandBox pod/net-test-87478686f-l9qdz Failed to create pod sandbox: rpc error: code = Unknown desc = failed to setup network for sandbox "4fa3d5d807cbb0c2be6bb6e6cb13a8ec15ecef124b19b9363507d5e40c8118a5": plugin type="cucni" name="cucni" failed (add): cni add error; network not ready after 30s

2m14s Warning Failed pod/net-test-87478686f-rr2dv Error: failed to get sandbox container task: no running task found: task 947dd55920aa49d9544b7867703242cb3b294fec317de4cafa247377ed1d34aa not found: not found

7m29s Warning Failed pod/net-test-87478686f-klmzs Error: failed to create containerd task: failed to create shim task: OCI runtime create failed: runc create failed: unable to start container process: error during container init: %!w(<nil>): unknown

The conclusion is that the conflict was caused by using one host as a node of two clusters at the same time.