Life is short, I use Python

Preface

When watching videos, we sometimes come across mosaics covering parts of the picture that get in the way of the viewing experience. So how exactly are these mosaics added?

This time, we will use Python to add mosaics to videos automatically!

Preparation

As before, the environment is Python 3.8 and PyCharm 2021.

How it works

- Split the video into its audio track and its frames;

- Detect the faces that appear in each frame, compare them with the target face, and cover the matching face with a mask;

- Add the sound back to the processed video.

Modules

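Install the cv2 module manually: pip install opencv-python. The face_recognition module is also required; it depends on cmake and dlib, so install those first and then run pip install face_recognition.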

If an error occurs during installation and the module will not install, see the article pinned at the top of my homepage.

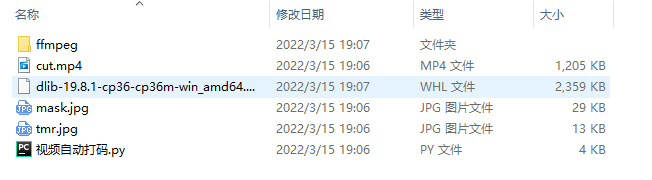

Tools and materials

We also need to install ffmpeg, the audio and video transcoding tool.
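Before running anything, it can help to confirm that the Python modules and ffmpeg are reachable. A minimal sketch using only the standard library; `check_environment` is a hypothetical helper, and the module and tool names are simply the ones this article relies on.

```python
import importlib.util
import shutil


# Hypothetical helper: report which required modules and command-line tools are available
def check_environment(modules=("cv2", "face_recognition"), tools=("ffmpeg",)):
    status = {}
    for name in modules:
        # find_spec returns None when the module cannot be found on the import path
        status[name] = importlib.util.find_spec(name) is not None
    for tool in tools:
        # shutil.which returns None when the executable is not on PATH
        status[tool] = shutil.which(tool) is not None
    return status


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'OK' if ok else 'missing'}")
```

If ffmpeg shows up as missing even though it is installed, its folder is probably not on the PATH of the interpreter PyCharm is using.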


Code walkthrough

Import the required modules

```python
import subprocess

import cv2
import face_recognition  # face recognition library built on dlib; install cmake and dlib first
```

Extract the audio from the video

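```python
def video2mp3(file_name):
    """
    :param file_name: path to the video file
    :return: path to the extracted MP3 file
    """
    # Replace only the last extension, so paths like "./cut.mp4" still work
    outfile_name = file_name.rsplit('.', 1)[0] + '.mp3'
    # Pass the command as a list: with shell=False, a single string is treated
    # as the program name on Linux/macOS and the call fails.
    # -y overwrites an existing output file instead of waiting for confirmation.
    cmd = ['ffmpeg', '-y', '-i', file_name, '-f', 'mp3', outfile_name]
    print(' '.join(cmd))
    subprocess.call(cmd)
    return outfile_name
```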
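Splitting the path on the first dot (`split('.')[0]`) breaks relative paths such as `./clips/cut.mp4`, because everything after the leading `.` is lost. A minimal sketch, assuming you prefer `os.path.splitext` (which removes only the final extension) and want the ffmpeg call as an argument list; `replace_ext`, `extract_audio_cmd` and `extract_audio` are hypothetical helper names, not part of any library.

```python
import os
import subprocess


# Hypothetical helper: swap only the last extension of a path
def replace_ext(path, new_ext):
    base, _old_ext = os.path.splitext(path)
    return base + new_ext


# Hypothetical helper: ffmpeg arguments that extract MP3 audio from a video
def extract_audio_cmd(video_path):
    return ["ffmpeg", "-i", video_path, "-f", "mp3", replace_ext(video_path, ".mp3")]


# Hypothetical helper: run the command and return the audio path
def extract_audio(video_path):
    cmd = extract_audio_cmd(video_path)
    # check=True raises CalledProcessError if ffmpeg exits with an error
    subprocess.run(cmd, check=True)
    return cmd[-1]


if __name__ == "__main__":
    print(extract_audio_cmd("./clips/cut.v2.mp4"))
    # prints ['ffmpeg', '-i', './clips/cut.v2.mp4', '-f', 'mp3', './clips/cut.v2.mp3']
```

Passing a list lets subprocess hand each argument to ffmpeg unchanged, so file names containing spaces also work without any shell quoting.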

Add the mosaic

```python
def mask_video(input_video, output_video, mask_path='mask.jpg'):
    """
    :param input_video: video to be masked
    :param output_video: masked video (frames only, no audio)
    :param mask_path: image pasted over the target face
    :return:
    """
    # Read the mask image (cv2.imread returns None if the file is missing)
    mask = cv2.imread(mask_path)
    if mask is None:
        raise FileNotFoundError(mask_path)
    # Open the video
    cap = cv2.VideoCapture(input_video)
    # Video fps, width, height
    v_fps = cap.get(cv2.CAP_PROP_FPS)
    v_width = cap.get(cv2.CAP_PROP_FRAME_WIDTH)
    v_height = cap.get(cv2.CAP_PROP_FRAME_HEIGHT)
    # Writer parameters: MP4 format, same frame size as the input
    size = (int(v_width), int(v_height))
    fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')
    # Output video
    out = cv2.VideoWriter(output_video, fourcc, v_fps, size)
    # Known face: the person whose face should be covered
    known_image = face_recognition.load_image_file('tmr.jpg')
    known_encoding = face_recognition.face_encodings(known_image)[0]

    while cap.isOpened():
        ret, frame = cap.read()
        if not ret:
            break
        # OpenCV frames are BGR; face_recognition expects RGB
        rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
        # Detect faces: each location is (top, right, bottom, left)
        face_locations = face_recognition.face_locations(rgb)
        # Encode every detected face in place, so no cropping is needed
        encodings = face_recognition.face_encodings(rgb, face_locations)
        for (top, right, bottom, left), unknown_encoding in zip(face_locations, encodings):
            print((top, right, bottom, left))
            # Compare with the known face; the result looks like [True]
            results = face_recognition.compare_faces([known_encoding], unknown_encoding)
            if results[0]:
                # cv2.resize takes (width, height); resize a copy so the
                # original mask is not degraded by repeated resizing
                face_mask = cv2.resize(mask, (right - left, bottom - top))
                frame[top:bottom, left:right] = face_mask
        out.write(frame)

    # Release both handles so the MP4 file is finalized
    cap.release()
    out.release()
```
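`face_recognition.compare_faces` treats two faces as the same person when the Euclidean distance between their 128-dimensional encodings is at most `tolerance` (0.6 by default). The pure-Python sketch below mimics that rule with short made-up vectors standing in for real encodings; `compare_faces_sketch` is a hypothetical name, and the real function works on NumPy arrays.

```python
import math


# Hypothetical re-implementation of the matching rule: True where distance <= tolerance
def compare_faces_sketch(known_encodings, candidate, tolerance=0.6):
    # math.dist computes the Euclidean distance between two equal-length sequences
    return [math.dist(known, candidate) <= tolerance for known in known_encodings]


if __name__ == "__main__":
    known = [[0.1, 0.2, 0.3]]
    print(compare_faces_sketch(known, [0.1, 0.2, 0.4]))  # distance 0.1 -> [True]
    print(compare_faces_sketch(known, [0.9, 0.9, 0.9]))  # distance ~1.22 -> [False]
```

If the mask ends up on people who merely resemble the target, passing a smaller value such as `tolerance=0.5` to `compare_faces` makes matching stricter, at the cost of occasionally missing the target in difficult frames.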

Add the audio back to the frames

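```python
def video_add_mp3(file_name, mp3_file):
    """
    :param file_name: video file containing the frames (no audio)
    :param mp3_file: audio file of the video
    :return: path to the final video
    """
    outfile_name = file_name.rsplit('.', 1)[0] + '-f.mp4'
    # Pass arguments as a list so the call also works with shell=False on Linux/macOS
    subprocess.call(['ffmpeg', '-y', '-i', file_name, '-i', mp3_file,
                     '-strict', '-2', '-f', 'mp4', outfile_name])
    return outfile_name
```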
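As an alternative to the command above, a sketch assuming a reasonably recent ffmpeg: take the video from the first input and the audio from the second, copy the video stream instead of re-encoding it (avoiding a second lossy encode), and encode the audio as AAC. On current builds `-strict -2` is generally no longer required. `mux_cmd` and `mux` are hypothetical helpers, not the article's original command.

```python
import subprocess


# Hypothetical helper: ffmpeg arguments that combine silent video with an audio track
def mux_cmd(video_path, audio_path, out_path):
    return [
        "ffmpeg", "-y",
        "-i", video_path,   # input 0: masked frames, no audio
        "-i", audio_path,   # input 1: extracted audio
        "-map", "0:v:0",    # video stream from input 0
        "-map", "1:a:0",    # audio stream from input 1
        "-c:v", "copy",     # copy the video without re-encoding
        "-c:a", "aac",      # encode the audio as AAC for the MP4 container
        "-shortest",        # stop when the shorter stream ends
        out_path,
    ]


# Hypothetical helper: run the command, raising CalledProcessError if ffmpeg fails
def mux(video_path, audio_path, out_path):
    subprocess.run(mux_cmd(video_path, audio_path, out_path), check=True)
    return out_path


if __name__ == "__main__":
    print(" ".join(mux_cmd("output.mp4", "cut.mp3", "output-f.mp4")))
```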

Complete code

```python
import subprocess

import cv2
import face_recognition  # face recognition library built on dlib; install cmake and dlib first


def video2mp3(file_name):
    outfile_name = file_name.rsplit('.', 1)[0] + '.mp3'
    # Argument list, so shell=False works on every platform
    cmd = ['ffmpeg', '-y', '-i', file_name, '-f', 'mp3', outfile_name]
    print(' '.join(cmd))
    subprocess.call(cmd)
    return outfile_name


def mask_video(input_video, output_video, mask_path='mask.jpg'):
    # Read the mask image
    mask = cv2.imread(mask_path)
    if mask is None:
        raise FileNotFoundError(mask_path)
    # Open the video and read fps, width, height
    cap = cv2.VideoCapture(input_video)
    v_fps = cap.get(cv2.CAP_PROP_FPS)
    v_width = cap.get(cv2.CAP_PROP_FRAME_WIDTH)
    v_height = cap.get(cv2.CAP_PROP_FRAME_HEIGHT)
    # Output video: MP4 format, same frame size as the input
    size = (int(v_width), int(v_height))
    fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')
    out = cv2.VideoWriter(output_video, fourcc, v_fps, size)
    # Known face
    known_image = face_recognition.load_image_file('tmr.jpg')
    known_encoding = face_recognition.face_encodings(known_image)[0]

    while cap.isOpened():
        ret, frame = cap.read()
        if not ret:
            break
        # Detect and encode faces (face_recognition expects RGB)
        rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
        face_locations = face_recognition.face_locations(rgb)
        encodings = face_recognition.face_encodings(rgb, face_locations)
        for (top, right, bottom, left), unknown_encoding in zip(face_locations, encodings):
            print((top, right, bottom, left))
            # Compare faces, then paste the resized mask over a match
            results = face_recognition.compare_faces([known_encoding], unknown_encoding)
            if results[0]:
                face_mask = cv2.resize(mask, (right - left, bottom - top))
                frame[top:bottom, left:right] = face_mask
        out.write(frame)

    # Release both handles so the MP4 file is finalized
    cap.release()
    out.release()


def video_add_mp3(file_name, mp3_file):
    outfile_name = file_name.rsplit('.', 1)[0] + '-f.mp4'
    subprocess.call(['ffmpeg', '-y', '-i', file_name, '-i', mp3_file,
                     '-strict', '-2', '-f', 'mp4', outfile_name])
    return outfile_name


if __name__ == '__main__':
    # 1. Extract the audio
    video2mp3('cut.mp4')
    # 2. Mask the target face (the output has no audio)
    mask_video(input_video='cut.mp4', output_video='output.mp4')
    # 3. Add the audio back
    video_add_mp3(file_name='output.mp4', mp3_file='cut.mp3')
```
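The code above covers the face with a picture (`mask.jpg`). A classic mosaic instead replaces each small tile of pixels with its average. The sketch below shows that idea in pure Python on a 2-D grid of grayscale values; `pixelate` is a hypothetical helper, and for real frames you would apply the same idea to the face region of the NumPy array. With OpenCV, the same effect is commonly obtained by shrinking the region with `cv2.resize` and enlarging it again with `interpolation=cv2.INTER_NEAREST`.

```python
# Hypothetical helper: block-average mosaic on a 2-D grid of grayscale values
def pixelate(region, block):
    h, w = len(region), len(region[0])
    out = [row[:] for row in region]  # copy so the input is not modified
    for y0 in range(0, h, block):
        for x0 in range(0, w, block):
            # Tiles at the right/bottom edge may be smaller than block x block
            ys = range(y0, min(y0 + block, h))
            xs = range(x0, min(x0 + block, w))
            total = sum(region[y][x] for y in ys for x in xs)
            mean = total // (len(ys) * len(xs))  # integer mean, like 8-bit pixels
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


if __name__ == "__main__":
    print(pixelate([[0, 10, 20, 30], [40, 50, 60, 70]], 2))
    # prints [[25, 25, 45, 45], [25, 25, 45, 45]]
```

A larger `block` gives a coarser, harder-to-recognize mosaic.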

Give it a try, folks!

Feel free to discuss and share ideas in the comments ~