LVS load balancing cluster: NAT mode

One: What a cluster is

1. A cluster consists of multiple hosts but appears to the outside as a single system.

2. In Internet applications, sites demand ever higher hardware performance, response speed, service stability, and data reliability, and a single server can no longer cope.

Solutions:

- Use expensive minicomputers or mainframes

- Use ordinary servers to build a service cluster

Two: Cluster classification

Depending on the goal the cluster targets, clusters fall into three categories:

- Load-balancing cluster (LB)

The goal is to improve application responsiveness, handle as many access requests as possible, and reduce latency, giving high-concurrency, high-load overall performance;

how an LB cluster distributes load depends on the scheduling algorithm of the master node.

- High-availability cluster (HA)

The goal is to improve application reliability and reduce downtime as much as possible, ensuring continuity of service and providing the fault tolerance of high availability;

HA works in two modes: duplex and master-slave.

- High-performance computing cluster (HPC)

The goal is to increase the application's CPU speed and to extend hardware resources and analysis capacity, giving high-performance computing power comparable to a large machine or supercomputer;

high-performance computing clusters depend on "distributed computing" and "parallel computing": dedicated hardware and software integrate the CPU, memory, and other resources of multiple servers to deliver the computing power that otherwise only large machines and supercomputers have.

Three: Load balancing cluster operating modes

- The load-balancing cluster is the cluster type most widely used by companies

- The cluster's load scheduling technology has three working modes:

- Address translation (NAT mode)

- IP tunnel (TUN mode)

- Direct routing (DR mode)

Four: Load balancing cluster structure

First layer: load balancer

- The only entrance to the whole cluster. It is responsible only for answering client requests and distributing them to the server pool according to the load scheduling algorithm. To the outside it presents the VIP (virtual IP) address, also known as the cluster IP address

Second layer: server pool

- Provides the actual application services to clients. Each real server (a node in the server pool) has its own RIP (real IP) and handles only the client requests that the scheduler distributes to it

Third layer: shared storage

- Provides stable, consistent file access for all nodes in the server pool, so that the cluster's content stays consistent (clients see the same content no matter which node serves them)

![Cluster structure diagram](https://img-blog.csdnimg.cn/20200116153655125.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

Five: LVS load scheduling algorithms

##### Round robin (Round Robin)

- Incoming access requests are assigned to the nodes (real servers) in the cluster in turn. Every server is treated equally, regardless of its actual number of connections or system load
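The rotation can be sketched in a few lines of shell. This is only an illustration of the idea, not how the kernel scheduler is implemented; the function name `rr_pick` is made up for the example, and the server list reuses the two web node addresses from the lab later in this article.

```shell
#!/bin/sh
# Round-robin sketch: requests are handed to the real servers in a fixed rotation.
SERVERS="192.168.100.110 192.168.100.111"

# rr_pick N prints the server that handles the N-th request (N starts at 0).
rr_pick() {
    request_no=$1
    # Load the server list into the positional parameters ($# = server count).
    set -- $SERVERS
    # Skip (N mod count) servers; the next one is the pick.
    shift $(( request_no % $# ))
    printf '%s\n' "$1"
}

for n in 0 1 2 3; do
    echo "request $n -> $(rr_pick "$n")"
done
```

Requests 0 and 2 land on the first server and requests 1 and 3 on the second, whatever load each server currently carries.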

##### Weighted round robin (Weighted Round Robin)

- Incoming access requests are assigned in turn according to the processing capacity of each real server, expressed as a weight. The weight is normally set by the administrator; a monitoring tool can also query each node's load and adjust its weight dynamically

- This ensures that servers with more processing power take on more of the traffic
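A simplified way to picture weighting is to let each server appear in the rotation as many times as its weight. The sketch below does exactly that; the weights (2 and 1) and the name `wrr_pick` are assumptions for illustration, and the real scheduler may order its picks differently while keeping the same proportions.

```shell
#!/bin/sh
# Weighted round-robin sketch. Each entry is address:weight.
# Hypothetical weights: web1 is assumed to be twice as capable as web2.
WEIGHTED_SERVERS="192.168.100.110:2 192.168.100.111:1"

# wrr_pick N prints the server for the N-th request (N starts at 0).
wrr_pick() {
    request_no=$1
    rotation=""
    for entry in $WEIGHTED_SERVERS; do
        addr=${entry%%:*}
        weight=${entry##*:}
        # Repeat the address "weight" times in the rotation.
        i=0
        while [ "$i" -lt "$weight" ]; do
            rotation="$rotation $addr"
            i=$(( i + 1 ))
        done
    done
    set -- $rotation
    shift $(( request_no % $# ))
    printf '%s\n' "$1"
}

for n in 0 1 2 3 4 5; do
    echo "request $n -> $(wrr_pick "$n")"
done
```

Out of every three requests, two go to the server with weight 2 and one to the server with weight 1.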

##### Least connections (Least Connections)

- Requests are assigned according to the number of connections each real server has established; an incoming request goes first to the node with the fewest connections

##### Weighted least connections (Weighted Least Connections)

- When server nodes differ greatly in performance, the weight of each real server can be adjusted

- Nodes with higher weights take on a larger share of the active connections
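Both connection-based algorithms can be sketched as "pick the node with the smallest connections-to-weight ratio"; plain least connections is the special case where every weight is 1. The pool below uses made-up weights and connection counts, `wlc_pick` is a hypothetical name, and the real scheduler's bookkeeping is more detailed than this.

```shell
#!/bin/sh
# Weighted least-connections sketch.
# Each entry is address:weight:active_connections (all values hypothetical).
POOL="192.168.100.110:2:6 192.168.100.111:1:2"

# wlc_pick prints the server with the smallest connections/weight ratio.
# Ratios are compared by cross-multiplication to stay in integer arithmetic.
wlc_pick() {
    best_addr=""
    best_conns=0
    best_weight=1
    for entry in $POOL; do
        addr=${entry%%:*}
        rest=${entry#*:}
        weight=${rest%%:*}
        conns=${rest##*:}
        if [ -z "$best_addr" ] ||
           [ $(( conns * best_weight )) -lt $(( best_conns * weight )) ]; then
            best_addr=$addr
            best_conns=$conns
            best_weight=$weight
        fi
    done
    printf '%s\n' "$best_addr"
}

echo "next request -> $(wlc_pick)"
```

With these numbers the first node has a ratio of 6/2 = 3 and the second 2/1 = 2, so the next request goes to 192.168.100.111 even though its weight is lower.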

Six: LVS load balancing mechanism

- LVS is layer-4 load balancing: it works at the fourth layer of the OSI model, the transport layer, where TCP and UDP live, so LVS supports load balancing of TCP and UDP traffic.

- Because LVS balances at layer 4, it is very efficient compared with higher-level load balancing solutions such as DNS round-robin resolution, application-layer load scheduling, and client-side scheduling.

Seven: Lab example

1. Experimental topology

![Experimental topology](https://img-blog.csdnimg.cn/20200116153707794.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

2. Experimental environment

1 CentOS 7 host as the LVS gateway (add a network card)

2 CentOS 7 hosts as web servers (web1, web2)

1 CentOS 7 host as the NFS shared storage server (add two hard disks)

1 Win7 host as the client

![Experimental environment](https://img-blog.csdnimg.cn/2020011615372147.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

3. Experimental goals

The Win7 client accesses the website at 12.0.0.1; through NAT address translation, its requests are served by web1 and web2 in round-robin fashion;

Build an NFS network file storage service.

4. Experimental procedure

(1) Add two hard disks to the NFS storage server and reboot after adding them. Run ls /dev/ to check that the disks were added successfully

Partition and format the two disks:

[root@nfs ~]# fdisk /dev/sdb     # partition disk sdb

[root@nfs ~]# mkfs.xfs /dev/sdb1     # format

[root@nfs ~]# fdisk /dev/sdc     # partition disk sdc

[root@nfs ~]# mkfs.xfs /dev/sdc1

![Screenshot of partitioning and formatting](https://img-blog.csdnimg.cn/20200116153754312.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

(2) Create directories as mount points and mount the disks

[root@nfs ~]# mkdir /opt/kg /opt/ac

[root@nfs ~]# vim /etc/fstab     # add the automatic mount settings

# add these 2 lines

/dev/sdb1 /opt/kg xfs defaults 0 0

/dev/sdc1 /opt/ac xfs defaults 0 0

[root@nfs ~]# mount -a

[root@nfs ~]# df -hT

![df -hT output](https://img-blog.csdnimg.cn/20200116153809154.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

(3) Turn off the firewall and check whether the NFS packages are installed

[root@nfs ~]# systemctl stop firewalld.service

[root@nfs ~]# setenforce 0

[root@nfs ~]# rpm -q nfs-utils     # the NFS component is installed

nfs-utils-1.3.0-0.48.el7.x86_64

[root@nfs ~]# rpm -q rpcbind     # the remote procedure call component is installed

rpcbind-0.2.0-42.el7.x86_64

(4) Edit the exports configuration file to set the sharing rules

[root@nfs ~]# vim /etc/exports

# 192.168.100.0/24 is the network allowed to access the shares

/opt/kg 192.168.100.0/24(rw,sync,no_root_squash)

/opt/ac 192.168.100.0/24(rw,sync,no_root_squash)

(5) Start the services and view the NFS shares

[root@nfs ~]# systemctl start nfs

[root@nfs ~]# systemctl start rpcbind

[root@nfs ~]# showmount -e

Export list for nfs:

/opt/ac 192.168.100.0/24

/opt/kg 192.168.100.0/24

(6) Set the network card to host-only mode and change the IP address

![Host-only network adapter setting](https://img-blog.csdnimg.cn/20200116153823865.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

[root@nfs ~]# vim /etc/sysconfig/network-scripts/ifcfg-ens33     # change the IP address

![ifcfg-ens33 settings](https://img-blog.csdnimg.cn/20200116153843153.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

[root@nfs ~]# service network restart     # restart the network service

Restarting network (via systemctl): [ OK ]

[root@nfs ~]# ifconfig     # check the IP address

![ifconfig output](https://img-blog.csdnimg.cn/20200116153849514.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

Web server configuration (both servers are configured the same way)

(1) Install the Apache service and turn off the firewall

[root@web1 ~]# yum install httpd -y

[root@web1 ~]# systemctl stop firewalld.service

[root@web1 ~]# setenforce 0

(2) Set the network card to host-only mode and change the IP address

[root@web1 ~]# vim /etc/sysconfig/network-scripts/ifcfg-ens33

[root@web1 ~]# service network restart

![web1 network settings](https://img-blog.csdnimg.cn/20200116153911961.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

[root@web2 ~]# vim /etc/sysconfig/network-scripts/ifcfg-ens33

[root@web2 ~]# service network restart

![web2 network settings](https://img-blog.csdnimg.cn/20200116153920983.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)
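The interface settings themselves appear only in the screenshots. As a rough sketch, `ifcfg-ens33` on web1 might look like the following. The address 192.168.100.110 is the one the LVS rules point at later in this article; the gateway 192.168.100.1 is an assumption standing in for the LVS host's internal address, which the text does not state. In NAT mode the real servers need the LVS host as their default gateway so that replies travel back through it.

```shell
# /etc/sysconfig/network-scripts/ifcfg-ens33 on web1 (sketch)
# web2 would use IPADDR=192.168.100.111 with the same remaining settings.
TYPE=Ethernet
BOOTPROTO=static
NAME=ens33
DEVICE=ens33
ONBOOT=yes
IPADDR=192.168.100.110
NETMASK=255.255.255.0
# Assumption: 192.168.100.1 is the internal address of the LVS gateway.
GATEWAY=192.168.100.1
```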

(3) Verify that the NFS service is reachable

[root@web1 ~]# showmount -e 192.168.100.120

Export list for 192.168.100.120:

/opt/ac 192.168.100.0/24

/opt/kg 192.168.100.0/24

Run this check on both servers.

(4) Mount the NFS shares locally and automatically

[root@web1 ~]# vim /etc/fstab

# append the mount setting at the end

192.168.100.120:/opt/kg /var/www/html nfs defaults,_netdev 0 0

[root@web2 ~]# vim /etc/fstab

192.168.100.120:/opt/ac /var/www/html nfs defaults,_netdev 0 0

[root@web1 ~]# mount -a     # apply the mounts listed in fstab

[root@web1 ~]# df -hT     # check the mounts

![df -hT output](https://img-blog.csdnimg.cn/20200116153949198.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

(5) Go into the site root on each web server and write a home page

[root@web1 ~]# cd /var/www/html/

[root@web1 html]# vim index.html

# add the web1 home page content

<h1>this is kg web</h1>

[root@web1 html]# systemctl start httpd     # start the service

[root@web1 html]# netstat -ntap | grep 80

tcp6 0 0 :::80 :::* LISTEN 6765/httpd

[root@web2 ~]# cd /var/www/html/

[root@web2 html]# vim index.html

# add the web2 home page content

<h1>this is ac web</h1>

[root@web2 html]# systemctl start httpd     # start the service

[root@web2 html]# netstat -ntap | grep 80

tcp6 0 0 :::80 :::* LISTEN 4815/httpd

LVS load balancing configuration

(1) Install the ipvsadm service

[root@lvs ~]# yum install ipvsadm -y

(2) Add a network card and set both cards to host-only mode

![Network adapter settings](https://img-blog.csdnimg.cn/20200116154003967.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

(3) Change the IP addresses

[root@lvs ~]# cd /etc/sysconfig/network-scripts/

[root@lvs network-scripts]# cp -p ifcfg-ens33 ifcfg-ens36     # copy the file for the second card, ens36

[root@lvs network-scripts]# ls

ifcfg-ens33 ifdown-post ifup-eth ifup-sit

ifcfg-ens36 ......

[root@lvs network-scripts]# vim ifcfg-ens33

![ifcfg-ens33 settings](https://img-blog.csdnimg.cn/20200116154018367.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

[root@lvs network-scripts]# vim ifcfg-ens36

![ifcfg-ens36 settings](https://img-blog.csdnimg.cn/20200116154024527.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

[root@lvs network-scripts]# service network restart

![Screenshot after restarting the network](https://img-blog.csdnimg.cn/20200116154043669.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L1h1TWluNg==,size_16,color_FFFFFF,t_70)

(4) Verify the web servers

![Web server verification](https://img-blog.csdnimg.cn/20200116154103466.png)

(5) Enable routing forwarding and set the firewall rules

[root@lvs network-scripts]# vim /etc/sysctl.conf

# append this line at the end to enable IP forwarding

net.ipv4.ip_forward=1

[root@lvs ~]# sysctl -p     # apply the setting

[root@lvs network-scripts]# iptables -F     # flush the filter table

[root@lvs network-scripts]# iptables -t nat -F     # flush the NAT table

[root@lvs network-scripts]# iptables -t nat -A POSTROUTING -o ens36 -s 192.168.100.0/24 -j SNAT --to-source 12.0.0.1     # add the address translation rule
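Before moving on, it can help to confirm that forwarding really is switched on. The helper below is a small sketch, not part of the original procedure: `forwarding_enabled` is a made-up name, and it simply reads the kernel's forwarding switch under /proc (or another file passed as an argument).

```shell
#!/bin/sh
# forwarding_enabled [FILE] succeeds when FILE holds the value 1.
# FILE defaults to the kernel's IPv4 forwarding switch.
forwarding_enabled() {
    state_file=${1:-/proc/sys/net/ipv4/ip_forward}
    [ "$(cat "$state_file" 2>/dev/null)" = "1" ]
}

if forwarding_enabled; then
    echo "IPv4 forwarding is on"
else
    echo "IPv4 forwarding is off (or not readable on this system)"
fi
```

The SNAT rule itself can be listed with `iptables -t nat -nL POSTROUTING`.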

(6) Load the module and start the ipvsadm service

[root@lvs ~]# cd /etc/sysconfig/network-scripts/

[root@lvs network-scripts]# modprobe ip_vs     # load the module

[root@lvs network-scripts]# ipvsadm --save > /etc/sysconfig/ipvsadm

[root@lvs network-scripts]# systemctl start ipvsadm

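(7) Write a script that sets the LVS rules, and make it executable

[root@lvs network-scripts]# cd /opt/

[root@lvs opt]# vim nat.sh

# add the script; it uses the round-robin algorithm to reach the two sites

#!/bin/bash

# clear any existing rules
ipvsadm -C

# virtual service on the VIP, round-robin scheduling
ipvsadm -A -t 12.0.0.1:80 -s rr

# map the two web servers; -m selects NAT (masquerading) mode
ipvsadm -a -t 12.0.0.1:80 -r 192.168.100.110:80 -m

ipvsadm -a -t 12.0.0.1:80 -r 192.168.100.111:80 -m

# print the resulting rule table
ipvsadm

[root@lvs opt]# chmod +x nat.sh     # make nat.sh executable

[root@lvs opt]# ./nat.sh     # run the script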

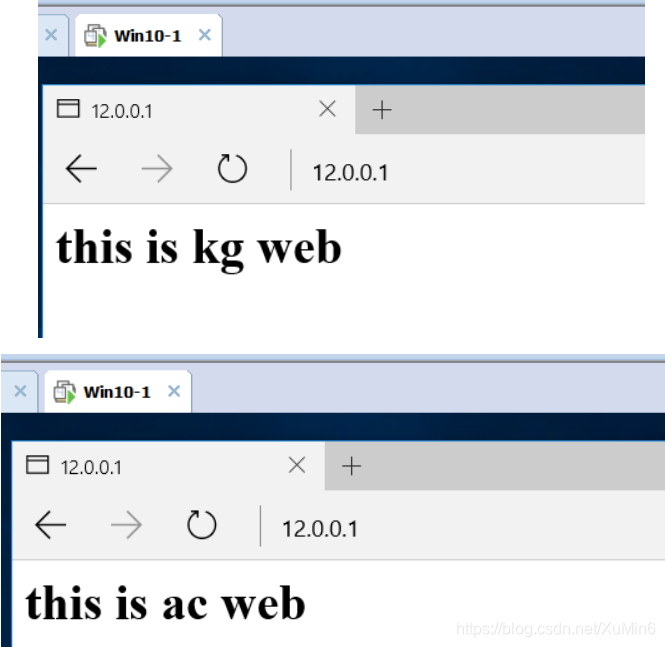
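If more real servers are added later, the rules can be generated from a list instead of being written out by hand. The following is a dry-run sketch under that assumption: `build_rules` is a hypothetical helper that only prints the same `ipvsadm` commands used in nat.sh, so it is safe to run anywhere.

```shell
#!/bin/sh
# Dry-run generator for the NAT-mode rules in nat.sh: it only prints commands.
VIP="12.0.0.1:80"
REAL_SERVERS="192.168.100.110:80 192.168.100.111:80"
SCHEDULER="rr"

build_rules() {
    echo "ipvsadm -C"
    echo "ipvsadm -A -t $VIP -s $SCHEDULER"
    # One real-server entry per address; -m selects NAT (masquerading) mode.
    for rs in $REAL_SERVERS; do
        echo "ipvsadm -a -t $VIP -r $rs -m"
    done
}

build_rules
```

To apply the rules, the output could be piped to a shell as root on the LVS host, for example `build_rules | sh`.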

(8) Verify from the Win7 client

The client on the external network reaches the internal web servers through the gateway's address mapping. The pages are served in round-robin fashion: one visit shows web1's page and the next shows web2's page, which effectively relieves the pressure on a single web server. (If the page does not change between visits, clear the browser cache.)
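The same check can be scripted instead of refreshing a browser. The sketch below assumes a client that has a shell; `count_backends` is a made-up helper that counts how many distinct pages came back, and the sample input simply replays the two home pages written earlier.

```shell
#!/bin/sh
# count_backends reads one response body per line on stdin and prints
# the number of distinct bodies, i.e. how many different back ends answered.
count_backends() {
    sort -u | wc -l | tr -d ' '
}

# Sample input: the two home pages from the lab, as four alternating replies.
printf '%s\n' \
    '<h1>this is kg web</h1>' \
    '<h1>this is ac web</h1>' \
    '<h1>this is kg web</h1>' \
    '<h1>this is ac web</h1>' | count_backends
```

Against the live cluster, and assuming `curl` is installed on a client that can reach 12.0.0.1, the input could come from `for i in 1 2 3 4; do curl -s http://12.0.0.1/; done | count_backends`; a result of 2 means both web servers answered.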