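As everyone knows, ffmpeg is a powerful transcoding program, but it has so many features that reading ffmpeg.c is a real headache. Often all we want is to see how mp4 is converted to ts, or avi to mkv.

Fortunately, the ffmpeg source tree ships sample code: a simplified transcoding program that is much easier to read.

Location: ffmpeg/doc/examples/transcoding.c.

I pulled this code out, modified it slightly, and compiled it as a standalone program. It works well.

To help more people understand it, I decided to write this post and walk through the transcoding program.

1 Source code with detailed comments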

#include <libavcodec/avcodec.h>

#include <libavformat/avformat.h>

#include <libavfilter/avfiltergraph.h>

#include <libavfilter/buffersink.h>

#include <libavfilter/buffersrc.h>

#include <libavutil/opt.h>

#include <libavutil/pixdesc.h>

static AVFormatContext *ifmt_ctx; // DEMUX: e.g. splits input.mp4 into audio and video ES data

static AVFormatContext *ofmt_ctx; // MUX: e.g. packs the encoded audio/video ES data into output.ts

typedef struct FilteringContext {

AVFilterContext *buffersink_ctx;

AVFilterContext *buffersrc_ctx;

AVFilterGraph *filter_graph;

} FilteringContext;

static FilteringContext *filter_ctx;

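// Open and parse the source file to be transcoded (e.g. input.mp4) and open a decoder for each stream

// For example, if the source is input.mp4 (H264+AAC), there are 2 streams and 2 decoders: an H264 decoder and an AAC decoder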

static int open_input_file(const char *filename)

{

int ret;

unsigned int i;

ifmt_ctx = NULL;

if ((ret = avformat_open_input(&ifmt_ctx, filename, NULL, NULL)) < 0) {

printf("Cannot open input file\n");

return ret;

}

if ((ret = avformat_find_stream_info(ifmt_ctx, NULL)) < 0) {

printf("Cannot find stream information\n");

return ret;

}

// Typically there are 2 streams: one video, one audio

for (i = 0; i < ifmt_ctx->nb_streams; i++) {

AVStream *stream;

AVCodecContext *codec_ctx;

stream = ifmt_ctx->streams[i];

codec_ctx = stream->codec;

/* Reencode video & audio and remux subtitles etc. */

if (codec_ctx->codec_type == AVMEDIA_TYPE_VIDEO

|| codec_ctx->codec_type == AVMEDIA_TYPE_AUDIO) {

/* Open decoder */

// Find a suitable decoder for the stream, e.g. an H264 decoder for the video stream and an AAC decoder for the audio stream

ret = avcodec_open2(codec_ctx,

avcodec_find_decoder(codec_ctx->codec_id), NULL);

if (ret < 0) {

printf("Failed to open decoder for stream #%u\n", i);

return ret;

}

}

}

av_dump_format(ifmt_ctx, 0, filename, 0);

return 0;

}

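// Open the output file; for example, if the goal is to transcode to a TS file, this is output.ts

// Also set up the matching encoders. In this example the encoder codecs are the same as the decoder codecs, i.e. output.ts keeps the codecs of input.mp4 (H264+AAC)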

static int open_output_file(const char *filename)

{

AVStream *out_stream;

AVStream *in_stream;

AVCodecContext *dec_ctx, *enc_ctx;

AVCodec *encoder;

int ret;

unsigned int i;

ofmt_ctx = NULL;

avformat_alloc_output_context2(&ofmt_ctx, NULL, NULL, filename);

if (!ofmt_ctx) {

printf("Could not create output context\n");

return AVERROR_UNKNOWN;

}

for (i = 0; i < ifmt_ctx->nb_streams; i++) {

out_stream = avformat_new_stream(ofmt_ctx, NULL);

if (!out_stream) {

printf("Failed allocating output stream\n");

return AVERROR_UNKNOWN;

}

in_stream = ifmt_ctx->streams[i];

dec_ctx = in_stream->codec;

enc_ctx = out_stream->codec;

if (dec_ctx->codec_type == AVMEDIA_TYPE_VIDEO

|| dec_ctx->codec_type == AVMEDIA_TYPE_AUDIO) {

// in this example, we choose transcoding to same codec

// If the input is input.mp4 (H264+AAC), the output is still H264+AAC; only the container changes to ts, and the output file is output.ts

encoder = avcodec_find_encoder(dec_ctx->codec_id);

if (!encoder) {

av_log(NULL, AV_LOG_FATAL, "Necessary encoder not found\n");

return AVERROR_INVALIDDATA;

}

/* In this example, we transcode to same properties (picture size,

* sample rate etc.). These properties can be changed for output

* streams easily using filters */

if (dec_ctx->codec_type == AVMEDIA_TYPE_VIDEO) {

enc_ctx->height = dec_ctx->height;

enc_ctx->width = dec_ctx->width;

enc_ctx->sample_aspect_ratio = dec_ctx->sample_aspect_ratio;

/* take first format from list of supported formats */

enc_ctx->pix_fmt = encoder->pix_fmts[0];

/* video time_base can be set to whatever is handy and supported by encoder */

enc_ctx->time_base = dec_ctx->time_base;

} else {

enc_ctx->sample_rate = dec_ctx->sample_rate;

enc_ctx->channel_layout = dec_ctx->channel_layout;

enc_ctx->channels = av_get_channel_layout_nb_channels(enc_ctx->channel_layout);

/* take first format from list of supported formats */

enc_ctx->sample_fmt = encoder->sample_fmts[0];

enc_ctx->time_base = (AVRational){1, enc_ctx->sample_rate};

}

/* Third parameter can be used to pass settings to encoder */

ret = avcodec_open2(enc_ctx, encoder, NULL);

if (ret < 0) {

printf("Cannot open video encoder for stream #%u\n", i);

return ret;

}

} else if (dec_ctx->codec_type == AVMEDIA_TYPE_UNKNOWN) {

av_log(NULL, AV_LOG_FATAL, "Elementary stream #%d is of unknown type, cannot proceed\n", i);

return AVERROR_INVALIDDATA;

} else {

/* if this stream must be remuxed */

ret = avcodec_copy_context(ofmt_ctx->streams[i]->codec,

ifmt_ctx->streams[i]->codec);

if (ret < 0) {

printf("Copying stream context failed\n");

return ret;

}

}

if (ofmt_ctx->oformat->flags & AVFMT_GLOBALHEADER)

enc_ctx->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

}

av_dump_format(ofmt_ctx, 0, filename, 1);

if (!(ofmt_ctx->oformat->flags & AVFMT_NOFILE)) {

ret = avio_open(&ofmt_ctx->pb, filename, AVIO_FLAG_WRITE);

if (ret < 0) {

printf("Could not open output file '%s'", filename);

return ret;

}

}

/* init muxer, write output file header */

ret = avformat_write_header(ofmt_ctx, NULL);

if (ret < 0) {

printf("Error occurred when opening output file\n");

return ret;

}

return 0;

}

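// Don't be afraid of filters; see http://blog.csdn.net/newchenxf/article/details/51364105

// For this example you can ignore the details and treat the filter as a FIFO: buffersrc is the input, buffersink is the output, and the buffer merely passes through.

// You could also skip the filter and hand the buffer straight from the decoder to the encoder. The drawback is that the buffer can then no longer be modified, whereas with a filter you can still process it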

static int init_filter(FilteringContext* fctx, AVCodecContext *dec_ctx,

AVCodecContext *enc_ctx, const char *filter_spec)

{

char args[512];

int ret = 0;

AVFilter *buffersrc = NULL;

AVFilter *buffersink = NULL;

AVFilterContext *buffersrc_ctx = NULL;

AVFilterContext *buffersink_ctx = NULL;

AVFilterInOut *outputs = avfilter_inout_alloc();

AVFilterInOut *inputs = avfilter_inout_alloc();

AVFilterGraph *filter_graph = avfilter_graph_alloc();

if (!outputs || !inputs || !filter_graph) {

ret = AVERROR(ENOMEM);

goto end;

}

if (dec_ctx->codec_type == AVMEDIA_TYPE_VIDEO) {

buffersrc = avfilter_get_by_name("buffer");

buffersink = avfilter_get_by_name("buffersink");

if (!buffersrc || !buffersink) {

printf("filtering source or sink element not found\n");

ret = AVERROR_UNKNOWN;

goto end;

}

snprintf(args, sizeof(args),

"video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",

dec_ctx->width, dec_ctx->height, dec_ctx->pix_fmt,

dec_ctx->time_base.num, dec_ctx->time_base.den,

dec_ctx->sample_aspect_ratio.num,

dec_ctx->sample_aspect_ratio.den);

ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in",

args, NULL, filter_graph);

if (ret < 0) {

printf("Cannot create buffer source\n");

goto end;

}

ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out",

NULL, NULL, filter_graph);

if (ret < 0) {

printf("Cannot create buffer sink\n");

goto end;

}

ret = av_opt_set_bin(buffersink_ctx, "pix_fmts",

(uint8_t*)&enc_ctx->pix_fmt, sizeof(enc_ctx->pix_fmt),

AV_OPT_SEARCH_CHILDREN);

if (ret < 0) {

printf("Cannot set output pixel format\n");

goto end;

}

} else if (dec_ctx->codec_type == AVMEDIA_TYPE_AUDIO) {

buffersrc = avfilter_get_by_name("abuffer");

buffersink = avfilter_get_by_name("abuffersink");

if (!buffersrc || !buffersink) {

printf("filtering source or sink element not found\n");

ret = AVERROR_UNKNOWN;

goto end;

}

if (!dec_ctx->channel_layout)

dec_ctx->channel_layout =

av_get_default_channel_layout(dec_ctx->channels);

snprintf(args, sizeof(args),

"time_base=%d/%d:sample_rate=%d:sample_fmt=%s:channel_layout=0x%"PRIx64,

dec_ctx->time_base.num, dec_ctx->time_base.den, dec_ctx->sample_rate,

av_get_sample_fmt_name(dec_ctx->sample_fmt),

dec_ctx->channel_layout);

ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in",

args, NULL, filter_graph);

if (ret < 0) {

printf("Cannot create audio buffer source\n");

goto end;

}

ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out",

NULL, NULL, filter_graph);

if (ret < 0) {

printf("Cannot create audio buffer sink\n");

goto end;

}

ret = av_opt_set_bin(buffersink_ctx, "sample_fmts",

(uint8_t*)&enc_ctx->sample_fmt, sizeof(enc_ctx->sample_fmt),

AV_OPT_SEARCH_CHILDREN);

if (ret < 0) {

printf("Cannot set output sample format\n");

goto end;

}

ret = av_opt_set_bin(buffersink_ctx, "channel_layouts",

(uint8_t*)&enc_ctx->channel_layout,

sizeof(enc_ctx->channel_layout), AV_OPT_SEARCH_CHILDREN);

if (ret < 0) {

printf("Cannot set output channel layout\n");

goto end;

}

ret = av_opt_set_bin(buffersink_ctx, "sample_rates",

(uint8_t*)&enc_ctx->sample_rate, sizeof(enc_ctx->sample_rate),

AV_OPT_SEARCH_CHILDREN);

if (ret < 0) {

printf("Cannot set output sample rate\n");

goto end;

}

} else {

ret = AVERROR_UNKNOWN;

goto end;

}

/* Endpoints for the filter graph. */

outputs->name = av_strdup("in");

outputs->filter_ctx = buffersrc_ctx;

outputs->pad_idx = 0;

outputs->next = NULL;

inputs->name = av_strdup("out");

inputs->filter_ctx = buffersink_ctx;

inputs->pad_idx = 0;

inputs->next = NULL;

if (!outputs->name || !inputs->name) {

ret = AVERROR(ENOMEM);

goto end;

}

if ((ret = avfilter_graph_parse_ptr(filter_graph, filter_spec,

&inputs, &outputs, NULL)) < 0)

goto end;

if ((ret = avfilter_graph_config(filter_graph, NULL)) < 0)

goto end;

/* Fill FilteringContext */

fctx->buffersrc_ctx = buffersrc_ctx;

fctx->buffersink_ctx = buffersink_ctx;

fctx->filter_graph = filter_graph;

end:

avfilter_inout_free(&inputs);

avfilter_inout_free(&outputs);

return ret;

}

static int init_filters(void)

{

const char *filter_spec;

unsigned int i;

int ret;

filter_ctx = av_malloc_array(ifmt_ctx->nb_streams, sizeof(*filter_ctx));

if (!filter_ctx)

return AVERROR(ENOMEM);

for (i = 0; i < ifmt_ctx->nb_streams; i++) {

filter_ctx[i].buffersrc_ctx = NULL;

filter_ctx[i].buffersink_ctx = NULL;

filter_ctx[i].filter_graph = NULL;

if (!(ifmt_ctx->streams[i]->codec->codec_type == AVMEDIA_TYPE_AUDIO

|| ifmt_ctx->streams[i]->codec->codec_type == AVMEDIA_TYPE_VIDEO))

continue;

if (ifmt_ctx->streams[i]->codec->codec_type == AVMEDIA_TYPE_VIDEO)

filter_spec = "null"; /* passthrough (dummy) filter for video */

else

filter_spec = "anull"; /* passthrough (dummy) filter for audio */

ret = init_filter(&filter_ctx[i], ifmt_ctx->streams[i]->codec,

ofmt_ctx->streams[i]->codec, filter_spec);

if (ret)

return ret;

}

return 0;

}

static void dumptimebase(const char* flag, int stream_index, AVRational timebase) {

printf("%s[%d]: timebase: %d/%d \n", flag, stream_index, timebase.num, timebase.den);

}

static int encode_write_frame(AVFrame *filt_frame, unsigned int stream_index, int *got_frame) {

int ret;

int got_frame_local;

AVPacket enc_pkt;

// Function pointer: selects the video or the audio encode function

int (*enc_func)(AVCodecContext *, AVPacket *, const AVFrame *, int *) =

(ifmt_ctx->streams[stream_index]->codec->codec_type ==

AVMEDIA_TYPE_VIDEO) ? avcodec_encode_video2 : avcodec_encode_audio2;

if (!got_frame)

got_frame = &got_frame_local;

printf("Encoding frame\n");

/* encode filtered frame */

enc_pkt.data = NULL;

enc_pkt.size = 0;

av_init_packet(&enc_pkt);

ret = enc_func(ofmt_ctx->streams[stream_index]->codec, &enc_pkt,

filt_frame, got_frame);

av_frame_free(&filt_frame);

if (ret < 0)

return ret;

if (!(*got_frame))

return 0;

// Encoding is done; before muxing, the timestamps must be rescaled from the encoder time_base to the output stream time_base

enc_pkt.stream_index = stream_index;

printf("stream[%u]: finish encoding, enc_pkt.pts = %" PRId64 "\n", stream_index, enc_pkt.pts);

dumptimebase("output streams", stream_index, ofmt_ctx->streams[stream_index]->time_base);

dumptimebase("output streams codec ", stream_index, ofmt_ctx->streams[stream_index]->codec->time_base);

av_packet_rescale_ts(&enc_pkt,

ofmt_ctx->streams[stream_index]->codec->time_base,

ofmt_ctx->streams[stream_index]->time_base);

printf("stream[%u], after scale, now enc_pkt.pts = %" PRId64 "\n", stream_index, enc_pkt.pts);

printf("Muxing frame\n");

/* mux encoded frame */

ret = av_interleaved_write_frame(ofmt_ctx, &enc_pkt);

return ret;

}

static int filter_encode_write_frame(AVFrame *frame, unsigned int stream_index)

{

int ret;

AVFrame *filt_frame;

printf("Pushing decoded frame to filters\n");

// push the decoded frame into the filtergraph

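// If you don't know filters, don't worry: think of it as a FIFO where buffersrc is the input and buffersink is the output

// All this does is push the buffer into the FIFO now and pull the data back out when it is time to encode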

ret = av_buffersrc_add_frame_flags(filter_ctx[stream_index].buffersrc_ctx,

frame, 0);

if (ret < 0) {

printf("Error while feeding the filtergraph\n");

return ret;

}

/* pull filtered frames from the filtergraph */

while (1) {

filt_frame = av_frame_alloc();

if (!filt_frame) {

ret = AVERROR(ENOMEM);

break;

}

printf("Pulling filtered frame from filters\n");

// As described above, think of this as taking data out of the FIFO

ret = av_buffersink_get_frame(filter_ctx[stream_index].buffersink_ctx,

filt_frame);

if (ret < 0) {

/* if no more frames for output - returns AVERROR(EAGAIN)

* if flushed and no more frames for output - returns AVERROR_EOF

* rewrite retcode to 0 to show it as normal procedure completion

*/

if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF)

ret = 0;

av_frame_free(&filt_frame);

break;

}

filt_frame->pict_type = AV_PICTURE_TYPE_NONE;

// The pass through the FIFO is done; now the real encoding begins

ret = encode_write_frame(filt_frame, stream_index, NULL);

if (ret < 0)

break;

}

return ret;

}

static int flush_encoder(unsigned int stream_index)

{

int ret;

int got_frame;

if (!(ofmt_ctx->streams[stream_index]->codec->codec->capabilities &

AV_CODEC_CAP_DELAY))

return 0;

while (1) {

av_log(NULL, AV_LOG_INFO, "Flushing stream #%u encoder\n", stream_index);

ret = encode_write_frame(NULL, stream_index, &got_frame);

if (ret < 0)

break;

if (!got_frame)

return 0;

}

return ret;

}

int main(int argc, char **argv)

{

int ret;

AVPacket packet = { .data = NULL, .size = 0 };

AVFrame *frame = NULL;

enum AVMediaType type;

unsigned int stream_index;

unsigned int i;

int got_frame;

int (*dec_func)(AVCodecContext *, AVFrame *, int *, const AVPacket *);

if (argc != 3) {

printf("Usage: %s <input file> <output file>\n", argv[0]);

return 1;

}

// Any ffmpeg program built against this API version has to call av_register_all() first

av_register_all();

avfilter_register_all();

if ((ret = open_input_file(argv[1])) < 0)

goto end;

if ((ret = open_output_file(argv[2])) < 0)

goto end;

if ((ret = init_filters()) < 0)

goto end;

/* read all packets */

while (1) {

// Read one frame of ES data from the input file; it is either audio or video

if ((ret = av_read_frame(ifmt_ctx, &packet)) < 0)

break;

// stream_index tells which stream this frame belongs to (video or audio); typically stream_index=0 for video and stream_index=1 for audio

stream_index = packet.stream_index;

type = ifmt_ctx->streams[packet.stream_index]->codec->codec_type;

//printf("Demuxer gave frame of stream_index %u\n", stream_index);

if (filter_ctx[stream_index].filter_graph) {

printf("Going to reencode&filter the frame\n");

// First allocate an empty frame to hold the decoded data

frame = av_frame_alloc();

if (!frame) {

ret = AVERROR(ENOMEM);

break;

}

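// packet.pts is expressed in the input stream (container) time_base, e.g. "1/90000" for a TS stream. Since we are about to decode, rescale it to the decoder time_base, which is typically "1/FPS"

printf("stream[%u]: before scale, packet pts %" PRId64 "\n", stream_index, packet.pts);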

av_packet_rescale_ts(&packet,

ifmt_ctx->streams[stream_index]->time_base,

ifmt_ctx->streams[stream_index]->codec->time_base);

dumptimebase("streams", stream_index, ifmt_ctx->streams[stream_index]->time_base);

dumptimebase("streams codec", stream_index, ifmt_ctx->streams[stream_index]->codec->time_base);

printf("stream[%u]: after scale, packet pts %" PRId64 "\n", stream_index, packet.pts);

// Function pointer: decodes audio or video depending on the stream type

dec_func = (type == AVMEDIA_TYPE_VIDEO) ? avcodec_decode_video2 :

avcodec_decode_audio4;

ret = dec_func(ifmt_ctx->streams[stream_index]->codec, frame,

&got_frame, &packet);

if (ret < 0) {

av_frame_free(&frame);

printf("Decoding failed\n");

break;

}

// Decoding succeeded; get ready to encode

if (got_frame) {

// At this point the decoded frame's pts is usually AV_NOPTS_VALUE, i.e. the minimum 64-bit value -9223372036854775808 (0x8000000000000000)

printf("stream_index %u: decode a frame: frame->pts %" PRId64 ", %" PRId64 " \n", stream_index, frame->pts, AV_NOPTS_VALUE);

// So we call av_frame_get_best_effort_timestamp, which returns the packet.pts from above

// av_frame_get_best_effort_timestamp is generated by a macro, so searching the ffmpeg source for its name finds no definition.

// The macro is defined in ffmpeg-2.7.1\libavutil\internal.h

// type av_##name##_get_##field(const str *s) { return s->field; }

// void av_##name##_set_##field(str *s, type v) { s->field = v; }

// The set function is called during the decode step above, which is why get can return packet.pts

frame->pts = av_frame_get_best_effort_timestamp(frame);

printf("stream index %u: av_frame_get_best_effort_timestamp, frame->pts %" PRId64 "\n", stream_index, frame->pts);

// Encode the frame and write it to the output file

ret = filter_encode_write_frame(frame, stream_index);

av_frame_free(&frame);

if (ret < 0)

goto end;

} else {

printf("didn't get frame? \n");

av_frame_free(&frame);

}

} else {

/* remux this frame without reencoding */

av_packet_rescale_ts(&packet,

ifmt_ctx->streams[stream_index]->time_base,

ofmt_ctx->streams[stream_index]->time_base);

ret = av_interleaved_write_frame(ofmt_ctx, &packet);

if (ret < 0)

goto end;

}

av_packet_unref(&packet);

}

/* flush filters and encoders */

for (i = 0; i < ifmt_ctx->nb_streams; i++) {

/* flush filter */

if (!filter_ctx[i].filter_graph)

continue;

ret = filter_encode_write_frame(NULL, i);

if (ret < 0) {

printf("Flushing filter failed\n");

goto end;

}

/* flush encoder */

ret = flush_encoder(i);

if (ret < 0) {

printf("Flushing encoder failed\n");

goto end;

}

}

// Write the trailer of the output file

av_write_trailer(ofmt_ctx);

end:

av_packet_unref(&packet);

av_frame_free(&frame);

for (i = 0; i < ifmt_ctx->nb_streams; i++) {

avcodec_close(ifmt_ctx->streams[i]->codec);

if (ofmt_ctx && ofmt_ctx->nb_streams > i && ofmt_ctx->streams[i] && ofmt_ctx->streams[i]->codec)

avcodec_close(ofmt_ctx->streams[i]->codec);

if (filter_ctx && filter_ctx[i].filter_graph)

avfilter_graph_free(&filter_ctx[i].filter_graph);

}

av_free(filter_ctx);

avformat_close_input(&ifmt_ctx);

if (ofmt_ctx && !(ofmt_ctx->oformat->flags & AVFMT_NOFILE))

avio_closep(&ofmt_ctx->pb);

avformat_free_context(ofmt_ctx);

if (ret < 0)

printf("Error occurred: %s\n", av_err2str(ret));

return ret ? 1 : 0;

}

2 详细解析

看了代码,可能你还意犹未尽。

那么,我先来说说代码的流程。

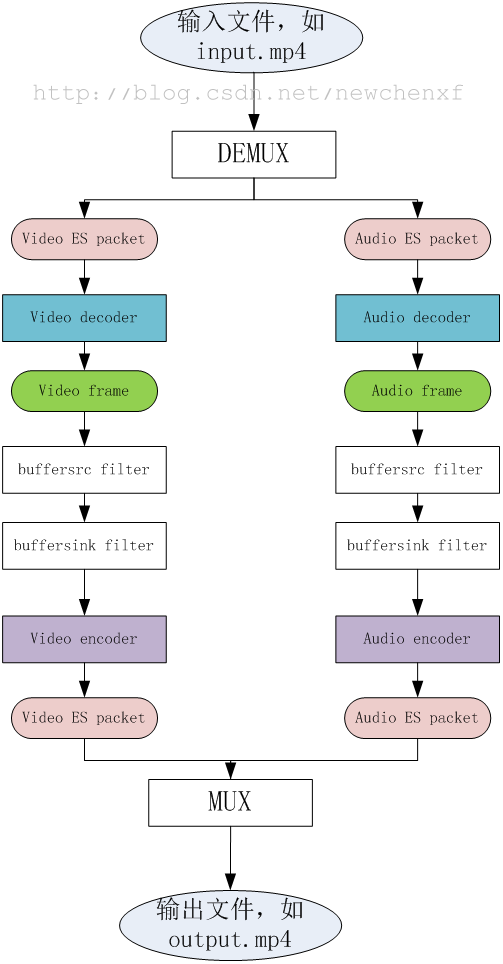

既然是转码,那整理流程就是,先把一个源文件,比如input.mp4,拆包(DEMUX),获得编码过的数据packet,然后把packet送给decoder解码,获得audio/video的原始数据frame,再把frame送给encoder编码,又变成编码过的数据packet,然后把packet打包(MUX)成另一种格式,比如output.ts。

程序流程图就不说了,代码量那么少,一看就知道。

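3 About PTS

The hardest part of this example to understand is the PTS calculation.

time_base

time_base is the time base, i.e. the unit in which timestamps are counted. Its value differs from stage to stage (from struct to struct), and ffmpeg provides av_packet_rescale_ts() to convert a packet's timestamps from one time_base to another.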
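To make the conversion concrete, here is a minimal sketch in plain C of the arithmetic behind rescaling a timestamp between two time bases. `Rational` and `rescale_ts` are hypothetical names written for this illustration, not FFmpeg API; FFmpeg's own av_rescale_q() does the same kind of conversion but also deals with rounding modes and overflow, which this sketch ignores.

```c
#include <stdint.h>

/* Hypothetical stand-in for AVRational, for illustration only. */
typedef struct Rational {
    int num; /* numerator */
    int den; /* denominator */
} Rational;

/* Convert ts from units of `src` to units of `dst`:
 *   ts * (src.num / src.den) / (dst.num / dst.den)
 * rounded to the nearest integer.
 * Assumes ts >= 0, positive num/den, and no 64-bit overflow. */
static int64_t rescale_ts(int64_t ts, Rational src, Rational dst)
{
    int64_t b = (int64_t)src.num * dst.den;
    int64_t c = (int64_t)src.den * dst.num;
    return (ts * b + c / 2) / c;
}
```

For example, a pts of 1 in a {1, 15} time base becomes 6000 in a {1, 90000} time base, because 1/15 s and 6000/90000 s are the same instant.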

time_base is used most often in two structs. One is AVStream.

//libavformat/avformat.h

typedef struct AVStream {

AVCodecContext *codec;

/**

* This is the fundamental unit of time (in seconds) in terms

* of which frame timestamps are represented.

*

* decoding: set by libavformat

* encoding: May be set by the caller before avformat_write_header() to

* provide a hint to the muxer about the desired timebase. In

* avformat_write_header(), the muxer will overwrite this field

* with the timebase that will actually be used for the timestamps

* written into the file (which may or may not be related to the

* user-provided one, depending on the format).

*/

AVRational time_base;

//......

} AVStream;

The other is AVCodecContext.

//libavcodec/avcodec.h

typedef struct AVCodecContext {

const AVClass *av_class;

enum AVMediaType codec_type; /* see AVMEDIA_TYPE_xxx */

const struct AVCodec *codec;

/**

* This is the fundamental unit of time (in seconds) in terms

* of which frame timestamps are represented. For fixed-fps content,

* timebase should be 1/framerate and timestamp increments should be

* identically 1.

* This often, but not always is the inverse of the frame rate or field rate

* for video. 1/time_base is not the average frame rate if the frame rate is not

* constant.

*

* Like containers, elementary streams also can store timestamps, 1/time_base

* is the unit in which these timestamps are specified.

* As example of such codec time base see ISO/IEC 14496-2:2001(E)

* vop_time_increment_resolution and fixed_vop_rate

* (fixed_vop_rate == 0 implies that it is different from the framerate)

*

* - encoding: MUST be set by user.

* - decoding: the use of this field for decoding is deprecated.

* Use framerate instead.

*/

AVRational time_base;

//......

} AVCodecContext;

AVRational itself is simple. Its definition is:

typedef struct AVRational{

int num; ///< numerator

int den; ///< denominator

} AVRational;

What does this mean?

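Take video as an example.

At the AVStream stage, i.e. the still-encoded data before decoding, suppose the file is an mp4 with FPS = 15 and

time_base={1, 15}. Then,

starting from the first frame, the PTS of successive ES packets is 1, 2, 3, ...

Why?

It's simple. For the first frame, packet.pts=1, so the actual time is packet.pts * time_base = 1/15 ≈ 0.0667s

Likewise for the second frame, packet.pts=2, so the actual time is 2/15 ≈ 0.1333s

At the 15th frame, packet.pts=15, so the actual time is 15/15 = 1s

which matches what we expect.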
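The arithmetic above can be written as a small helper. This is only a sketch in plain C: `TimeBase` and `pts_to_seconds` are hypothetical names made up for this illustration. In a real FFmpeg program the same value can be computed as pts * av_q2d(time_base).

```c
#include <stdint.h>

/* Hypothetical stand-in for AVRational, for illustration only. */
typedef struct TimeBase {
    int num; /* numerator */
    int den; /* denominator */
} TimeBase;

/* Real time in seconds = pts * num / den. */
static double pts_to_seconds(int64_t pts, TimeBase tb)
{
    return (double)pts * tb.num / tb.den;
}
```

With time_base = {1, 15}, pts_to_seconds(1, tb) is about 0.0667, pts_to_seconds(2, tb) is about 0.1333, and pts_to_seconds(15, tb) is exactly 1 second.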