Introduction

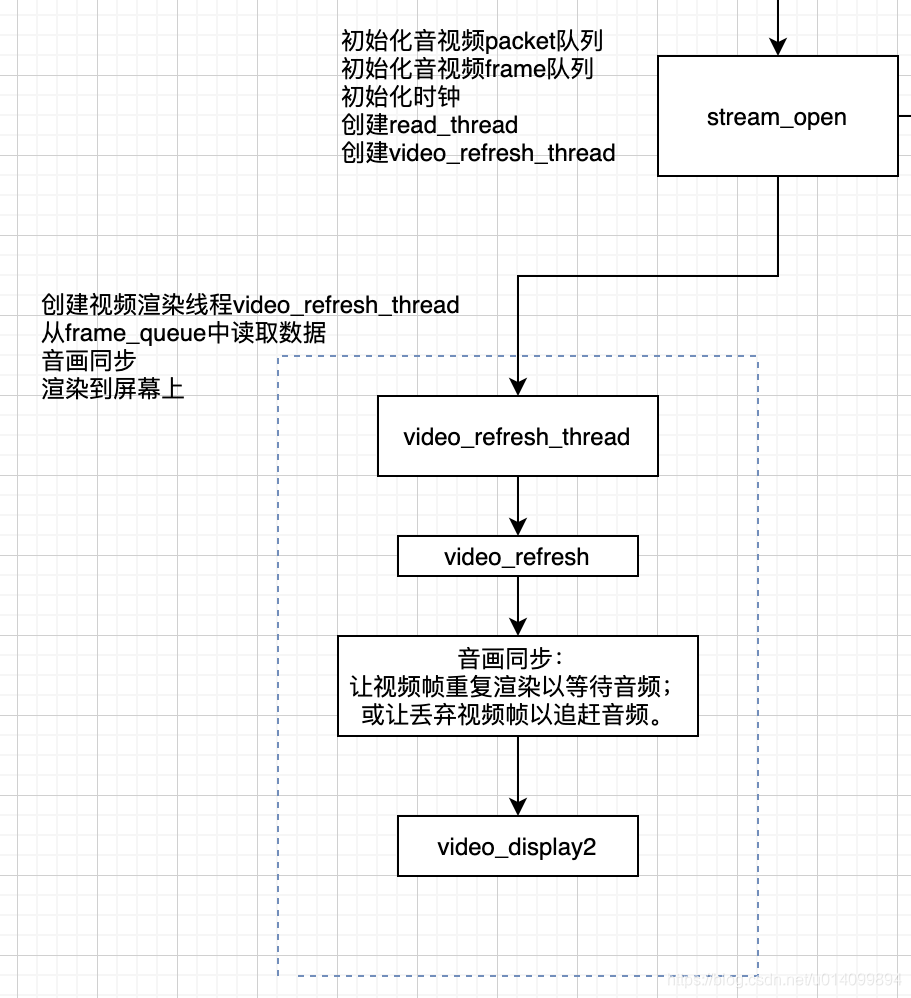

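This is the first article in a series of flow analyses. It walks through the video rendering flow in ijkPlayer, which runs in video_refresh_thread, as shown in the flowchart above.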

The SDL_Vout and SDL_VoutOverlay structs

SDL_Vout

SDL_Vout represents a display context. Think of it as a canvas (an ANativeWindow on Android); it controls how an overlay is displayed.

// ijksdl_vout.h
struct SDL_Vout {
    SDL_mutex *mutex;

    SDL_Class *opaque_class;
    SDL_Vout_Opaque *opaque;

    SDL_VoutOverlay *(*create_overlay)(int width, int height, int frame_format, SDL_Vout *vout);
    void (*free_l)(SDL_Vout *vout);
    int (*display_overlay)(SDL_Vout *vout, SDL_VoutOverlay *overlay);

    Uint32 overlay_format;
};

- Implementations:

ijksdl_vout_dummy.c

ijksdl_vout_android_nativewindow.c // ANativeWindow-based implementation for Android

SDL_VoutOverlay

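SDL_VoutOverlay represents a display layer. Think of it as a block of image data together with the information that describes how to display it.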

struct SDL_VoutOverlay {
    int w;            /**< Read-only */
    int h;            /**< Read-only */
    Uint32 format;    /**< Read-only */
    int planes;       /**< Read-only */
    Uint16 *pitches;  /**< in bytes, Read-only */
    Uint8 **pixels;   /**< Read-write */

    int is_private;

    int sar_num;
    int sar_den;

    SDL_Class *opaque_class;
    SDL_VoutOverlay_Opaque *opaque;

    void (*free_l)(SDL_VoutOverlay *overlay);
    int (*lock)(SDL_VoutOverlay *overlay);
    int (*unlock)(SDL_VoutOverlay *overlay);
    void (*unref)(SDL_VoutOverlay *overlay);

    int (*func_fill_frame)(SDL_VoutOverlay *overlay, const AVFrame *frame);
};

- Implementations:

ijksdl_vout_overlay_android_mediacodec.c

ijksdl_vout_overlay_ffmpeg.c
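Both structs follow the same C idiom: a struct of function pointers acts as an interface, and each backend file fills in its own functions. The following is a minimal sketch of that idiom, not ijkPlayer code. `MiniVout`, `MiniOverlay`, and every `mini_*` / `dummy_*` function are hypothetical names invented for illustration, and the "dummy" backend only counts display calls.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical, trimmed-down stand-ins for SDL_VoutOverlay / SDL_Vout. */
typedef struct MiniOverlay MiniOverlay;
typedef struct MiniVout MiniVout;

struct MiniOverlay {
    int w;
    int h;
    void (*free_l)(MiniOverlay *overlay);
};

struct MiniVout {
    int displayed; /* frames shown so far; demo-only bookkeeping */
    MiniOverlay *(*create_overlay)(int width, int height, MiniVout *vout);
    int (*display_overlay)(MiniVout *vout, MiniOverlay *overlay);
};

/* "Dummy" backend: allocates an overlay and only counts display calls. */
static void dummy_overlay_free(MiniOverlay *overlay) { free(overlay); }

static MiniOverlay *dummy_create_overlay(int width, int height, MiniVout *vout)
{
    MiniOverlay *overlay = calloc(1, sizeof(*overlay));
    (void)vout; /* a real backend would consult its opaque state here */
    if (!overlay)
        return NULL;
    overlay->w = width;
    overlay->h = height;
    overlay->free_l = dummy_overlay_free;
    return overlay;
}

static int dummy_display_overlay(MiniVout *vout, MiniOverlay *overlay)
{
    assert(vout && overlay); /* guaranteed by mini_vout_display() */
    vout->displayed++;
    return 0;
}

/* Backend-agnostic wrappers: callers never touch the function pointers. */
static MiniOverlay *mini_vout_create_overlay(MiniVout *vout, int w, int h)
{
    if (vout && vout->create_overlay)
        return vout->create_overlay(w, h, vout);
    return NULL;
}

static int mini_vout_display(MiniVout *vout, MiniOverlay *overlay)
{
    if (vout && overlay && vout->display_overlay)
        return vout->display_overlay(vout, overlay);
    return -1;
}

static void mini_overlay_free(MiniOverlay *overlay)
{
    if (overlay && overlay->free_l)
        overlay->free_l(overlay);
}

/* Factory for the dummy backend. */
static MiniVout mini_vout_dummy(void)
{
    MiniVout vout = {0, dummy_create_overlay, dummy_display_overlay};
    return vout;
}
```

A caller would obtain a `MiniVout` from `mini_vout_dummy()`, create an overlay through `mini_vout_create_overlay()`, and hand it to `mini_vout_display()`. Swapping in another backend only means providing a different factory function.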

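Call flow

1. Which function does the rendering, and what is the entry point?

After video_refresh finishes audio/video synchronization, it calls video_display2(), which in turn calls video_image_display2(). That function takes a frame from the frame_queue and renders it.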

static void video_image_display2(FFPlayer *ffp) {
    VideoState *is = ffp->is;
    Frame *vp;

    vp = frame_queue_peek_last(&is->pictq); // take a frame from the frame_queue
    if (vp->bmp) {
        SDL_VoutDisplayYUVOverlay(ffp->vout, vp->bmp); // the frame's bmp
    }
}

int SDL_VoutDisplayYUVOverlay(SDL_Vout *vout, SDL_VoutOverlay *overlay)
{
    if (vout && overlay && vout->display_overlay)
        return vout->display_overlay(vout, overlay);

    return -1;
}

2. How SDL_Vout gets assigned

ijkplayer_jni.c#IjkMediaPlayer_native_setup

-> ijkplayer_android.c#ijkmp_android_create

-> ijksdl_vout_android_surface.c#SDL_VoutAndroid_CreateForAndroidSurface

-> ijksdl_vout_android_nativewindow.c#SDL_VoutAndroid_CreateForANativeWindow // creates the SDL_Vout

IjkMediaPlayer *ijkmp_android_create(int(*msg_loop)(void*))
{
    IjkMediaPlayer *mp = ijkmp_create(msg_loop);
    // ...
    mp->ffplayer->vout = SDL_VoutAndroid_CreateForAndroidSurface();
    // ...
}

Later, setSurface() creates an ANativeWindow and stores it in SDL_Vout_Opaque.
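Because the surface can be replaced while playback is running, storing the window usually means swapping a reference under a lock. The sketch below illustrates that idea only. `FakeWindow` is a hypothetical ref-counted stand-in for ANativeWindow, `MiniVout` and the `mini_vout_*` functions are invented names, and the assumption that the swap is guarded by the vout mutex is mine rather than something shown in this article.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

/* Hypothetical ref-counted window standing in for ANativeWindow. */
typedef struct FakeWindow {
    int refs;
} FakeWindow;

static void fake_window_acquire(FakeWindow *w) { w->refs++; }

static void fake_window_release(FakeWindow *w)
{
    assert(w->refs > 0); /* releasing more often than acquiring is a bug */
    w->refs--;
}

/* Hypothetical stand-in for SDL_Vout plus its opaque block. */
typedef struct MiniVout {
    pthread_mutex_t mutex;
    FakeWindow *native_window; /* would live in SDL_Vout_Opaque */
} MiniVout;

static void mini_vout_init(MiniVout *vout)
{
    pthread_mutex_init(&vout->mutex, NULL);
    vout->native_window = NULL;
}

/* Replace the current window; passing NULL detaches the surface. */
static void mini_vout_set_window(MiniVout *vout, FakeWindow *window)
{
    pthread_mutex_lock(&vout->mutex);
    if (vout->native_window != window) {
        /* Acquire the new window before releasing the old one. */
        if (window)
            fake_window_acquire(window);
        if (vout->native_window)
            fake_window_release(vout->native_window);
        vout->native_window = window;
    }
    pthread_mutex_unlock(&vout->mutex);
}

static void mini_vout_destroy(MiniVout *vout)
{
    mini_vout_set_window(vout, NULL);
    pthread_mutex_destroy(&vout->mutex);
}
```

Setting the same window twice is a no-op in this sketch, so repeated setSurface() calls with an unchanged surface would not leak references.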

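3. How a frame's bmp gets assigned

In ff_ffplay.c#stream_component_open, decoder_start launches video_thread to begin decoding

-> software decoding ends up in ff_ffplay.c#ffplay_video_thread

-> hardware decoding ends up in ffpipenode_android_mediacodec_vdec.c#func_run_sync

-> both paths finally call queue_picture, which puts the frame into the frame_queue to wait for rendering.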

// Software decoding, ff_ffplay.c (reached through ffpipenode_ffplay_vdec.c)
static int ffplay_video_thread(void *arg) {
    AVFrame *frame = av_frame_alloc();

    for (;;) {
        ret = get_video_frame(ffp, frame); // software-decode one frame via avcodec_receive_frame
        queue_picture(ffp, frame, pts, duration, frame->pkt_pos, is->viddec.pkt_serial);
    }
}

// Hardware decoding, ffpipenode_android_mediacodec_vdec.c
static int func_run_sync(IJKFF_Pipenode *node) {
    int got_frame = 0;

    while (!q->abort_request) {
        drain_output_buffer(env, node, timeUs, &dequeue_count, frame, &got_frame);
        if (got_frame) {
            // reaches queue_picture through the wrapper declared in the header
            ffp_queue_picture(ffp, frame, pts, duration, av_frame_get_pkt_pos(frame), is->viddec.pkt_serial);
        }
    }
}

// Enqueue, ff_ffplay.c
static int
queue_picture(FFPlayer *ffp, AVFrame *src_frame, double pts, double duration, int64_t pos, int serial) {
    VideoState *is = ffp->is;
    Frame *vp;

    vp = frame_queue_peek_writable(&is->pictq); // get a writable slot
    if (!vp->bmp) {
        // record the geometry first: alloc_picture reads vp->width and vp->height
        vp->allocated = 0;
        vp->width = src_frame->width;
        vp->height = src_frame->height;
        vp->format = src_frame->format;
        alloc_picture(ffp, src_frame->format); // create bmp
    }

    if (vp->bmp) {
        SDL_VoutLockYUVOverlay(vp->bmp); // lock
        SDL_VoutFillFrameYUVOverlay(vp->bmp, src_frame); // calls func_fill_frame to "draw" the frame onto the final display layer
        SDL_VoutUnlockYUVOverlay(vp->bmp);

        vp->pts = pts;
        vp->duration = duration;
        vp->pos = pos;
        vp->serial = serial;
        vp->sar = src_frame->sample_aspect_ratio;
        vp->bmp->sar_num = vp->sar.num;
        vp->bmp->sar_den = vp->sar.den;

        frame_queue_push(&is->pictq); // once the slot is filled, frame_queue_push tells the FrameQueue to "commit" it
    }
    return 0;
}
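The peek-writable / fill / push sequence used by queue_picture is the classic producer side of a ring buffer. The sketch below shows that protocol in isolation, under simplifying assumptions: it is single-threaded, it returns an error when the queue is full (my understanding is that ffplay-style queues wait on a condition variable instead), and it leaves out the "keep last shown frame" bookkeeping. `MiniFrame`, `MiniFrameQueue`, and the `mini_queue_*` functions are hypothetical names, not ijkPlayer APIs.

```c
#include <assert.h>
#include <stddef.h>

#define MINI_QUEUE_SIZE 3

typedef struct MiniFrame {
    double pts;
    int serial;
} MiniFrame;

typedef struct MiniFrameQueue {
    MiniFrame slots[MINI_QUEUE_SIZE];
    int rindex; /* next slot to read */
    int windex; /* next slot to write */
    int size;   /* number of committed frames */
} MiniFrameQueue;

/* Return the slot the producer may fill, or NULL when the queue is full. */
static MiniFrame *mini_queue_peek_writable(MiniFrameQueue *q)
{
    assert(q != NULL);
    if (q->size >= MINI_QUEUE_SIZE)
        return NULL;
    return &q->slots[q->windex];
}

/* Commit the slot obtained from mini_queue_peek_writable(). */
static void mini_queue_push(MiniFrameQueue *q)
{
    assert(q->size < MINI_QUEUE_SIZE);
    q->windex = (q->windex + 1) % MINI_QUEUE_SIZE;
    q->size++;
}

/* Return the oldest committed frame without removing it, or NULL if empty. */
static MiniFrame *mini_queue_peek(MiniFrameQueue *q)
{
    assert(q != NULL);
    if (q->size == 0)
        return NULL;
    return &q->slots[q->rindex];
}

/* Drop the oldest committed frame so its slot can be reused. */
static void mini_queue_next(MiniFrameQueue *q)
{
    assert(q->size > 0);
    q->rindex = (q->rindex + 1) % MINI_QUEUE_SIZE;
    q->size--;
}

/* Producer side, mirroring the shape of queue_picture(). */
static int mini_queue_picture(MiniFrameQueue *q, double pts, int serial)
{
    MiniFrame *vp = mini_queue_peek_writable(q);
    if (!vp)
        return -1; /* queue full: this sketch fails instead of waiting */
    vp->pts = pts;
    vp->serial = serial;
    mini_queue_push(q);
    return 0;
}
```

The slot is filled in place and only becomes visible to the reader after `mini_queue_push()`, which is why the overlay can be reused across frames without copying the `MiniFrame` itself.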

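// Create bmp
static void alloc_picture(FFPlayer *ffp, int frame_format) {
    VideoState *is = ffp->is;
    Frame *vp = &is->pictq.queue[is->pictq.windex]; // the slot currently being written

    vp->bmp = SDL_Vout_CreateOverlay(vp->width, vp->height,
                                     frame_format,
                                     ffp->vout);
}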

// The create_overlay implementation behind it
static SDL_VoutOverlay *
func_create_overlay_l(int width, int height, int frame_format, SDL_Vout *vout) {
    switch (frame_format) {
    case IJK_AV_PIX_FMT__ANDROID_MEDIACODEC:
        return SDL_VoutAMediaCodec_CreateOverlay(width, height, vout);
    default:
        return SDL_VoutFFmpeg_CreateOverlay(width, height, frame_format, vout);
    }
}

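4. The display_overlay flow

Once a frame has been taken from the frame_queue, it is rendered to the screen. The code shows three rendering paths: MediaCodec, EGL, and ANativeWindow.

Later articles will look at how these APIs are used in Android's native layer and how they actually get the picture onto the screen.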

// ijksdl_vout_android_nativewindow.c
static int func_display_overlay_l(SDL_Vout *vout, SDL_VoutOverlay *overlay) {
    SDL_Vout_Opaque *opaque = vout->opaque;
    ANativeWindow *native_window = opaque->native_window;

    switch (overlay->format) {
    case SDL_FCC__AMC: {
        // only ANativeWindow support
        IJK_EGL_terminate(opaque->egl);
        return SDL_VoutOverlayAMediaCodec_releaseFrame_l(overlay, NULL, true); // MediaCodec
    }
    case SDL_FCC_RV24:
    case SDL_FCC_I420:
    case SDL_FCC_I444P10LE: {
        // only GLES support
        if (opaque->egl)
            return IJK_EGL_display(opaque->egl, native_window, overlay); // EGL
        break;
    }
    case SDL_FCC_YV12:
    case SDL_FCC_RV16:
    case SDL_FCC_RV32: {
        // both GLES & ANativeWindow support
        if (vout->overlay_format == SDL_FCC__GLES2 && opaque->egl)
            return IJK_EGL_display(opaque->egl, native_window, overlay); // EGL
        break;
    }
    }

    // fallback to ANativeWindow
    IJK_EGL_terminate(opaque->egl);
    return SDL_Android_NativeWindow_display_l(native_window, overlay); // draw directly into the ANativeWindow buffer
}
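The switch above is easier to reason about when the decision is separated from the side effects. The following sketch restates only the selection logic as a pure function that can be checked in isolation. The `MiniFormat` and `MiniRenderer` enums and `mini_pick_renderer()` are hypothetical; the real code switches on SDL_FCC_* fourcc values and calls the renderers directly. I420 stands in here for the whole GLES-only group.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical format tags; the real code uses SDL_FCC_* fourcc values. */
typedef enum MiniFormat {
    MINI_FMT_AMC,  /* MediaCodec output buffer */
    MINI_FMT_I420, /* GLES-only group (also RV24, I444P10LE in the source) */
    MINI_FMT_RV24,
    MINI_FMT_YV12, /* formats both GLES and ANativeWindow can handle */
    MINI_FMT_RV16,
    MINI_FMT_RV32,
    MINI_FMT_COUNT
} MiniFormat;

typedef enum MiniRenderer {
    MINI_RENDER_MEDIACODEC,
    MINI_RENDER_EGL,
    MINI_RENDER_NATIVE_WINDOW
} MiniRenderer;

/*
 * gles2_requested models `vout->overlay_format == SDL_FCC__GLES2`,
 * has_egl models `opaque->egl != NULL`.
 */
static MiniRenderer mini_pick_renderer(MiniFormat format,
                                       bool gles2_requested,
                                       bool has_egl)
{
    assert((int)format < (int)MINI_FMT_COUNT);

    switch (format) {
    case MINI_FMT_AMC:
        return MINI_RENDER_MEDIACODEC;
    case MINI_FMT_I420:
    case MINI_FMT_RV24:
        if (has_egl)
            return MINI_RENDER_EGL;
        break;
    case MINI_FMT_YV12:
    case MINI_FMT_RV16:
    case MINI_FMT_RV32:
        if (gles2_requested && has_egl)
            return MINI_RENDER_EGL;
        break;
    default:
        break;
    }

    /* Same fallback as the original switch: plain ANativeWindow. */
    return MINI_RENDER_NATIVE_WINDOW;
}
```

Note that, as in the original switch, a GLES-only format without an EGL context still falls through to the ANativeWindow path; the sketch mirrors that behaviour rather than judging whether the fallback can succeed.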